Govern generative AI in the enterprise with Amazon SageMaker Canvas

With the rise of powerful foundation models (FMs) powered by services such as Amazon Bedrock and Amazon SageMaker JumpStart, enterprises want to exercise granular control over which users and groups can access and use these models. This is crucial for compliance, security, and governance.

Launched in 2021, Amazon SageMaker Canvas is a visual point-and-click service that allows business analysts and citizen data scientists to use ready-to-use machine learning (ML) models and build custom ML models to generate accurate predictions without writing any code. SageMaker Canvas provides a no-code interface to consume a broad range of FMs from both services off the shelf, as well as to customize model responses, either through a Retrieval Augmented Generation (RAG) workflow that uses Amazon Kendra as a knowledge base or by fine-tuning with a labeled dataset. This simplifies access to generative artificial intelligence (AI) capabilities for business analysts and data scientists, who need neither deep technical knowledge nor code, thereby accelerating productivity.

In this post, we analyze strategies for governing access to Amazon Bedrock and SageMaker JumpStart models from within SageMaker Canvas using AWS Identity and Access Management (IAM) policies. You’ll learn how to create granular permissions to control the invocation of ready-to-use Amazon Bedrock models and prevent the provisioning of SageMaker endpoints with specified SageMaker JumpStart models. We provide code examples tailored to common enterprise governance scenarios. By the end, you’ll understand how to lock down access to generative AI capabilities based on your organizational requirements, maintaining secure and compliant use of cutting-edge AI within the no-code SageMaker Canvas environment.

This post covers an increasingly important topic as more powerful AI models become available, making it a valuable resource for ML operators, security teams, and anyone governing AI in the enterprise.

Solution overview

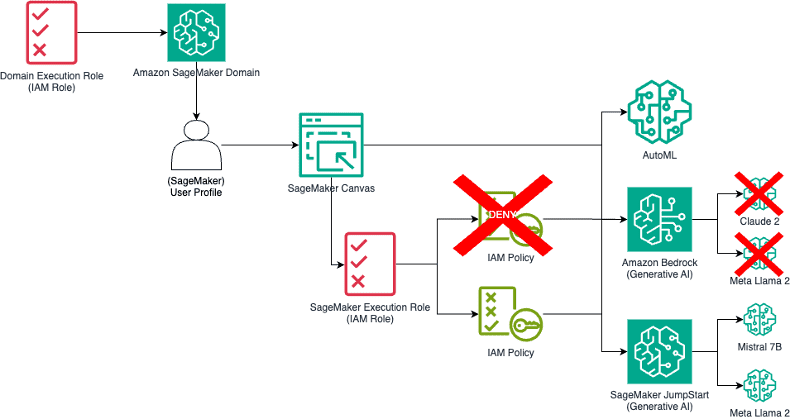

The following diagram illustrates the solution architecture.

The architecture of SageMaker Canvas allows business analysts and data scientists to interact with ML models without writing any code. However, managing access to these models is crucial for maintaining security and compliance. When a user interacts with SageMaker Canvas, the operations they perform, such as invoking a model or creating an endpoint, are run by the SageMaker service role. SageMaker user profiles can either inherit the default role from the SageMaker domain or have a user-specific role.

By customizing the policies attached to this role, you can control what actions are permitted or denied, thereby governing the access to generative AI capabilities. As part of this post, we discuss which IAM policies to use for this role to control operations within SageMaker Canvas, such as invoking models or creating endpoints, based on enterprise organizational requirements. We analyze two patterns for both Amazon Bedrock models and SageMaker JumpStart models: limiting access to all models from a service or limiting access to specific models.
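To decide which role to customize, it helps to know which role a given user actually runs under. The following is a minimal sketch of that resolution rule, assuming the inputs are dictionaries shaped like the responses of the SageMaker DescribeDomain and DescribeUserProfile APIs. The function name, account ID, and role names are hypothetical and used only for illustration.

```python
from typing import Optional


def resolve_execution_role(domain: dict, user_profile: dict) -> Optional[str]:
    """Return the role ARN that a user's Canvas operations would run under.

    Assumes `domain` looks like a DescribeDomain response and
    `user_profile` looks like a DescribeUserProfile response.
    """
    user_role = user_profile.get("UserSettings", {}).get("ExecutionRole")
    if user_role:
        # A user-specific role takes precedence over the domain default.
        return user_role
    return domain.get("DefaultUserSettings", {}).get("ExecutionRole")


# Hypothetical example data (the account ID and role names are made up).
domain = {
    "DefaultUserSettings": {
        "ExecutionRole": "arn:aws:iam::111122223333:role/CanvasDomainRole"
    }
}
analyst = {"UserSettings": {}}
data_scientist = {
    "UserSettings": {
        "ExecutionRole": "arn:aws:iam::111122223333:role/CanvasDataScienceRole"
    }
}

print(resolve_execution_role(domain, analyst))
print(resolve_execution_role(domain, data_scientist))
```

In this sketch the analyst falls back to the domain default role, while the data scientist uses the user-specific role, so a policy meant for both would need to be attached to both roles.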

Govern Amazon Bedrock access to SageMaker Canvas

In order to use Amazon Bedrock models, SageMaker Canvas calls the following Amazon Bedrock APIs:

- bedrock:InvokeModel – Invokes the model synchronously

- bedrock:InvokeModelWithResponseStream – Invokes the model synchronously, with the response being streamed over a socket, as illustrated in the following diagram

Additionally, SageMaker Canvas can call the bedrock:FineTune API to fine-tune large language models (LLMs) with Amazon Bedrock. At the time of writing, SageMaker Canvas only allows fine-tuning of Amazon Titan models.

To use a specific LLM from Amazon Bedrock, SageMaker Canvas uses the model ID of the chosen LLM as part of the API calls. At the time of writing, SageMaker Canvas supports the following models from Amazon Bedrock, grouped by model provider:

- AI21
  - Jurassic-2 Mid: j2-mid-v1
  - Jurassic-2 Ultra: j2-ultra-v1

- Amazon
  - Titan: titan-text-premier-v1:*
  - Titan Large: titan-text-lite-v1
  - Titan Express: titan-text-express-v1

- Anthropic
  - Claude 2: claude-v2
  - Claude Instant: claude-instant-v1

- Cohere
  - Command Text: command-text-*
  - Command Light: command-light-text-*

- Meta
  - Llama 2 13B: llama2-13b-chat-v1
  - Llama 2 70B: llama2-70b-chat-v1

For the complete list of model IDs for Amazon Bedrock, see Amazon Bedrock model IDs.

Limit access to all Amazon Bedrock models

To restrict access to all Amazon Bedrock models, you can modify the SageMaker role to explicitly deny these APIs. This makes sure no user can invoke any Amazon Bedrock model through SageMaker Canvas.

The following is an example IAM policy to achieve this:

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Deny",
      "Action": [
        "bedrock:InvokeModel",
        "bedrock:InvokeModelWithResponseStream"
      ],
      "Resource": "*"
    }
  ]
}

The policy uses the following parameters:

- "Effect": "Deny" specifies that the following actions are denied

- "Action": ["bedrock:InvokeModel", "bedrock:InvokeModelWithResponseStream"] specifies the Amazon Bedrock APIs that are denied

- "Resource": "*" indicates that the denial applies to all Amazon Bedrock models
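If you manage roles programmatically, the same policy can be built and attached from code. The following is a minimal sketch, not a definitive implementation: the helper names are hypothetical, and the attach step assumes boto3 is installed and that the caller has credentials with permission to call the IAM PutRolePolicy API.

```python
import json

BEDROCK_INVOKE_ACTIONS = [
    "bedrock:InvokeModel",
    "bedrock:InvokeModelWithResponseStream",
]


def deny_all_bedrock_policy() -> dict:
    """Build a policy document that denies invocation of every Bedrock model."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Deny",
                "Action": BEDROCK_INVOKE_ACTIONS,
                "Resource": "*",
            }
        ],
    }


def attach_inline_policy(role_name: str, policy_name: str, policy: dict) -> None:
    """Attach `policy` inline to an IAM role (needs boto3 and AWS credentials)."""
    import boto3  # imported lazily so the rest of the sketch runs without it

    boto3.client("iam").put_role_policy(
        RoleName=role_name,
        PolicyName=policy_name,
        PolicyDocument=json.dumps(policy),
    )


policy_json = json.dumps(deny_all_bedrock_policy(), indent=2)
print(policy_json)
```

Because an explicit deny overrides any allow, this statement takes effect even if other policies attached to the same role grant these actions.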

Limit access to specific Amazon Bedrock models

You can extend the preceding IAM policy to restrict access to specific Amazon Bedrock models by listing their ARNs, which include the model IDs, in the Resource element of the policy. This way, users can invoke only the models that are not listed.

The following is an example of the extended IAM policy:

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Deny",
      "Action": [
        "bedrock:InvokeModel",
        "bedrock:InvokeModelWithResponseStream"
      ],
      "Resource": [
        "arn:aws:bedrock:<region>::foundation-model/<model-id-1>",
        "arn:aws:bedrock:<region>::foundation-model/<model-id-2>"
      ]
    }
  ]
}

In this policy, the Resource array lists the specific Amazon Bedrock models that are denied. Provide the AWS Region, account, and model IDs appropriate for your environment.
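A small helper can generate the Resource entries from a list of model IDs so that the ARNs stay consistent. The sketch below assumes the ARN layout used in the preceding policy, where the account field is empty for foundation models. The function names are hypothetical, and the model IDs in the example are illustrative; check them against the Amazon Bedrock model ID list for your Region.

```python
import json
from typing import Iterable


def foundation_model_arn(model_id: str, region: str = "*") -> str:
    """Build a foundation model ARN; the account field is left empty."""
    return f"arn:aws:bedrock:{region}::foundation-model/{model_id}"


def deny_bedrock_models_policy(model_ids: Iterable[str], region: str = "*") -> dict:
    """Build a policy document that denies invocation of the given models only."""
    resources = [foundation_model_arn(model_id, region) for model_id in model_ids]
    if not resources:
        raise ValueError("provide at least one model ID")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Deny",
                "Action": [
                    "bedrock:InvokeModel",
                    "bedrock:InvokeModelWithResponseStream",
                ],
                "Resource": resources,
            }
        ],
    }


# Illustrative model IDs; verify them for your environment.
denied = deny_bedrock_models_policy(
    ["anthropic.claude-v2", "meta.llama2-70b-chat-v1"], region="us-east-1"
)
print(json.dumps(denied, indent=2))
```

Passing region="*" (the default in this sketch) applies the denial in every Region; pass a specific Region to scope it more narrowly.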

Govern SageMaker JumpStart access to SageMaker Canvas

For SageMaker Canvas to be able to consume LLMs from SageMaker JumpStart, it must perform the following operations:

- Select the LLM from SageMaker Canvas or from the list of JumpStart Model IDs (link below).

- Create an endpoint configuration and deploy the LLM on a real-time endpoint.

- Invoke the endpoint to generate the prediction.

The following diagram illustrates this workflow.

For a list of available JumpStart model IDs, see JumpStart Available Model Table. At the time of writing, SageMaker Canvas supports the following model IDs:

- huggingface-textgeneration1-mpt-7b-*

- huggingface-llm-mistral-*

- meta-textgeneration-llama-2-*

- huggingface-llm-falcon-*

- huggingface-textgeneration-dolly-v2-*

- huggingface-text2text-flan-t5-*

To identify the right model from SageMaker JumpStart, SageMaker Canvas passes aws:RequestTag/sagemaker-sdk:jumpstart-model-id as part of the endpoint configuration. To learn more about other techniques to limit access to SageMaker JumpStart models using IAM permissions, refer to Manage Amazon SageMaker JumpStart foundation model access with private hubs.

Configure permissions to deploy endpoints through the UI

On the domain configuration page of the SageMaker console, you can allow SageMaker Canvas to deploy SageMaker endpoints. This option also enables deployment of real-time endpoints for classic ML models, such as time series forecasting or classification. To enable model deployment, complete the following steps:

- On the Amazon SageMaker console, navigate to your domain.

- On the Domain details page, choose the App Configurations tab.

- In the Canvas section, choose Edit.

- Turn on Enable direct deployment of Canvas models in the ML Ops configuration section.

Limit access to all SageMaker JumpStart models

To limit access to all SageMaker JumpStart models, configure the SageMaker role to block the CreateEndpointConfig and CreateEndpoint APIs on any SageMaker JumpStart Model ID. This prevents the creation of endpoints using these models. See the following code:

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Deny",
      "Action": [
        "sagemaker:CreateEndpointConfig",
        "sagemaker:CreateEndpoint"
      ],
      "Resource": "*",
      "Condition": {
        "Null": {
          "aws:RequestTag/sagemaker-sdk:jumpstart-model-id": "false"
        }
      }
    }
  ]
}

This policy uses the following parameters:

- "Effect": "Deny" specifies that the following actions are denied

- "Action": ["sagemaker:CreateEndpointConfig", "sagemaker:CreateEndpoint"] specifies the SageMaker APIs that are denied

- The "Null" condition operator checks whether a key is present in the request. It does not check the value of the key, only its presence or absence, and it takes "true" (the key is absent) or "false" (the key is present) as its value

- "aws:RequestTag/sagemaker-sdk:jumpstart-model-id": "false" matches any request that carries this tag, so the denial applies to all SageMaker JumpStart models
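The semantics of the Null operator are easy to get backwards, so the following sketch mimics them locally for a single key. It is a simplified illustration, not the IAM policy engine: the function name is hypothetical, the request context is modeled as a plain dictionary, and the model ID in the example is illustrative.

```python
TAG_KEY = "aws:RequestTag/sagemaker-sdk:jumpstart-model-id"


def null_condition_matches(
    condition_value: str, request_context: dict, key: str = TAG_KEY
) -> bool:
    """Mimic the IAM Null operator for one key.

    "true"  matches when the key is absent from the request.
    "false" matches when the key is present in the request.
    """
    key_absent = key not in request_context
    if condition_value == "true":
        return key_absent
    if condition_value == "false":
        return not key_absent
    raise ValueError('the Null operator expects "true" or "false"')


# A JumpStart deployment carries the model ID tag (illustrative value).
jumpstart_request = {TAG_KEY: "huggingface-llm-falcon-7b-bf16"}
# A custom Canvas model deployment is assumed not to carry the tag.
custom_model_request = {}

# With "false", a Deny statement applies only to the tagged JumpStart request.
print(null_condition_matches("false", jumpstart_request))
print(null_condition_matches("false", custom_model_request))
```

Under these assumptions, a Deny statement with the Null condition set to "false" blocks JumpStart deployments while leaving untagged deployments, such as classic Canvas models, unaffected.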

Limit access and deployment for specific SageMaker JumpStart models

Similar to Amazon Bedrock models, you can limit access to specific SageMaker JumpStart models by specifying their model IDs in the IAM policy. To achieve this, an administrator needs to restrict users from creating endpoints with unauthorized models. For example, to deny access to Hugging Face FLAN T5 models and MPT models, use the following code:

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Deny",
      "Action": [
        "sagemaker:CreateEndpointConfig",
        "sagemaker:CreateEndpoint"
      ],
      "Resource": "*",
      "Condition": {
        "StringLike": {
          "aws:RequestTag/sagemaker-sdk:jumpstart-model-id": [
            "huggingface-textgeneration1-mpt-7b-*",
            "huggingface-text2text-flan-t5-*"
          ]
        }
      }
    }
  ]
}

In this policy, the "StringLike" condition allows for pattern matching, enabling the policy to apply to multiple model IDs with similar prefixes.
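Before attaching such a policy, you may want to check which model IDs a set of patterns would catch. The following sketch approximates StringLike matching locally, assuming its wildcard rules: `*` matches any run of characters, `?` matches exactly one, and matching is case-sensitive. The function names are hypothetical, and the full model IDs in the example are illustrative.

```python
import re
from typing import Iterable

DENIED_PATTERNS = [
    "huggingface-textgeneration1-mpt-7b-*",
    "huggingface-text2text-flan-t5-*",
]


def string_like(pattern: str, value: str) -> bool:
    """Approximate IAM StringLike: '*' is any run of characters, '?' is one."""
    regex = "".join(
        ".*" if ch == "*" else "." if ch == "?" else re.escape(ch)
        for ch in pattern
    )
    return re.fullmatch(regex, value, flags=re.DOTALL) is not None


def is_denied(model_id: str, patterns: Iterable[str] = DENIED_PATTERNS) -> bool:
    """A request is denied if its model ID matches any listed pattern."""
    return any(string_like(pattern, model_id) for pattern in patterns)


# Illustrative model IDs; verify them against the JumpStart model table.
for model_id in [
    "huggingface-text2text-flan-t5-xl",
    "huggingface-textgeneration1-mpt-7b-bf16",
    "meta-textgeneration-llama-2-7b-f",
]:
    print(model_id, "denied" if is_denied(model_id) else "not denied")
```

A condition value that is a list behaves as a logical OR, which is why `is_denied` returns true as soon as any single pattern matches.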

Clean up

To avoid incurring future workspace instance charges, log out of SageMaker Canvas when you’re done using the application. Optionally, you can configure SageMaker Canvas to automatically shut down when idle.

Conclusion

In this post, we demonstrated how SageMaker Canvas invokes LLMs powered by Amazon Bedrock and SageMaker JumpStart, and how enterprises can govern access to these models, whether you want to limit access to specific models or to any model from either service. You can combine the IAM policies shown in this post in the same IAM role to provide complete control.
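One way to combine the policies is to merge their statements into a single policy document before attaching it to the role. The following is a minimal sketch under that assumption; the function name is hypothetical, and the Bedrock ARN and JumpStart pattern in the example are illustrative.

```python
import json


def combine_policies(*policies: dict) -> dict:
    """Merge the statements of several policy documents into one document."""
    statements = []
    for policy in policies:
        statement = policy["Statement"]
        # A policy may hold a single statement object or a list of them.
        statements.extend(statement if isinstance(statement, list) else [statement])
    return {"Version": "2012-10-17", "Statement": statements}


bedrock_deny = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Deny",
            "Action": ["bedrock:InvokeModel", "bedrock:InvokeModelWithResponseStream"],
            "Resource": ["arn:aws:bedrock:*::foundation-model/anthropic.claude-v2"],
        }
    ],
}
jumpstart_deny = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Deny",
            "Action": ["sagemaker:CreateEndpointConfig", "sagemaker:CreateEndpoint"],
            "Resource": "*",
            "Condition": {
                "StringLike": {
                    "aws:RequestTag/sagemaker-sdk:jumpstart-model-id": [
                        "huggingface-text2text-flan-t5-*"
                    ]
                }
            },
        }
    ],
}

combined = combine_policies(bedrock_deny, jumpstart_deny)
print(json.dumps(combined, indent=2))
```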

By following these guidelines, enterprises can make sure their use of generative AI models is both secure and compliant with organizational policies. This approach not only safeguards sensitive data but also empowers business analysts and data scientists to harness the full potential of AI within a controlled environment.

Now that your environment is configured according to the enterprise standard, we suggest reading the following posts to learn what SageMaker Canvas enables you to do with generative AI:

- Prioritizing employee well-being: An innovative approach with generative AI and Amazon SageMaker Canvas

- Fine-tune and deploy language models with Amazon SageMaker Canvas and Amazon Bedrock

- Analyze security findings faster with no-code data preparation using generative AI and Amazon SageMaker Canvas

- Overcoming common contact center challenges with generative AI and Amazon SageMaker Canvas

- Empower your business users to extract insights from company documents using Amazon SageMaker Canvas and Generative AI

About the Authors

Davide Gallitelli is a Senior Specialist Solutions Architect GenAI/ML. He is Italian, based in Brussels, and works closely with customers all around the world on Generative AI workloads and Low-Code No-Code ML technology. He has been a developer since a very young age, starting to code at the age of 7. He started learning AI/ML in his later years of university, and has been in love with it ever since.

Lijan Kuniyil is a Senior Technical Account Manager at AWS. Lijan enjoys helping AWS enterprise customers build highly reliable and cost-effective systems with operational excellence. Lijan has more than 25 years of experience in developing solutions for financial and consulting companies.

Saptarshi Banerjee serves as a Senior Partner Solutions Architect at AWS, collaborating closely with AWS Partners to design and architect mission-critical solutions. With a specialization in generative AI, AI/ML, serverless architecture, and cloud-based solutions, Saptarshi is dedicated to enhancing performance, innovation, scalability, and cost-efficiency for AWS Partners within the cloud ecosystem.
