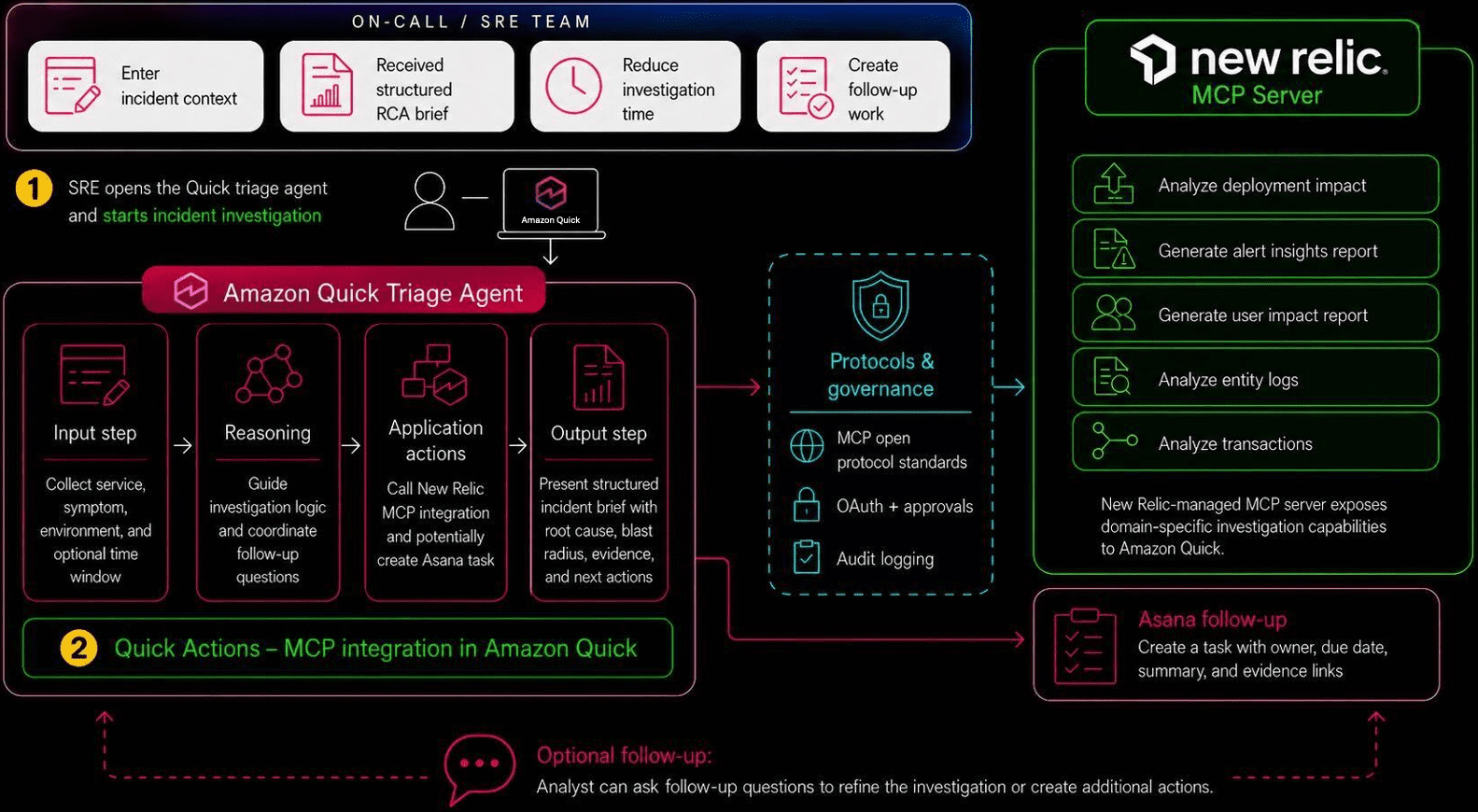

Incident triage is time-sensitive because site reliability engineers (SREs) and support engineers often need to collect evidence, assess user impact, and create follow-up work across separate tools. With Amazon Quick and New Relic, you can coordinate those investigation and handoff steps in a single conversational workflow. This post shows

Shared by AWS Machine Learning June 9, 2026

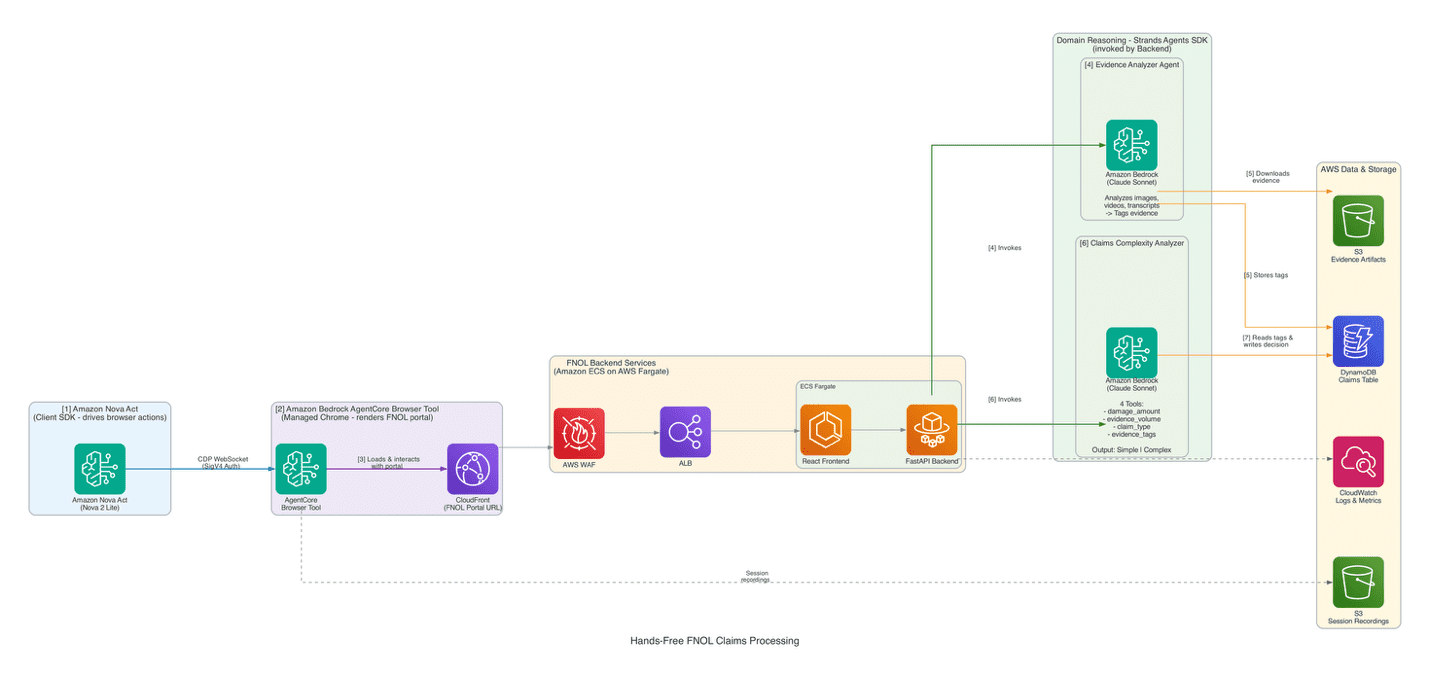

Turning multimodal first notice of loss (FNOL) evidence into tagged, decision-ready intake so adjusters start with context instead of raw artifacts. Manual FNOL processing consumes significant expert time on repetitive tasks because unstructured, multimodal evidence must be interpreted through portals designed for human interaction. Photos captured in the field,

Shared by AWS Machine Learning June 9, 2026

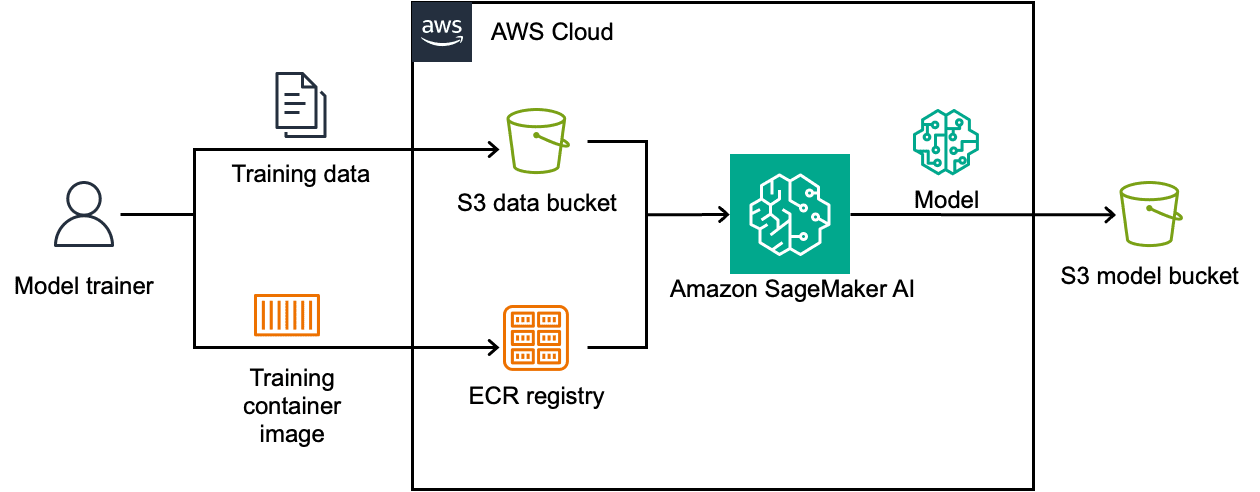

Physical AI is moving from research into production. Robots are increasingly trained in high-fidelity simulation before being deployed to factories, warehouses, and logistics centers, because training in the real world is slow, expensive, and often unsafe, while GPU-accelerated simulation can compress months of learning into hours. This shifts the

Shared by AWS Machine Learning June 9, 2026

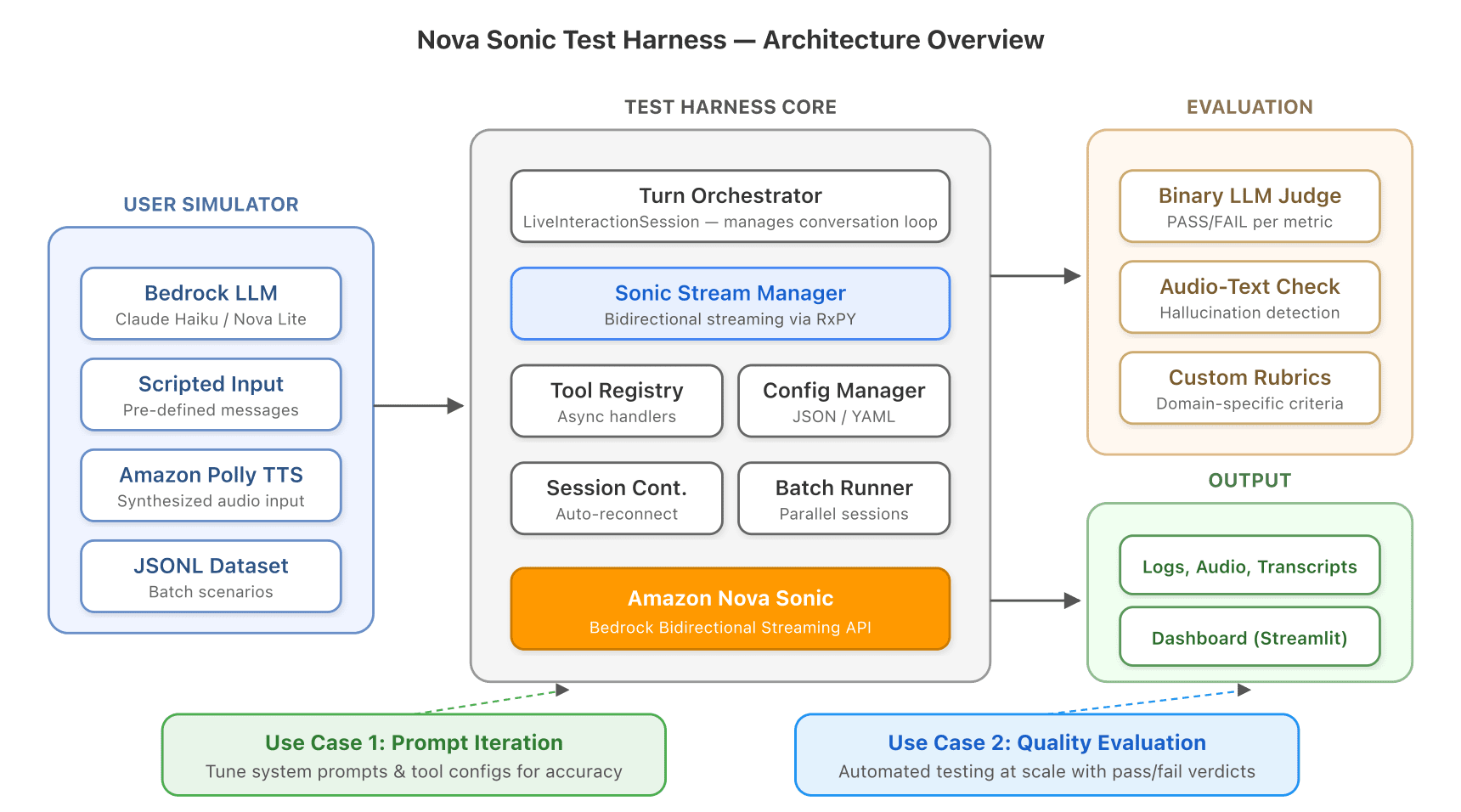

Voice agents are transforming how businesses interact with customers, handling appointment bookings, order inquiries, account management, and more through natural spoken conversation. But as these agents grow more capable, a fundamental challenge emerges: how do you test them? Unlike text-based chatbots where you can script inputs and assert outputs,

Shared by AWS Machine Learning June 8, 2026

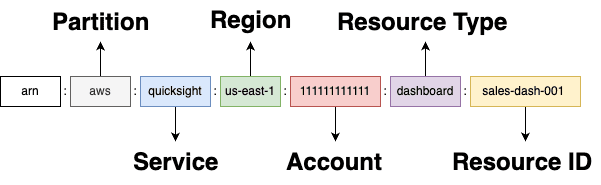

You migrate dashboards from development to production, but the permissions don’t carry over. You share a dashboard with your Finance team, but they keep getting “access denied.” You set up namespaces for multi-tenant isolation, and the same username works in one namespace but not another. These are real tasks

Shared by AWS Machine Learning June 8, 2026

Machine learning (ML) inference often requires processing sensitive data—medical records, proprietary business information, or personal communications. What if you could run ML inference in the cloud while hiding your data from the cloud itself? More specifically, what if you could enforce that your data stayed encrypted throughout the entire

Shared by AWS Machine Learning June 8, 2026

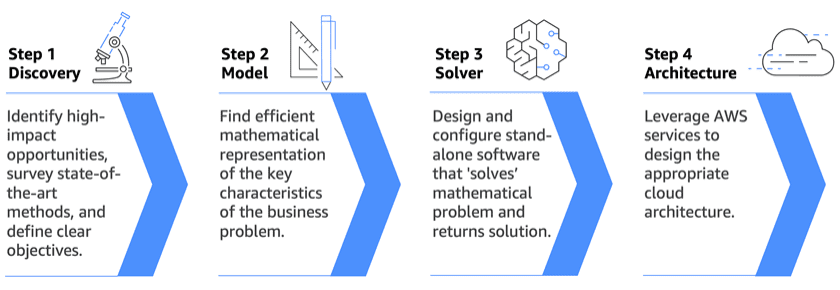

The science of optimal decisions — and how leading organizations are applying it. Every enterprise faces decisions that are too complex for intuition or manual decision-making alone. Which delivery routes minimize cost while meeting next-day promises? How should hundreds of robots sequence movements across a factory floor without collision?

Shared by AWS Machine Learning June 8, 2026

There’s a habit going around. Walking from one meeting to the next with the laptop cradled half-open. Sitting through a 1:1 with the lid propped just enough to keep the screen alive. Riding home while holding your laptop because it must stay running. Anywhere except closed on a desk,

Shared by AWS Machine Learning June 8, 2026

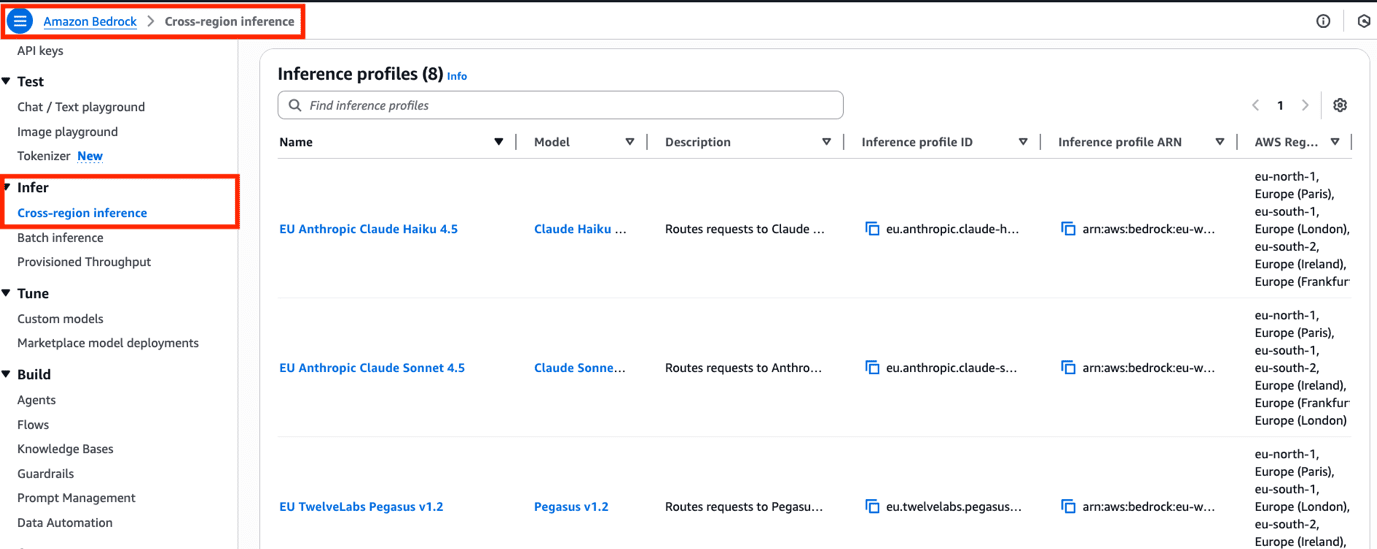

With access to the latest generative AI models and high-performance accelerated compute in high global demand, AWS customers need tools to take advantage of model availability and capacity across multiple AWS Regions, while still meeting their security and privacy requirements. Cross-Region inference (CRIS) on Amazon Bedrock meets these needs

Shared by AWS Machine Learning June 8, 2026

Here are Google’s latest AI updates from May 2026. Original source: blog.google/technology/ai/

Shared by AWS Machine Learning June 9, 2026
