Implement Amazon SageMaker domain cross-Region disaster recovery using custom Amazon EFS instances

Amazon SageMaker is a cloud-based machine learning (ML) platform within the AWS ecosystem that offers developers a seamless and convenient way to build, train, and deploy ML models. Extensively used by data scientists and ML engineers across various industries, this robust tool provides high availability and uninterrupted access for its users. When working with SageMaker, your environment resides within a SageMaker domain, which encompasses critical components like Amazon Elastic File System (Amazon EFS) for storage, user profiles, and a diverse array of security configurations. This comprehensive setup enables collaborative efforts by allowing users to store, share, and access notebooks, Python files, and other essential artifacts.

In 2023, SageMaker announced the release of the new SageMaker Studio, which offers two new types of applications: JupyterLab and Code Editor. The old SageMaker Studio was renamed to SageMaker Studio Classic. Unlike other applications that share one single storage volume in SageMaker Studio Classic, each JupyterLab and Code Editor instance has its own Amazon Elastic Block Store (Amazon EBS) volume. For more information about this architecture, see New – Code Editor, based on Code-OSS VS Code Open Source now available in Amazon SageMaker Studio. Another new feature was the ability to bring your own EFS instance, which enables you to attach and detach a custom EFS instance.

A SageMaker domain exclusive to the new SageMaker Studio is composed of the following entities:

- User profiles

- Applications including JupyterLab, Code Editor, RStudio, Canvas, and MLflow

- A variety of security, application, policy, and Amazon Virtual Private Cloud (Amazon VPC) configurations

As a precautionary measure, some customers may want to ensure continuous operation of SageMaker in the unlikely event of a Regional impairment of the SageMaker service. This solution uses the built-in cross-Region replication capability of Amazon EFS as a disaster recovery mechanism, providing continued access to your SageMaker domain data across multiple Regions. Replicating your data and resources across multiple Regions helps safeguard against Regional outages, natural disasters, and unforeseen technical failures, thereby providing business continuity and disaster recovery capabilities. This setup is particularly important for mission-critical and time-sensitive workloads, so data scientists and ML engineers can continue their work with minimal disruption.

The solution illustrated in this post focuses on the new SageMaker Studio experience, particularly private JupyterLab and Code Editor spaces. Although the code base doesn’t include shared spaces, the solution is straightforward to extend with the same concept. In this post, we guide you through a step-by-step process to migrate and safeguard your new SageMaker domain in Amazon SageMaker Studio from one active AWS Region to another AWS Region, including all associated user profiles and files. By using a combination of AWS services, you can implement this feature effectively, overcoming the current limitations within SageMaker.

Solution overview

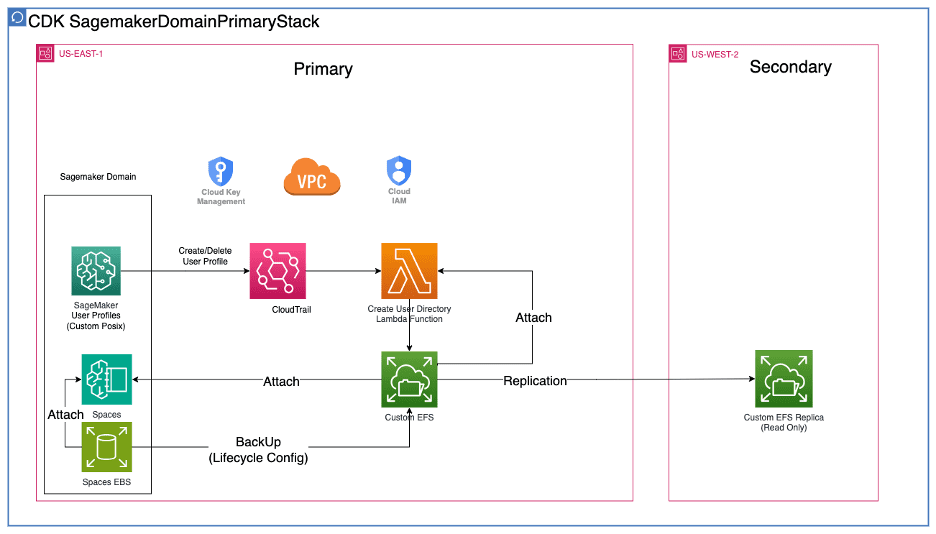

In active-passive mode, the SageMaker domain infrastructure is provisioned only in the primary AWS Region. Data is backed up in near real time using Amazon EFS replication. Diagram 1 illustrates this architecture.

Diagram 1:

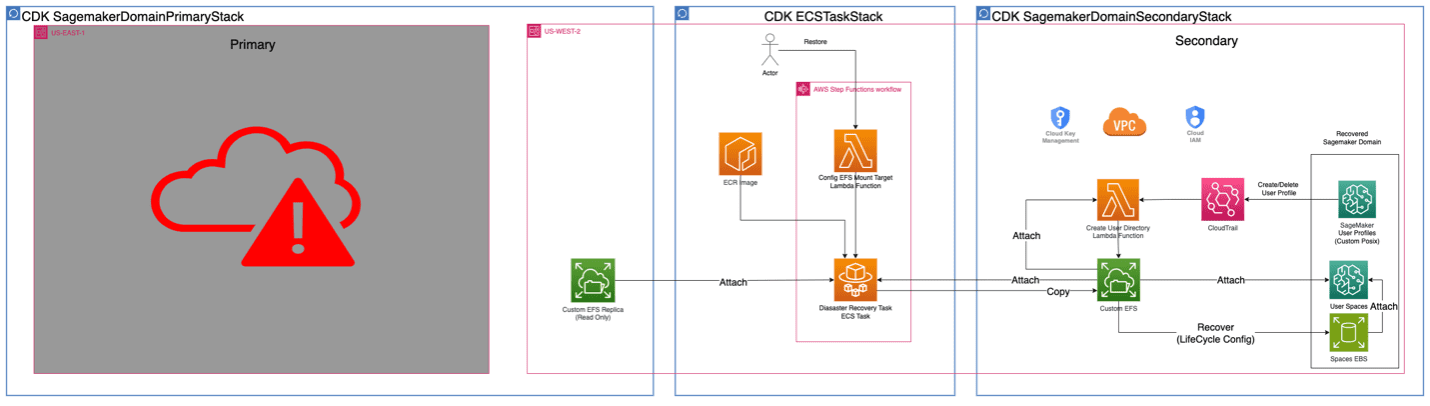

When the primary Region is down, a new domain is launched in the secondary Region, and an AWS Step Functions workflow runs to restore data as seen in diagram 2.

Diagram 2:

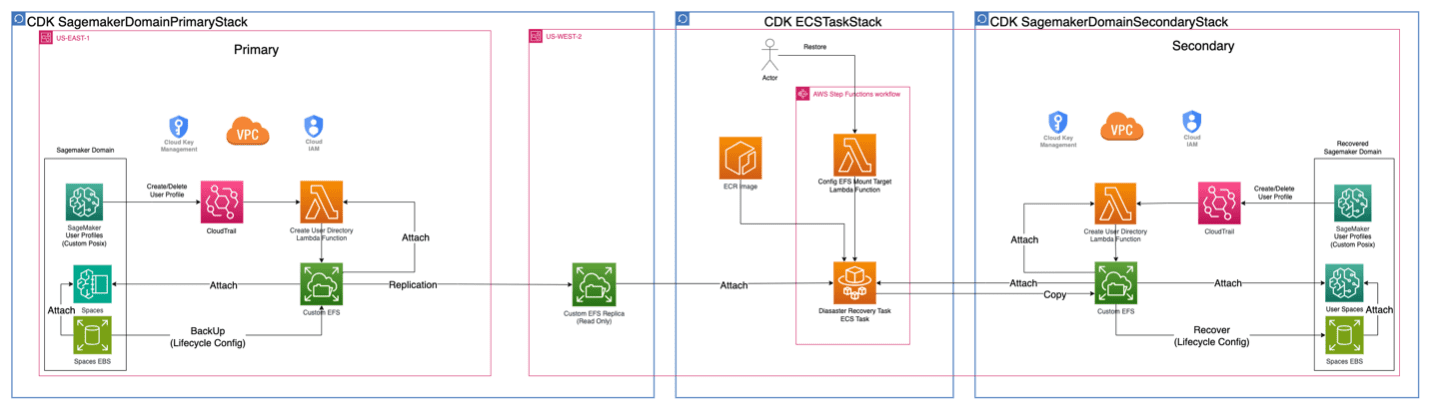

In active-active mode, depicted in diagram 3, the SageMaker domain infrastructure is provisioned in two AWS Regions. Data is backed up in near real time using Amazon EFS replication. The data sync is completed by the Step Functions workflow, which can run on demand, on a schedule, or in response to an event.

Diagram 3:

You can find the complete code sample in the GitHub repo.

Building on the benefits of upgraded SageMaker domains, we developed a fast and robust cross-Region disaster recovery solution that uses Amazon EFS to back up and recover user data stored in SageMaker Studio applications. In addition, domain user profiles and their respective custom POSIX user configurations are managed by a YAML file in an AWS Cloud Development Kit (AWS CDK) code base to make sure domain entities in the secondary AWS Region are identical to those in the primary AWS Region. Because user-level custom EFS instances are only configurable through programmatic API calls, creating users on the AWS Management Console is not considered in our context.
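To make the programmatic user setup more concrete, the following is a minimal sketch of how one user entry (as it might look after the YAML file is parsed) could be translated into arguments for the SageMaker `CreateUserProfile` API. The entry keys (`name`, `uid`, `gid`, `efs_id`, `efs_path`) and both function names are hypothetical and are not taken from the repo, which provisions these resources through the AWS CDK rather than direct API calls.

```python
from typing import Any


def build_user_profile_request(domain_id: str, user: dict[str, Any]) -> dict[str, Any]:
    """Translate one parsed user entry into CreateUserProfile keyword arguments.

    The keys read from `user` are hypothetical; adapt them to your own YAML schema.
    """
    return {
        "DomainId": domain_id,
        "UserProfileName": user["name"],
        "UserSettings": {
            # Same UID/GID in both Regions keeps file ownership consistent on EFS.
            "CustomPosixUserConfig": {"Uid": user["uid"], "Gid": user["gid"]},
            # Attach the user's custom EFS file system to the profile.
            "CustomFileSystemConfigs": [
                {
                    "EFSFileSystemConfig": {
                        "FileSystemId": user["efs_id"],
                        "FileSystemPath": user["efs_path"],
                    }
                }
            ],
        },
    }


def create_user_profiles(domain_id: str, users: list[dict[str, Any]], region: str) -> None:
    """Create every profile in the given Region (requires boto3 and AWS credentials)."""
    import boto3  # imported lazily so the request builder can be used without boto3

    client = boto3.client("sagemaker", region_name=region)
    for user in users:
        client.create_user_profile(**build_user_profile_request(domain_id, user))
```

Keeping the request builder separate from the API call makes it possible to generate identical profile definitions for the primary and secondary Regions from the same source of truth.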

Backup

Backup is performed within the primary AWS Region. There are two types of sources: an EBS space and a custom EFS instance.

For an EBS space, a lifecycle config is attached to JupyterLab or Code Editor for the purposes of backing up files. Every time the user opens the application, the lifecycle config takes a snapshot of its EBS spaces and stores them in the custom EFS instance using an rsync command.
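As an illustration of this backup step, the sketch below generates a small shell script that copies the application's home directory to a folder on the custom EFS mount with `rsync`, then registers it as a Studio lifecycle configuration. The directory paths passed in and the function names are assumptions for illustration only; the actual script and mount paths in the repo may differ.

```python
import base64


def build_backup_script(home_dir: str, backup_dir: str) -> str:
    """Return a shell script that mirrors home_dir into backup_dir using rsync."""
    return "\n".join(
        [
            "#!/bin/bash",
            "set -eu",
            f'mkdir -p "{backup_dir}"',
            # -a preserves permissions and timestamps; trailing slashes copy contents.
            f'rsync -a "{home_dir}/" "{backup_dir}/"',
            "",
        ]
    )


def encode_lifecycle_content(script: str) -> str:
    """Lifecycle configuration content must be supplied base64-encoded."""
    return base64.b64encode(script.encode("utf-8")).decode("ascii")


def register_lifecycle_config(name: str, script: str, app_type: str, region: str) -> str:
    """Register the script for an app type such as 'JupyterLab' or 'CodeEditor'.

    Requires boto3 and AWS credentials; returns the new configuration's ARN.
    """
    import boto3  # imported lazily so the helpers above work without boto3

    client = boto3.client("sagemaker", region_name=region)
    response = client.create_studio_lifecycle_config(
        StudioLifecycleConfigName=name,
        StudioLifecycleConfigContent=encode_lifecycle_content(script),
        StudioLifecycleConfigAppType=app_type,
    )
    return response["StudioLifecycleConfigArn"]
```

Because the script runs each time the application starts, the backup on Amazon EFS is only as recent as the last application launch.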

A custom EFS instance is automatically replicated to its read-only replica in the secondary AWS Region.
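For readers who want to see what enabling this replication looks like outside of the AWS CDK, here is a minimal sketch using the Amazon EFS `CreateReplicationConfiguration` API through boto3. The function names are hypothetical, and the repo may configure replication differently (for example, through CloudFormation resources).

```python
from typing import Any


def build_replication_request(source_fs_id: str, destination_region: str) -> dict[str, Any]:
    """Build the arguments for replicating one file system to another Region."""
    return {
        "SourceFileSystemId": source_fs_id,
        # With only a Region given, EFS creates the destination file system itself.
        "Destinations": [{"Region": destination_region}],
    }


def enable_replication(source_fs_id: str, primary_region: str, destination_region: str) -> list[dict[str, Any]]:
    """Start replication from the primary Region (requires boto3 and AWS credentials).

    Returns the destination descriptions reported by the API.
    """
    import boto3  # imported lazily so the request builder works without boto3

    client = boto3.client("efs", region_name=primary_region)
    response = client.create_replication_configuration(
        **build_replication_request(source_fs_id, destination_region)
    )
    return response["Destinations"]
```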

Recovery

For recovery in the secondary AWS Region, a SageMaker domain with the same user profiles and spaces is deployed, and an empty custom EFS instance is created and attached to it. Then an Amazon Elastic Container Service (Amazon ECS) task runs to copy all the backup files to the empty custom EFS instance. As the last step, a lifecycle config script runs to restore the Amazon EBS snapshots before the SageMaker space launches.
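The copy step performed by the Amazon ECS task can be pictured with the following sketch, which assumes both the read-only replica and the new empty file system are mounted as local directories inside the container. The function name and mount layout are hypothetical, and the task in the repo may use a different tool to perform the copy.

```python
import shutil
from pathlib import Path


def restore_backup(replica_root: Path, target_root: Path) -> int:
    """Copy everything under replica_root into target_root.

    Returns the number of files found in the replica. File contents, modes,
    and timestamps are copied; ownership is not changed by copytree, so a
    real task would also need to handle UID/GID as appropriate.
    """
    shutil.copytree(replica_root, target_root, dirs_exist_ok=True)
    return sum(1 for path in replica_root.rglob("*") if path.is_file())
```

Passing `dirs_exist_ok=True` lets the copy write into a mount point that already exists, which is the case for an attached but empty file system.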

Prerequisites

Complete the following prerequisite steps:

- Clone the GitHub repo to your local machine by running the following command in your terminal:

- Navigate to the project working directory and set up the Python virtual environment:

- Install the required dependencies:

- Bootstrap your AWS account and set up the AWS CDK environment in both Regions:

- Synthesize the AWS CloudFormation templates by running the following code:

- Configure the necessary arguments in the constants.py file:

- Set the primary Region in which you want to deploy the solution.

- Set the secondary Region in which you want to recover the primary domain.

- Replace the account ID variable with your AWS account ID.
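The three settings listed for `constants.py` can be pictured with the sketch below. The class and field names are hypothetical and do not reflect the actual variable names in the repo; the sketch only shows the kind of values required and two sanity checks that are easy to get wrong. The helper returns the `aws://ACCOUNT/REGION` environment strings that `cdk bootstrap` accepts, one per Region.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class DisasterRecoveryConfig:
    """Hypothetical container for the values set in constants.py."""

    primary_region: str
    secondary_region: str
    account_id: str

    def __post_init__(self) -> None:
        if self.primary_region == self.secondary_region:
            raise ValueError("primary and secondary Regions must differ")
        if not re.fullmatch(r"\d{12}", self.account_id):
            raise ValueError("AWS account IDs are 12 digits")

    def bootstrap_targets(self) -> list[str]:
        """Environments to pass to `cdk bootstrap`, primary Region first."""
        return [
            f"aws://{self.account_id}/{region}"
            for region in (self.primary_region, self.secondary_region)
        ]
```

For example, `DisasterRecoveryConfig("us-east-1", "us-west-2", "123456789012").bootstrap_targets()` yields one bootstrap target for each of the two Regions.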

Deploy the solution

Complete the following steps to deploy the solution:

- Deploy the primary SageMaker domain:

- Deploy the secondary SageMaker domain:

- Deploy the disaster recovery Step Functions workflow:

- Launch the application with the custom EFS instance attached and add files to the application’s EBS volume and custom EFS instance.

Test the solution

Complete the following steps to test the solution:

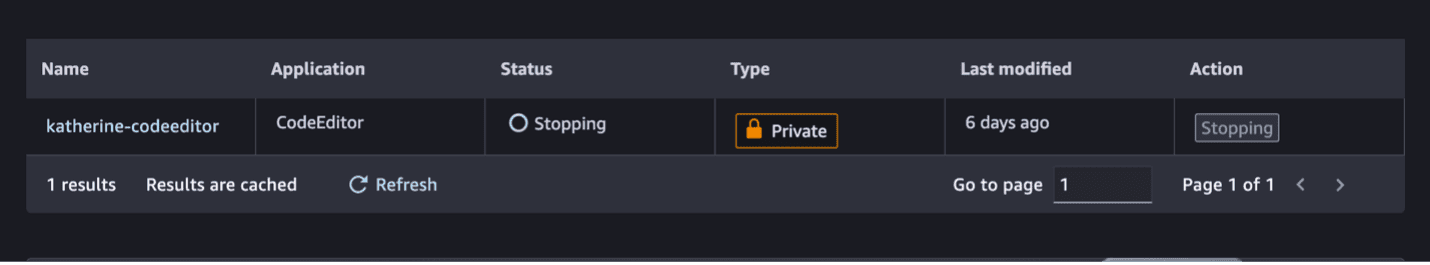

- Add test files using Code Editor or JupyterLab in the primary Region.

- Stop and restart the application.

This invokes the lifecycle config script, which takes a snapshot of the application’s EBS volume.

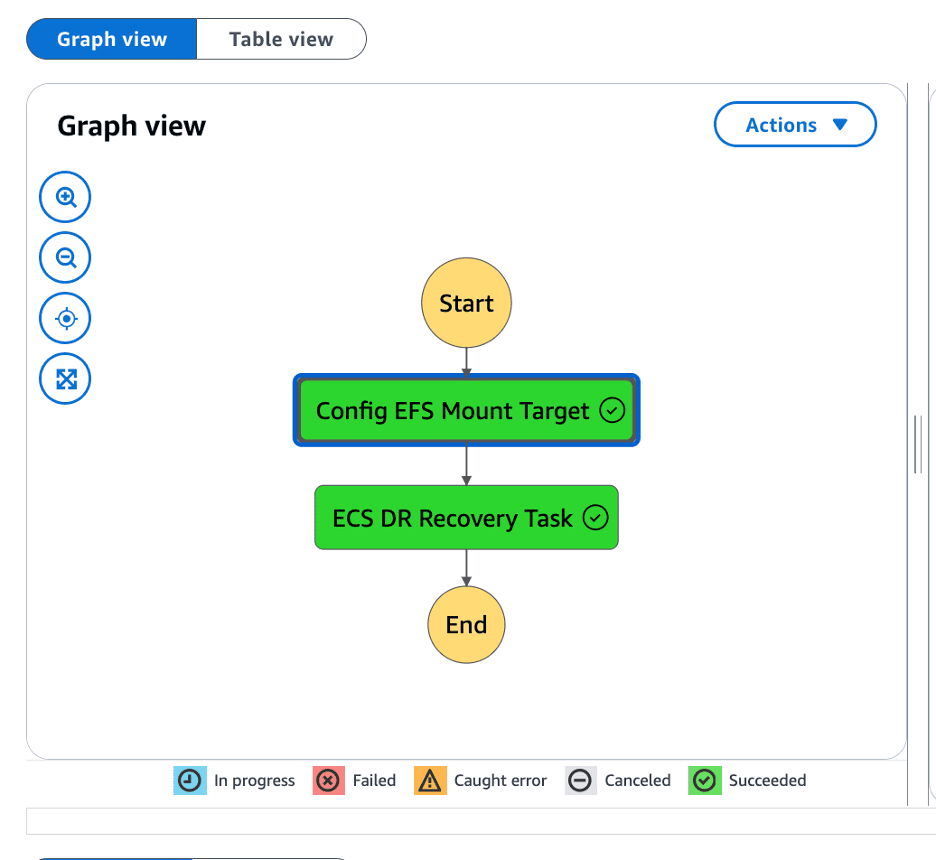

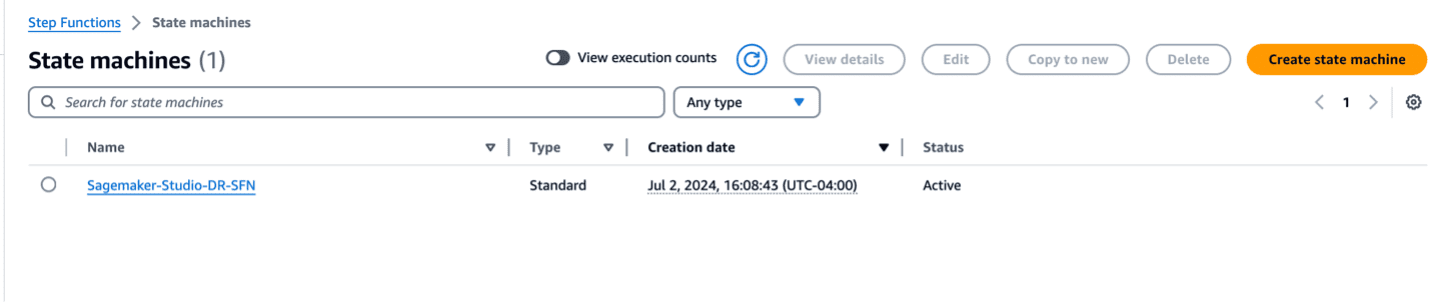

- On the Step Functions console in the secondary Region, run the disaster recovery Step Functions workflow.

The following figure illustrates the workflow steps.

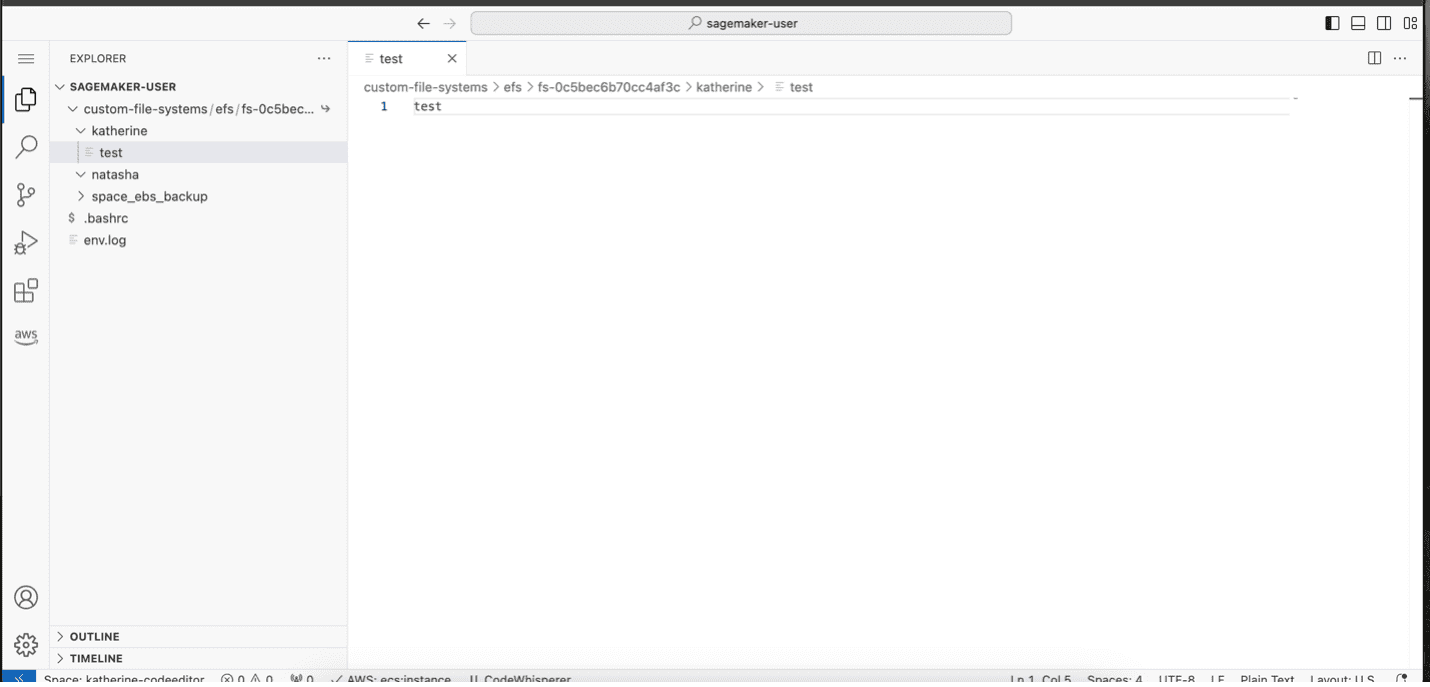

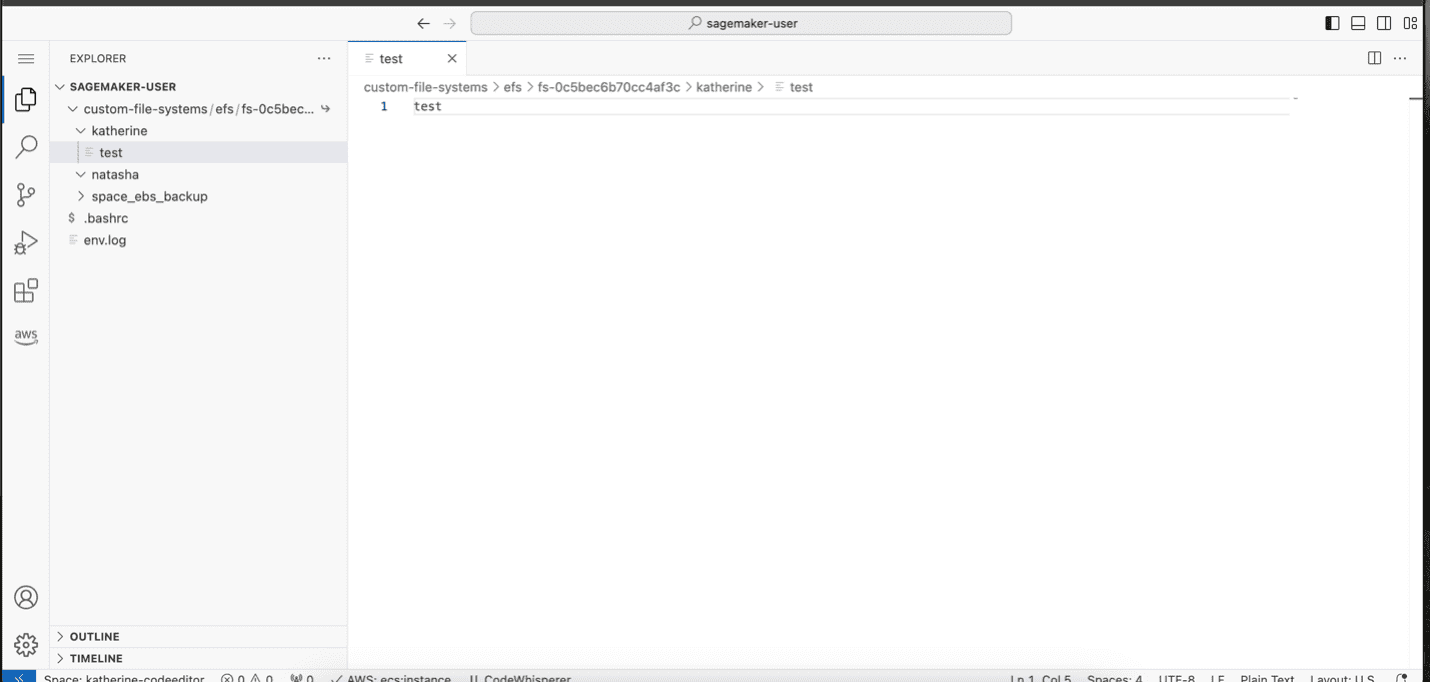

- On the SageMaker console in the secondary Region, launch the same user’s SageMaker Studio.

You will find your files backed up in either Code Editor or JupyterLab.
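The workflow run in the preceding steps can also be started programmatically instead of from the console, which is useful for scheduled or event-driven recovery. The following is a minimal sketch using the Step Functions `StartExecution` API through boto3. The function names, the execution name prefix, and the input payload are assumptions; the state machine ARN and the input schema expected by the workflow come from your own deployment.

```python
import json
from datetime import datetime, timezone
from typing import Any, Optional


def build_execution_request(
    state_machine_arn: str,
    payload: dict[str, Any],
    now: Optional[datetime] = None,
) -> dict[str, str]:
    """Build StartExecution arguments with a timestamped execution name."""
    now = now or datetime.now(timezone.utc)
    return {
        "stateMachineArn": state_machine_arn,
        "name": f"dr-restore-{now:%Y%m%dT%H%M%S}",  # hypothetical naming scheme
        "input": json.dumps(payload),
    }


def start_recovery(state_machine_arn: str, payload: dict[str, Any], secondary_region: str) -> str:
    """Start the workflow in the secondary Region (requires boto3 and AWS credentials)."""
    import boto3  # imported lazily so the request builder works without boto3

    client = boto3.client("stepfunctions", region_name=secondary_region)
    response = client.start_execution(**build_execution_request(state_machine_arn, payload))
    return response["executionArn"]
```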

Clean up

To avoid incurring ongoing charges, clean up the resources you created as part of this post:

- Stop all Code Editor and JupyterLab apps.

- Delete all AWS CDK stacks.
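If a domain has many spaces, the first cleanup step can be scripted. The sketch below lists the apps in a domain and deletes the running JupyterLab and Code Editor apps using the SageMaker `ListApps` and `DeleteApp` APIs. The function name is hypothetical, and the client is passed in so the logic can be exercised without AWS access; in practice you would pass `boto3.client("sagemaker", region_name=...)` for each Region.

```python
from typing import Any


def delete_running_apps(
    client: Any,
    domain_id: str,
    app_types: tuple[str, ...] = ("JupyterLab", "CodeEditor"),
) -> list[str]:
    """Delete in-service space apps of the given types; return their space names."""
    deleted: list[str] = []
    kwargs: dict[str, Any] = {"DomainIdEquals": domain_id}
    while True:
        page = client.list_apps(**kwargs)
        for app in page.get("Apps", []):
            # Skip other app types and apps that are not currently running.
            if app["AppType"] not in app_types or app["Status"] != "InService":
                continue
            # Only handle apps that belong to a space.
            if "SpaceName" not in app:
                continue
            client.delete_app(
                DomainId=domain_id,
                SpaceName=app["SpaceName"],
                AppType=app["AppType"],
                AppName=app["AppName"],
            )
            deleted.append(app["SpaceName"])
        token = page.get("NextToken")
        if not token:
            return deleted
        kwargs["NextToken"] = token
```

Deleting the app stops the running instance; the space and its storage remain until the AWS CDK stacks are deleted.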

Conclusion

SageMaker offers a robust and highly available ML platform, enabling data scientists and ML engineers to build, train, and deploy models efficiently. For critical use cases, implementing a comprehensive disaster recovery strategy enhances the resilience of your SageMaker domain, ensuring continuous operation in the unlikely event of regional impairment. This post presents a detailed solution for migrating and safeguarding your SageMaker domain, including user profiles and files, from one active AWS Region to another passive or active AWS Region. By using a strategic combination of AWS services, such as Amazon EFS, Step Functions, and the AWS CDK, this solution overcomes the current limitations within SageMaker Studio and provides continuous access to your valuable data and resources. Whether you choose an active-passive or active-active architecture, this solution provides a robust and resilient backup and recovery mechanism, fortifying your defenses against natural disasters, technical failures, and Regional outages. With this comprehensive guide, you can confidently safeguard your mission-critical and time-sensitive workloads, maintaining business continuity and uninterrupted access to your SageMaker domain, even in the case of unforeseen circumstances.

For more information on disaster recovery on AWS, refer to the following:

- What is disaster recovery?

- Disaster Recovery of Workloads on AWS: Recovery in the Cloud

- AWS Elastic Disaster Recovery

- Implement backup and recovery using an event-driven serverless architecture with Amazon SageMaker Studio

About the Authors

Jinzhao Feng is a Machine Learning Engineer at AWS Professional Services. He focuses on architecting and implementing large-scale generative AI and classic ML pipeline solutions. He is specialized in FMOps, LLMOps, and distributed training.

Nick Biso is a Machine Learning Engineer at AWS Professional Services. He solves complex organizational and technical challenges using data science and engineering. In addition, he builds and deploys AI/ML models on the AWS Cloud. His passion extends to his proclivity for travel and diverse cultural experiences.

Natasha Tchir is a Cloud Consultant at the Generative AI Innovation Center, specializing in machine learning. With a strong background in ML, she now focuses on the development of generative AI proof-of-concept solutions, driving innovation and applied research within the GenAIIC.

Katherine Feng is a Cloud Consultant at AWS Professional Services within the Data and ML team. She has extensive experience building full-stack applications for AI/ML use cases and LLM-driven solutions.
