Best practices and lessons for fine-tuning Anthropic’s Claude 3 Haiku on Amazon Bedrock

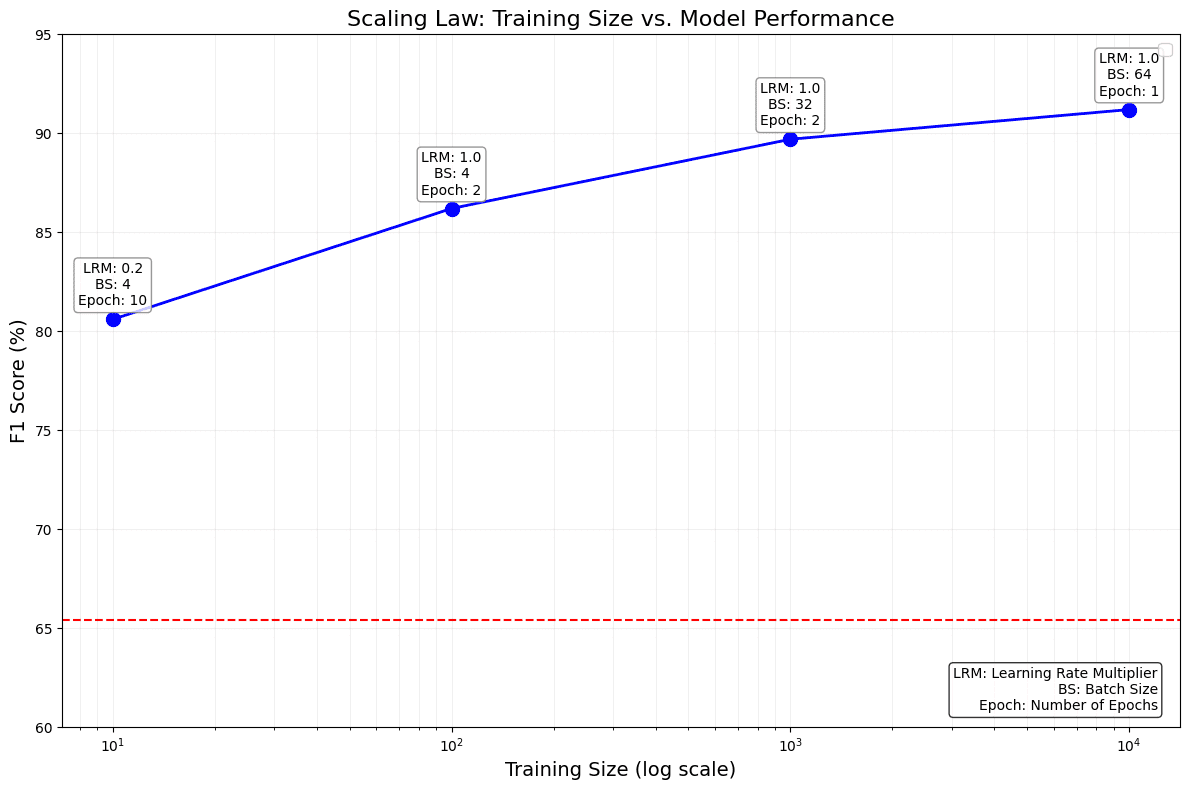

Fine-tuning is a powerful approach in natural language processing (NLP) and generative AI, allowing businesses to tailor pre-trained large language models (LLMs) for specific tasks. This process involves updating the model’s weights to improve its performance on targeted applications. By fine-tuning, the LLM can adapt its knowledge base to specific data and tasks, resulting in enhanced task-specific capabilities. To achieve optimal results, having a clean, high-quality dataset is of paramount importance. A well-curated dataset forms the foundation for successful fine-tuning. Additionally, careful adjustment of hyperparameters such as learning rate multiplier and batch size plays a crucial role in optimizing the model’s adaptation to the target task.

The fine-tuning capabilities in Amazon Bedrock offer substantial benefits for enterprises. They enable companies to optimize models like Anthropic’s Claude 3 Haiku for custom use cases, potentially achieving performance comparable to or even surpassing more advanced models such as Anthropic’s Claude 3 Opus or Anthropic’s Claude 3.5 Sonnet. The result is a significant improvement in task-specific performance, with potentially lower cost and latency. This approach lets businesses balance capability, domain knowledge, and efficiency in their AI-powered applications while meeting their goals for performance and response time.

In this post, we explore the best practices and lessons learned for fine-tuning Anthropic’s Claude 3 Haiku on Amazon Bedrock. We discuss the important components of fine-tuning, including use case definition, data preparation, model customization, and performance evaluation. This post dives deep into key aspects such as hyperparameter optimization, data cleaning techniques, and the effectiveness of fine-tuning compared to base models. We also provide insights on how to achieve optimal results for different dataset sizes and use cases, backed by experimental data and performance metrics.

As part of this post, we first introduce general best practices for fine-tuning Anthropic’s Claude 3 Haiku on Amazon Bedrock, and then present specific examples with the TAT-QA dataset (Tabular And Textual dataset for Question Answering).

Recommended use cases for fine-tuning

The use cases that are the most well-suited for fine-tuning Anthropic’s Claude 3 Haiku include the following:

- Classification – For example, when you have 10,000 labeled examples and want Anthropic’s Claude 3 Haiku to do well at this task.

- Structured outputs – For example, when you have 10,000 labeled examples specific to your use case and need Anthropic’s Claude 3 Haiku to accurately identify them.

- Tools and APIs – For example, when you need to teach Anthropic’s Claude 3 Haiku how to use your APIs well.

- Particular tone or language – For example, when you need Anthropic’s Claude 3 Haiku to respond with a particular tone or language specific to your brand.

Fine-tuning Anthropic’s Claude 3 Haiku has demonstrated superior performance compared to few-shot prompt engineering on base Anthropic’s Claude 3 Haiku, Anthropic’s Claude 3 Sonnet, and Anthropic’s Claude 3.5 Sonnet across various tasks. These tasks include summarization, classification, information retrieval, open-book Q&A, and custom language generation such as SQL. However, achieving optimal performance with fine-tuning requires effort and adherence to best practices.

To better illustrate the effectiveness of fine-tuning compared to other approaches, the following table provides a comprehensive overview of various problem types, examples, and their likelihood of success when using fine-tuning versus prompting with Retrieval Augmented Generation (RAG). This comparison can help you understand when and how to apply these different techniques effectively.

| Problem | Examples | Likelihood of Success with Fine-tuning | Likelihood of Success with Prompting + RAG |
| --- | --- | --- | --- |
| Make the model follow a specific format or tone | Instruct the model to use a specific JSON schema or talk like the organization’s customer service reps | Very High | High |
| Teach the model a new skill | Teach the model how to call APIs, fill out proprietary documents, or classify customer support tickets | High | Medium |
| Teach the model a new skill, and hope it learns similar skills | Teach the model to summarize contract documents, in order to learn how to write better contract documents | Low | Medium |
| Teach the model new knowledge, and expect it to use that knowledge for general tasks | Teach the model the organizations’ acronyms or more music facts | Low | Medium |

Prerequisites

Before diving into best practices for optimizing LLM fine-tuning on Amazon Bedrock, familiarize yourself with the general process and how-to outlined in the post Fine-tune Anthropic’s Claude 3 Haiku in Amazon Bedrock to boost model accuracy and quality. That post provides essential background and context for the fine-tuning process, including step-by-step guidance on fine-tuning Anthropic’s Claude 3 Haiku on Amazon Bedrock through both the Amazon Bedrock console and the Amazon Bedrock API.

LLM fine-tuning lifecycle

The process of fine-tuning an LLM like Anthropic’s Claude 3 Haiku on Amazon Bedrock typically follows these key stages:

- Use case definition – Clearly define the specific task or knowledge domain for fine-tuning

- Data preparation – Gather and clean high-quality datasets relevant to the use case

- Data formatting – Structure the data following best practices, including semantic blocks and system prompts where appropriate

- Model customization – Configure the fine-tuning job on Amazon Bedrock, setting parameters like learning rate and batch size, and enabling features like early stopping to prevent overfitting

- Training and monitoring – Run the training job and monitor its status

- Performance evaluation – Assess the fine-tuned model’s performance against relevant metrics, comparing it to base models

- Iteration and deployment – Based on the result, refine the process if needed, then deploy the model for production

Throughout this journey, depending on the business case, you may choose to combine fine-tuning with techniques like prompt engineering for optimal results. The process is inherently iterative, allowing for continuous improvement as new data or requirements emerge.
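As a rough sketch of the model customization stage, the following Python builds the request for a fine-tuning job. The helper name, job names, ARN, S3 URIs, and hyperparameter values are hypothetical placeholders. The hyperparameter keys and the base model identifier reflect our understanding of the Amazon Bedrock documentation for Anthropic’s Claude 3 Haiku and should be verified before use. The resulting dictionary is meant to be passed to the boto3 Bedrock client’s `create_model_customization_job` call, which is left commented out so the snippet runs without AWS credentials.

```python
def build_fine_tuning_request(job_name, model_name, role_arn,
                              train_s3_uri, validation_s3_uri, output_s3_uri,
                              epochs=2, batch_size=32,
                              learning_rate_multiplier=1.0,
                              early_stopping_patience=2,
                              early_stopping_threshold=0.001):
    """Assemble keyword arguments for a Bedrock fine-tuning job (illustrative values)."""
    return {
        "jobName": job_name,
        "customModelName": model_name,
        "roleArn": role_arn,
        # Assumed identifier of the fine-tunable Claude 3 Haiku base model.
        "baseModelIdentifier": "anthropic.claude-3-haiku-20240307-v1:0:200k",
        "customizationType": "FINE_TUNING",
        "trainingDataConfig": {"s3Uri": train_s3_uri},
        "validationDataConfig": {"validators": [{"s3Uri": validation_s3_uri}]},
        "outputDataConfig": {"s3Uri": output_s3_uri},
        # Hyperparameter values are passed as strings; key names are assumptions.
        "hyperParameters": {
            "epochCount": str(epochs),
            "batchSize": str(batch_size),
            "learningRateMultiplier": str(learning_rate_multiplier),
            "earlyStoppingPatience": str(early_stopping_patience),
            "earlyStoppingThreshold": str(early_stopping_threshold),
        },
    }


request = build_fine_tuning_request(
    job_name="haiku-tatqa-job",                                  # hypothetical
    model_name="haiku-tatqa-model",                              # hypothetical
    role_arn="arn:aws:iam::123456789012:role/ExampleRole",       # hypothetical
    train_s3_uri="s3://example-bucket/train.jsonl",              # hypothetical
    validation_s3_uri="s3://example-bucket/validation.jsonl",    # hypothetical
    output_s3_uri="s3://example-bucket/output/",                 # hypothetical
)

# With credentials configured, the job could then be submitted along these lines:
# import boto3
# boto3.client("bedrock").create_model_customization_job(**request)
```

Keeping the request construction separate from the API call makes it easy to review or log the exact configuration of each experiment before launching it.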

Use case and dataset

The TAT-QA dataset is related to a use case for question answering on a hybrid of tabular and textual content in finance where tabular data is organized in table formats such as HTML, JSON, Markdown, and LaTeX. We focus on the task of answering questions about the table. The evaluation metric is the F1 score that measures the word-to-word matching of the extracted content between the generated output and the ground truth answer. The TAT-QA dataset has been divided into train (28,832 rows), dev (3,632 rows), and test (3,572 rows).

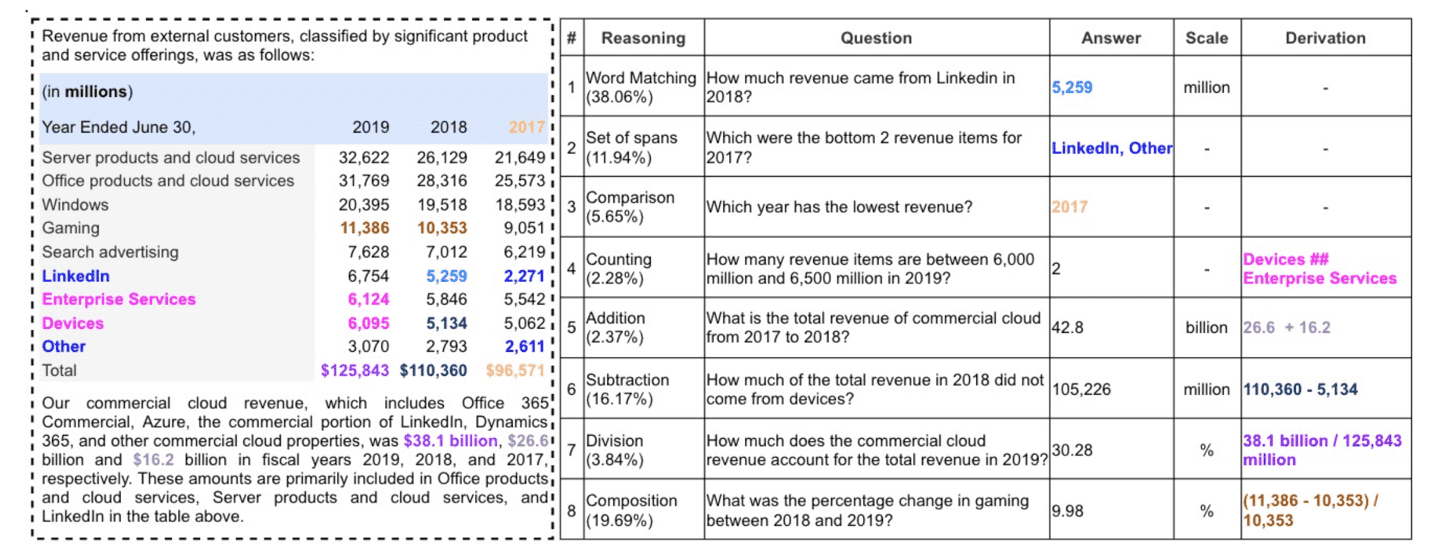

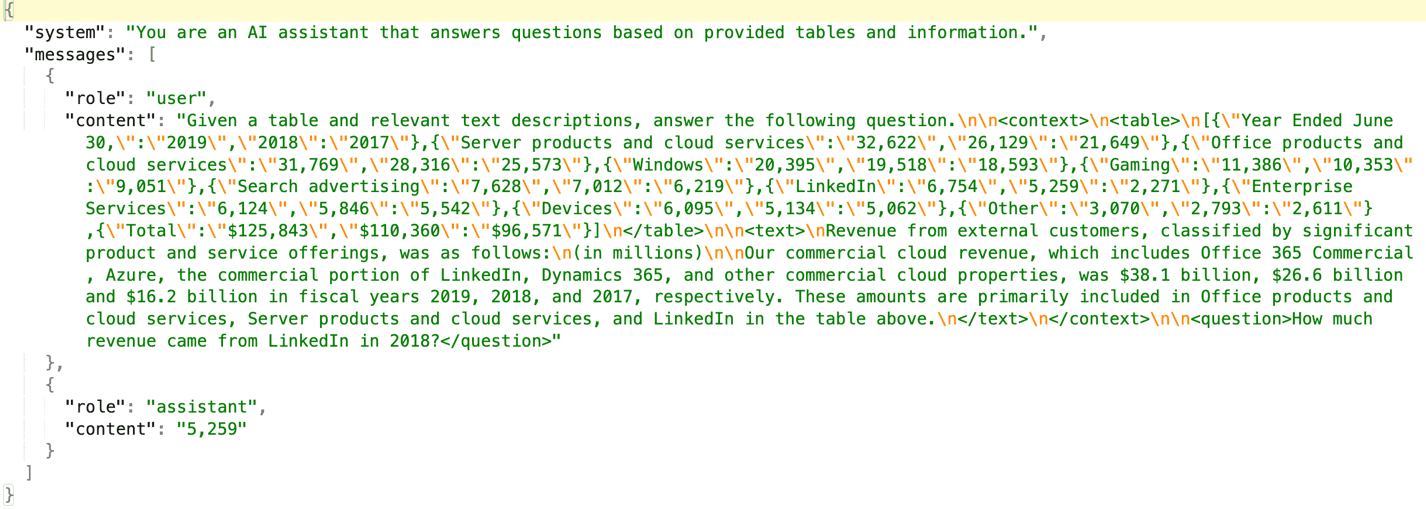

The following screenshot provides a snapshot of the TAT-QA data, which comprises a table with tabular and textual financial data. Following this financial data table, a detailed question-answer set is presented to demonstrate the complexity and depth of analysis possible with the TAT-QA dataset. This comprehensive table is from the paper TAT-QA: A Question Answering Benchmark on a Hybrid of Tabular and Textual Content in Finance, and it includes several key components:

- Reasoning types – Each question is categorized by the type of reasoning required

- Questions – A variety of questions that test different aspects of understanding and interpreting the financial data

- Answers – The correct responses to each question, showcasing the precision required in financial analysis

- Scale – Where applicable, the unit of measurement for the answer

- Derivation – For some questions, the calculation or logic used to arrive at the answer is provided

The following screenshot shows the same data formatted as JSONL, which is passed to Anthropic’s Claude 3 Haiku as fine-tuning training data. Each record contains a system prompt, a user turn (which contains the data and the question), and an assistant turn (which contains the answer). The table is enclosed within an XML tag.
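To make the record layout concrete, here is a minimal sketch of how one training example could be serialized as a JSONL line with a system prompt, a user turn, and an assistant turn. The helper name, the system prompt, the toy table, and the question are made up for illustration and are not taken from TAT-QA. The tag name used to wrap the table is also a hypothetical placeholder, because the actual tag name is not preserved in this text.

```python
import json


def to_training_record(system_prompt, table_text, question, answer, table_tag):
    """Build one fine-tuning record with system, user, and assistant parts."""
    # The user turn carries the table (wrapped in an XML-style tag) and the question.
    user_content = f"<{table_tag}>\n{table_text}\n</{table_tag}>\n\n{question}"
    return {
        "system": system_prompt,
        "messages": [
            {"role": "user", "content": user_content},
            {"role": "assistant", "content": answer},
        ],
    }


record = to_training_record(
    system_prompt="Answer the question using only the table.",  # hypothetical prompt
    table_text="| Year | Revenue |\n| 2019 | 100 |",             # toy data, not TAT-QA
    question="What was the revenue in 2019?",
    answer="100",
    table_tag="table",                                          # hypothetical tag name
)

# JSONL means one JSON object per line; json.dumps escapes embedded newlines.
jsonl_line = json.dumps(record)
```

Writing one such line per example produces the training file. Using the same system prompt and tag structure at inference time keeps the prompts consistent with what the model saw during fine-tuning.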

| Target Use Case | Task Type | Fine-Tuning Data Size | Test Data Size | Eval Metric | Fine-Tuned Anthropic’s Claude 3 Haiku | Base Anthropic’s Claude 3 Haiku | Base Anthropic’s Claude 3 Sonnet | Base Anthropic’s Claude 3.5 Sonnet | Improvement vs. Base Anthropic’s Claude 3 Haiku | Improvement vs. Base Anthropic’s Claude 3 Sonnet | Improvement vs. Base Anthropic’s Claude 3.5 Sonnet |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| TAT-QA | Q&A on financial text and tabular content | 10,000 | 3,572 | F1 score | 91.2% | 73.2% | 76.3% | 83.0% | 24.6% | 19.6% | 9.9% |
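The improvement figures in the table appear to be relative gains over each base model's F1 score rather than differences in percentage points. The following sketch recomputes them from the reported scores under that assumption. The function name is ours.

```python
def relative_improvement(fine_tuned, base):
    """Relative gain of the fine-tuned score over a base score, in percent."""
    return (fine_tuned - base) / base * 100


fine_tuned_f1 = 91.2
base_f1 = {
    "Claude 3 Haiku": 73.2,
    "Claude 3 Sonnet": 76.3,
    "Claude 3.5 Sonnet": 83.0,
}

# Round to one decimal place, matching the precision used in the table.
improvements = {
    name: round(relative_improvement(fine_tuned_f1, score), 1)
    for name, score in base_f1.items()
}
```

Under this assumption, the recomputed gains are about 24.6%, 19.5%, and 9.9%. The small difference from the reported 19.6% may come from rounding of the published scores.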

Few-shot examples improve performance not only on the base model, but also on fine-tuned models, especially when the fine-tuning data is small.

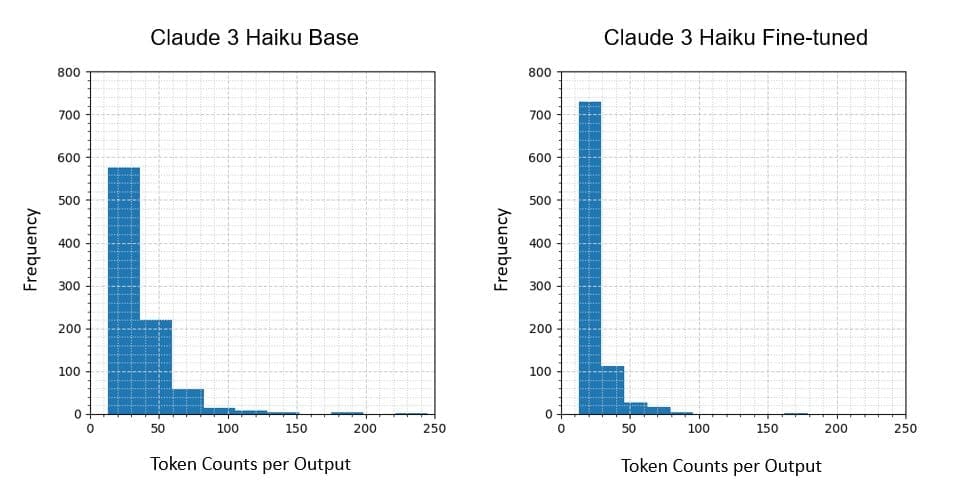

Fine-tuning also demonstrated significant benefits in reducing token usage. On the TAT-QA HTML test set (893 examples), the fine-tuned Anthropic’s Claude 3 Haiku model reduced the average output token count by 35% compared to the base model, as shown in the following table.

| Model | Average Output Tokens | Average % Reduced | Median Output Tokens | Median % Reduced | Standard Deviation | Minimum Tokens | Maximum Tokens |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Anthropic’s Claude 3 Haiku Base | 34 | – | 28 | – | 27 | 13 | 245 |
| Anthropic’s Claude 3 Haiku Fine-Tuned | 22 | 35% | 17 | 39% | 14 | 13 | 179 |

We use the following figures to illustrate the token count distribution for both the base Anthropic’s Claude 3 Haiku and fine-tuned Anthropic’s Claude 3 Haiku models. The left graph shows the distribution for the base model, and the right graph displays the distribution for the fine-tuned model. These histograms demonstrate a shift towards more concise output in the fine-tuned model, with a notable reduction in the frequency of longer token sequences.

To further illustrate this improvement, consider the following example from the test set:

- Question: "How did the company adopt Topic 606?"

- Ground truth answer: "the modified retrospective method"

- Base Anthropic’s Claude 3 Haiku response: "The company adopted the provisions of Topic 606 in fiscal 2019 utilizing the modified retrospective method"

- Fine-tuned Anthropic’s Claude 3 Haiku response: "the modified retrospective method"

As evident from this example, the fine-tuned model produces a more concise and precise answer, matching the ground truth exactly, whereas the base model includes additional, unnecessary information. This reduction in token usage, combined with improved accuracy, can lead to enhanced efficiency and reduced costs in production deployments.
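To show how extra words affect a word-matching F1 score, the following sketch scores both responses from this example against the ground truth. It assumes lowercase word tokens and multiset overlap, a common convention for extractive question answering. The exact tokenization used in our evaluation is not specified here, so treat the numbers as illustrative rather than as reported results.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into word tokens (assumed tokenization)."""
    return re.findall(r"\w+", text.lower())


def token_f1(generated, reference):
    """Token-level F1 on a 0-100 scale using multiset overlap."""
    gen_tokens, ref_tokens = tokenize(generated), tokenize(reference)
    # Counter intersection keeps the minimum count of each shared token.
    overlap = sum((Counter(gen_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(gen_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall) * 100


ground_truth = "the modified retrospective method"
base_response = ("The company adopted the provisions of Topic 606 in fiscal 2019 "
                 "utilizing the modified retrospective method")
fine_tuned_response = "the modified retrospective method"

base_score = token_f1(base_response, ground_truth)
fine_tuned_score = token_f1(fine_tuned_response, ground_truth)
```

Under these assumptions, the base response scores roughly 40 because only 4 of its 16 tokens match the ground truth, while the fine-tuned response scores 100. Both answers are correct, so the gap comes entirely from verbosity lowering precision.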

Conclusion

Fine-tuning Anthropic’s Claude 3 Haiku on Amazon Bedrock offers significant performance improvements for specialized tasks. Our experiments demonstrate that careful attention to data quality, hyperparameter optimization, and best practices in the fine-tuning process can yield substantial gains over base models. Key takeaways include the following:

- The importance of high-quality, task-specific datasets, even if smaller in size

- Optimal hyperparameter settings vary based on dataset size and task complexity

- Fine-tuned models consistently outperform base models across various metrics

- The process is iterative, allowing for continuous improvement as new data or requirements emerge

Although fine-tuning provides impressive results, combining it with other techniques like prompt engineering may lead to even better outcomes. As LLM technology continues to evolve, mastering fine-tuning techniques will be crucial for organizations looking to use these powerful models for specific use cases and tasks.

Now you’re ready to fine-tune Anthropic’s Claude 3 Haiku on Amazon Bedrock for your use case. We look forward to seeing what you build when you put this new technology to work for your business.

Appendix

We used the following hyperparameters as part of our fine-tuning:

- Learning rate multiplier – Learning rate multiplier is one of the most critical hyperparameters in LLM fine-tuning. It influences the learning rate at which model parameters are updated after each batch.

- Batch size – Batch size is the number of training examples processed in one iteration. It directly impacts GPU memory consumption and training dynamics.

- Epoch – One epoch means the model has seen every example in the dataset one time. The number of epochs is a crucial hyperparameter that affects model performance and training efficiency.
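Batch size and epoch count together determine how many weight updates a training run performs. The sketch below works through that arithmetic for the 10,000-example training set used in this post. The batch size and epoch count are illustrative values rather than the settings from our experiments, and the calculation assumes that a final partial batch still counts as one step.

```python
import math


def training_steps(num_examples, batch_size, epochs):
    """Return (steps per epoch, total steps) for a training run."""
    # One step processes one batch; a partial last batch still counts as a step.
    steps_per_epoch = math.ceil(num_examples / batch_size)
    return steps_per_epoch, steps_per_epoch * epochs


steps_per_epoch, total_steps = training_steps(
    num_examples=10_000,  # fine-tuning data size used in this post
    batch_size=32,        # illustrative value
    epochs=2,             # illustrative value
)
```

With these illustrative values, each epoch takes 313 steps and the run takes 626 steps in total. Halving the batch size would roughly double the number of updates for the same data, which is one reason batch size and learning rate multiplier are usually tuned together.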

For our evaluation, we used the F1 score, a metric commonly used to assess the performance of both LLMs and traditional ML models.

To compute the F1 score for LLM evaluation, we define precision and recall at the token level. Precision measures the proportion of generated tokens that match the reference tokens, and recall measures the proportion of reference tokens that are captured by the generated tokens. The F1 score ranges from 0 to 100, with 100 being the best possible score and 0 the lowest. However, interpretation can vary depending on the specific task and requirements.

We calculate these metrics as follows:

- Precision = (Number of matching tokens in generated text) / (Total number of tokens in generated text)

- Recall = (Number of matching tokens in generated text) / (Total number of tokens in reference text)

- F1 = (2 * (Precision * Recall) / (Precision + Recall)) * 100

For example, let’s say the LLM generates the sentence “The cat sits on the mat in the sun” and the reference sentence is “The cat sits on the soft mat under the warm sun.” Counting each distinct shared word once (the, cat, sits, on, mat, sun) gives 6 matching tokens, so the precision is 6/9 (6 matching tokens out of 9 generated tokens) and the recall is 6/11 (6 matching tokens out of 11 reference tokens).

- Precision = 6/9 ≈ 0.667

- Recall = 6/11 ≈ 0.545

- F1 score = (2 * (0.667 * 0.545) / (0.667 + 0.545)) * 100 ≈ 60.0
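The arithmetic in this example can be checked with a few lines of Python. This sketch takes the token counts from the example as given (6 matching, 9 generated, 11 reference) and applies the three formulas directly. The function name is ours.

```python
def f1_from_counts(matching, generated_total, reference_total):
    """Return (precision, recall, F1 on a 0-100 scale) from token counts."""
    if matching == 0:
        return 0.0, 0.0, 0.0
    precision = matching / generated_total
    recall = matching / reference_total
    f1 = 2 * precision * recall / (precision + recall) * 100
    return precision, recall, f1


# Counts from the cat-and-mat example above.
precision, recall, f1 = f1_from_counts(matching=6, generated_total=9, reference_total=11)
```

Using exact fractions rather than rounded intermediate values, the F1 score for this example works out to 60.0, because the formula simplifies to 2 × 6 / (9 + 11).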

About the Authors

Yanyan Zhang is a Senior Generative AI Data Scientist at Amazon Web Services, where she has been working on cutting-edge AI/ML technologies as a Generative AI Specialist, helping customers use generative AI to achieve their desired outcomes. Yanyan graduated from Texas A&M University with a PhD in Electrical Engineering. Outside of work, she loves traveling, working out, and exploring new things.

Sovik Kumar Nath is an AI/ML and Generative AI Senior Solutions Architect with AWS. He has extensive experience designing end-to-end machine learning and business analytics solutions in finance, operations, marketing, healthcare, supply chain management, and IoT. He has double master’s degrees from the University of South Florida and University of Fribourg, Switzerland, and a bachelor’s degree from the Indian Institute of Technology, Kharagpur. Outside of work, Sovik enjoys traveling and adventures.

Jennifer Zhu is a Senior Applied Scientist at AWS Bedrock, where she helps build and scale generative AI applications with foundation models. Jennifer holds a PhD from Cornell University and a master’s degree from the University of San Francisco. Outside of work, she enjoys reading books and watching tennis games.

Fang Liu is a principal machine learning engineer at Amazon Web Services, where he has extensive experience in building AI/ML products using cutting-edge technologies. He has worked on notable projects such as Amazon Transcribe and Amazon Bedrock. Fang Liu holds a master’s degree in computer science from Tsinghua University.

Yanjun Qi is a Senior Applied Science Manager at Amazon Bedrock Science. She innovates and applies machine learning to help AWS customers speed up their AI and cloud adoption.

View Original Source (aws.amazon.com) Here.
