The future of productivity agents with NinjaTech AI and AWS Trainium

This is a guest post by Arash Sadrieh, Tahir Azim, and Tengfei Xue from NinjaTech AI.

NinjaTech AI’s mission is to make everyone more productive by taking care of time-consuming complex tasks with fast and affordable artificial intelligence (AI) agents. We recently launched MyNinja.ai, one of the world’s first multi-agent personal AI assistants, to drive towards our mission. MyNinja.ai is built from the ground up using specialized agents that are capable of completing tasks on your behalf, including scheduling meetings, conducting deep research from the web, generating code, and helping with writing. These agents can break down complicated, multi-step tasks into branched solutions, and are capable of evaluating the generated solutions dynamically while continually learning from past experiences. All of these tasks are accomplished in a fully autonomous and asynchronous manner, freeing you up to continue your day while Ninja works on these tasks in the background, and engaging when your input is required.

Because no single large language model (LLM) is perfect for every task, we knew that building a personal AI assistant would require multiple LLMs optimized specifically for a variety of tasks. In order to deliver the accuracy and capabilities to delight our users, we also knew that we would require these multiple models to work together in tandem. Finally, we needed scalable and cost-effective methods for training these various models—an undertaking that has historically been costly to pursue for most startups. In this post, we describe how we built our cutting-edge productivity agent NinjaLLM, the backbone of MyNinja.ai, using AWS Trainium chips.

Building a dataset

We recognized early that to deliver on the mission of tackling tasks on a user’s behalf, we needed multiple models that were optimized for specific tasks. Examples include our Deep Researcher, Deep Coder, and Advisor models. After testing available open source models, we found that prompt engineering alone could not bring their out-of-the-box capabilities and responses up to what we needed. Specifically, we wanted to make sure each model was optimized for a ReAct/chain-of-thought style of prompting. Additionally, we wanted to make sure that each model, when deployed as part of a Retrieval Augmented Generation (RAG) system, would accurately cite each source and would be biased towards saying “I don’t know” rather than generating false answers. For that purpose, we chose to fine-tune the models for the various downstream tasks.
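To make the RAG requirement above concrete, here is a minimal sketch of a prompt builder that asks for cited answers and an explicit “I don’t know” fallback. The `Passage` type, the `build_rag_prompt` function, and the prompt wording are all hypothetical and chosen for illustration; they are not NinjaTech AI’s actual prompts.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Passage:
    """A retrieved source passage (hypothetical structure)."""
    source_id: int
    title: str
    text: str


def build_rag_prompt(question: str, passages: List[Passage]) -> str:
    """Assemble a ReAct-style prompt that requests cited, grounded answers."""
    # Number each source so the model can cite it as [n].
    sources = "\n".join(
        f"[{p.source_id}] {p.title}: {p.text}" for p in passages
    )
    return (
        "Answer the question using only the numbered sources below.\n"
        "Cite every claim with its source number, for example [1].\n"
        'If the sources do not contain the answer, reply "I don\'t know".\n\n'
        f"Sources:\n{sources}\n\n"
        f"Question: {question}\n"
        # Ending on "Thought:" invites the model to reason before answering.
        "Thought:"
    )
```

A fine-tuned model would then be expected to continue from `Thought:` with its reasoning and a final answer that carries citations such as `[1]`.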

In constructing our training dataset, our goal was twofold: adapt each model for its intended downstream task and persona (Researcher, Advisor, Coder, and so on), and adapt the models to follow a specific output structure. To that end, we followed the LIMA approach for fine-tuning: we used a diverse but relatively small training sample of roughly 20 million tokens and focused on the format and tone of the output. To construct our supervised fine-tuning dataset, we began by creating seed tasks for each model. With these seed tasks, we generated an initial synthetic dataset using Meta’s Llama 2 model, and used it to perform a first round of fine-tuning. To evaluate the performance of this fine-tuned model at first, we crowd-sourced user feedback and used it to iteratively create more samples. We also used a series of internal and public benchmarks to assess model performance and continued to iterate.

Fine-tuning on Trainium

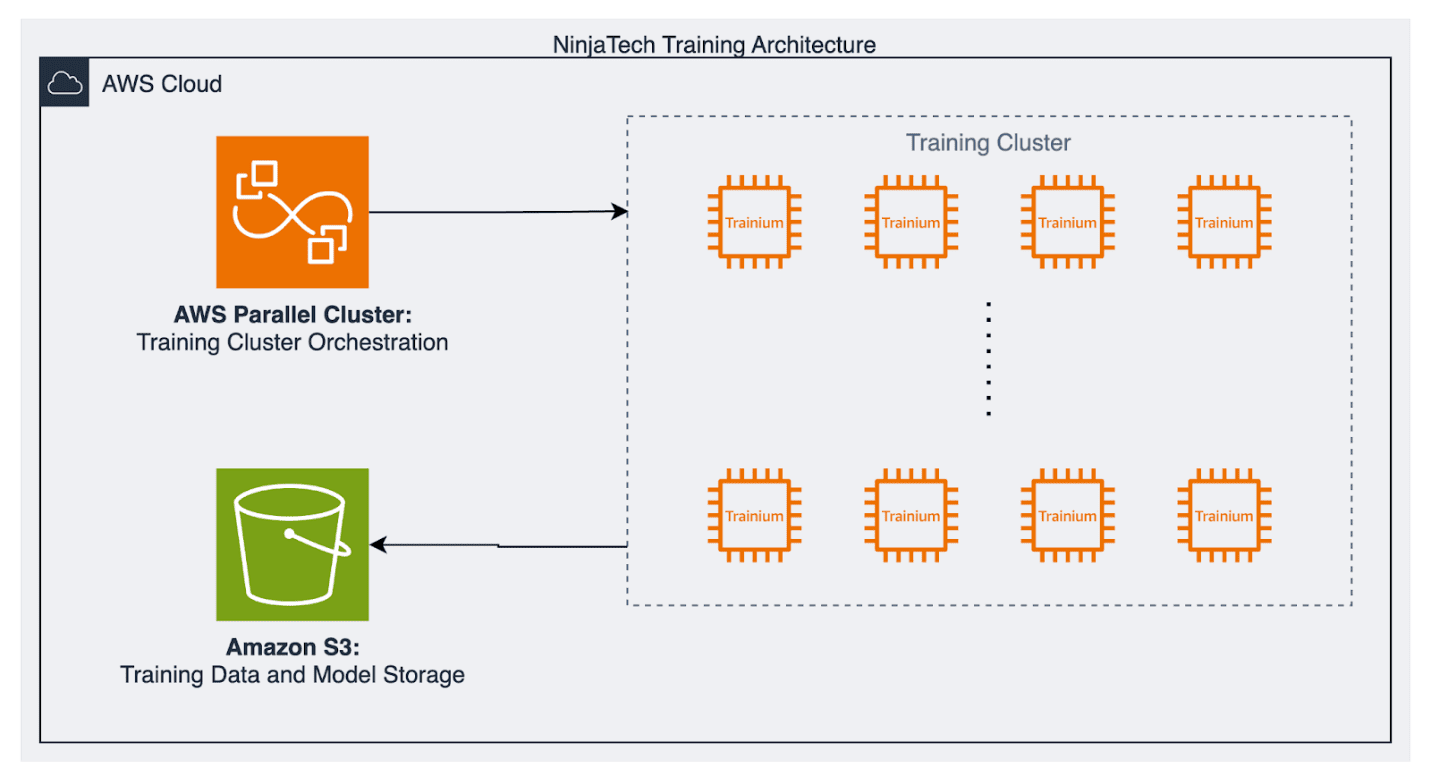

We elected to start with the Llama models for a pre-trained base model for several reasons: most notably the great out-of-the-box performance, strong ecosystem support from various libraries, and the truly open source and permissive license. At the time, we began with Llama 2, testing across the various sizes (7B, 13B, and 70B). For training, we chose to use a cluster of trn1.32xlarge instances to take advantage of Trainium chips. We used a cluster of 32 instances in order to efficiently parallelize the training. We also used AWS ParallelCluster to manage cluster orchestration. By using a cluster of Trainium instances, each fine-tuning iteration took less than 3 hours, at a cost of less than $1,000. This quick iteration time and low cost, allowed us to quickly tune and test our models and improve our model accuracy. To achieve the accuracies discussed in the following sections, we only had to spend around $30k, savings hundreds of thousands, if not millions of dollars if we had to train on traditional training accelerators.

The following diagram illustrates our training architecture.

After we had established our fine-tuning pipelines built on top of Trainium, we were able to fine-tune and refine our models thanks to the Neuron Distributed training libraries. This was exceptionally useful and timely, because leading up to the launch of MyNinja.ai, Meta’s Llama 3 models were released. Llama 3 and Llama 2 share similar architecture, so we were able to rapidly upgrade to the newer model. This velocity in switching allowed us to take advantage of the inherent gains in model accuracy, and very quickly run through another round of fine-tuning with the Llama 3 weights and prepare for launch.
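The cost figures quoted earlier in this section (32 instances, under 3 hours and under $1,000 per iteration, around $30k in total) can be turned into a rough back-of-the-envelope check. The sketch below treats the quoted upper bounds as if they were exact values, so the results are ballpark numbers only. The count of 16 Trainium chips per trn1.32xlarge instance is an assumption taken from the published instance specifications, not from this post.

```python
# Figures quoted in the post, taken at face value (they are upper bounds).
INSTANCES = 32
HOURS_PER_ITERATION = 3.0
COST_PER_ITERATION_USD = 1_000.0
TOTAL_SPEND_USD = 30_000.0

# Assumption (not stated in the post): chips per trn1.32xlarge instance.
CHIPS_PER_INSTANCE = 16

# Instance-hours consumed by one fine-tuning iteration.
instance_hours = INSTANCES * HOURS_PER_ITERATION

# Ballpark cost per instance-hour implied by the two quoted bounds.
implied_rate_usd = COST_PER_ITERATION_USD / instance_hours

# If every iteration cost at most $1,000, $30k covers at least this many.
min_iterations = TOTAL_SPEND_USD / COST_PER_ITERATION_USD

# Total Trainium chips in the cluster under the assumption above.
total_chips = INSTANCES * CHIPS_PER_INSTANCE

print(f"{instance_hours:.0f} instance-hours per iteration")
print(f"~${implied_rate_usd:.2f} per instance-hour (ballpark)")
print(f"at least {min_iterations:.0f} iterations within the total spend")
print(f"{total_chips} Trainium chips in the cluster")
```

Read this way, the quoted budget leaves room for at least 30 fine-tuning iterations, which is consistent with the iterative workflow described in this section.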

Model evaluation

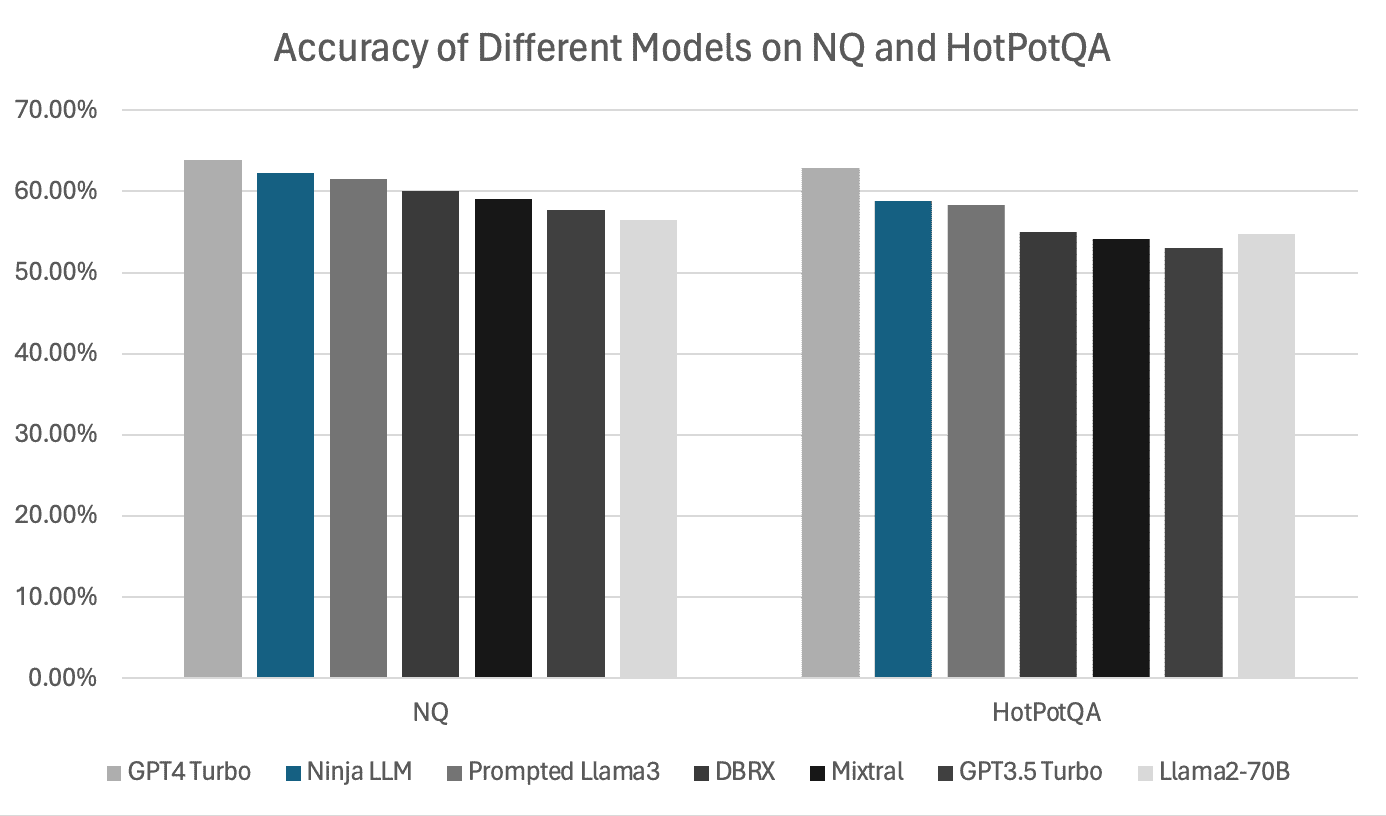

For evaluating the model, there were two objectives: evaluate the model’s ability to answer user questions, and evaluate the system’s ability to answer questions with provided sources, because this is our personal AI assistant’s primary interface. We selected the HotPotQA and Natural Questions (NQ) Open datasets, both of which are a good fit because of their open benchmarking datasets with public leaderboards.

We calculated accuracy by matching the model’s answer to the expected answer, using the top 10 passages retrieved from a Wikipedia corpus. We performed content filtering and ranking using ColBERTv2, a BERT-based retrieval model. We achieved accuracies of 62.22% on the NQ Open dataset and 58.84% on HotPotQA by using our enhanced Llama 3 RAG model, demonstrating notable improvements over other baseline models. The following figure summarizes our results.
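The post does not spell out the exact matching rule used to score answers. As one plausible reading, the sketch below computes accuracy with a normalized exact match, a convention commonly used for open-domain QA benchmarks: lowercase the text, strip punctuation and English articles, collapse whitespace, then compare against every accepted answer. The function names are hypothetical.

```python
import re
import string
from typing import List


def normalize(text: str) -> str:
    """Lowercase, drop punctuation and articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def is_match(prediction: str, gold_answers: List[str]) -> bool:
    """True if the prediction equals any accepted answer after normalizing."""
    pred = normalize(prediction)
    return any(pred == normalize(gold) for gold in gold_answers)


def accuracy(predictions: List[str], references: List[List[str]]) -> float:
    """Fraction of predictions that match one of their reference answers."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have equal length")
    if not predictions:
        return 0.0
    hits = sum(is_match(p, refs) for p, refs in zip(predictions, references))
    return hits / len(predictions)
```

A looser rule, such as checking whether an accepted answer appears anywhere inside a longer generated response, would yield higher scores, so the choice of rule matters when comparing numbers across systems.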

Future work

Looking ahead, we’re working on several developments to continue improving our model’s performance and user experience. First, we intend to use ORPO (Odds Ratio Preference Optimization) to fine-tune our models. ORPO combines traditional fine-tuning with preference alignment, while using a single preference alignment dataset for both. We believe this will allow us to better align models to achieve better results for users.
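To illustrate how a single objective can cover both fine-tuning and preference alignment, the sketch below computes an ORPO-style loss for one preference pair, following our understanding of the published method: a supervised term on the preferred response plus a weighted penalty based on the log odds ratio between the preferred and rejected responses. The inputs are length-averaged log-probabilities that a model would assign to each response. The function names and the weight `lam=0.1` are illustrative assumptions, not settings from this post.

```python
import math


def log_odds(avg_logprob: float) -> float:
    """log(p / (1 - p)) for p = exp(avg_logprob); requires avg_logprob < 0."""
    p = math.exp(avg_logprob)
    return avg_logprob - math.log1p(-p)


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def orpo_loss(chosen_avg_logprob: float,
              rejected_avg_logprob: float,
              lam: float = 0.1) -> float:
    """Supervised loss on the chosen response plus an odds-ratio penalty."""
    # Supervised fine-tuning term: negative log-likelihood of the chosen text.
    sft_term = -chosen_avg_logprob
    # Preference term: -log(sigmoid(z)) equals softplus(-z), where z is the
    # log odds ratio of the chosen response over the rejected one.
    z = log_odds(chosen_avg_logprob) - log_odds(rejected_avg_logprob)
    odds_ratio_term = softplus(-z)
    return sft_term + lam * odds_ratio_term
```

The penalty shrinks as the model assigns higher odds to the preferred response than to the rejected one, so a single pass over a preference dataset trains both behaviors at once.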

Additionally, we intend to build a custom ensemble model from the various models we have fine-tuned thus far. Inspired by Mixture of Experts (MoE) model architectures, we intend to introduce a routing layer to our various models. We believe this will radically simplify our model serving and scaling architecture, while maintaining the quality in various tasks that our users have come to expect from our personal AI assistant.
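The routing idea can be sketched with a deliberately simple stand-in. The example below routes a query to one of several task-specific handlers by counting keyword overlaps, and falls back to a default handler when nothing matches. A production routing layer inspired by MoE would more likely use a learned gating model; the `KeywordRouter` class, the keyword sets, and the handler names here are all hypothetical.

```python
from typing import Callable, Dict, Set, Tuple

Handler = Callable[[str], str]


class KeywordRouter:
    """Pick a task-specific handler by keyword overlap (illustrative only)."""

    def __init__(self, default_name: str, default_handler: Handler) -> None:
        self._default_name = default_name
        # The default route has no keywords, so it never wins on score.
        self._routes: Dict[str, Tuple[Set[str], Handler]] = {
            default_name: (set(), default_handler)
        }

    def register(self, name: str, keywords: Set[str], handler: Handler) -> None:
        self._routes[name] = ({k.lower() for k in keywords}, handler)

    def pick(self, query: str) -> str:
        """Return the route name with the most keyword hits, else the default."""
        words = set(query.lower().split())
        best_name, best_score = self._default_name, 0
        for name, (keywords, _) in self._routes.items():
            score = len(words & keywords)
            if score > best_score:
                best_name, best_score = name, score
        return best_name

    def dispatch(self, query: str) -> str:
        _, handler = self._routes[self.pick(query)]
        return handler(query)


# Stand-in handlers; real ones would call the fine-tuned models.
router = KeywordRouter("advisor", lambda q: f"[advisor] {q}")
router.register("coder", {"code", "python", "bug"}, lambda q: f"[coder] {q}")
router.register("researcher", {"research", "sources", "compare"},
                lambda q: f"[researcher] {q}")
```

With this setup, `router.pick("fix this python bug")` selects the coder route, while a query with no matching keywords falls through to the advisor. Callers only ever talk to the router, which is the property that would simplify serving.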

Conclusion

Building next-gen AI agents to make everyone more productive is NinjaTech AI’s pathway to achieving its mission. To democratize access to this transformative technology, it is critical to have access to high-powered compute, open source models, and an ecosystem of tools that make training each new agent affordable and fast. AWS’s purpose-built AI chips, access to the top open source models, and its training architecture make this possible.

To learn more about how we built NinjaTech AI’s multi-agent personal AI, you can read our whitepaper. You can also try these AI agents for free at MyNinja.ai.

About the authors

Arash Sadrieh is the Co-Founder and Chief Science Officer at Ninjatech.ai. Arash co-founded Ninjatech.ai with a vision to make everyone more productive by taking care of time-consuming tasks with AI agents. This vision was shaped during his tenure as a Senior Applied Scientist at AWS, where he drove key research initiatives that significantly improved infrastructure efficiency over six years, earning him multiple patents for optimizing core infrastructure. His academic background includes a PhD in computer modeling and simulation, with collaborations with esteemed institutions such as Oxford University, Sydney University, and CSIRO. Prior to his industry tenure, Arash had a postdoctoral research tenure marked by publications in high-impact journals, including Nature Communications.

Tahir Azim is a Staff Software Engineer at NinjaTech. Tahir focuses on NinjaTech’s Inf2- and Trn1-based training and inference platforms, its unified gateway for accessing these platforms, and its RAG-based research skill. He previously worked at Amazon as a senior software engineer, building data-driven systems for optimal utilization of Amazon’s global Internet edge infrastructure, driving down cost, congestion, and latency. Before moving to industry, Tahir earned an M.S. and Ph.D. in Computer Science from Stanford University, taught for three years as an assistant professor at NUST (Pakistan), and did a post-doc in fast data analytics systems at EPFL. Tahir has authored several publications presented at top-tier conferences such as VLDB, USENIX ATC, MobiCom, and MobiHoc.

Tengfei Xue is an Applied Scientist at NinjaTech AI. His current research interests include natural language processing and multimodal learning, particularly using large language models and large multimodal models. Tengfei completed his PhD studies at the School of Computer Science, University of Sydney, where he focused on deep learning for healthcare using various modalities. He was also a visiting PhD candidate at the Laboratory of Mathematics in Imaging (LMI) at Harvard University, where he worked on 3D computer vision for complex geometric data.
