How Zalando optimized large-scale inference and streamlined ML operations on Amazon SageMaker

This post is cowritten with Mones Raslan, Ravi Sharma and Adele Gouttes from Zalando.

Zalando SE is one of Europe’s largest ecommerce fashion retailers with around 50 million active customers. Zalando faces the challenge of regular (weekly or daily) discount steering for more than 1 million products, also referred to as markdown pricing. Markdown pricing is a pricing approach that adjusts prices over time and is a common strategy to maximize revenue from goods that have a limited lifespan or are subject to seasonal demand (Sul 2023).

Because many items are ordered ahead of season and not replenished afterwards, businesses have an interest in selling the products evenly throughout the season. The main rationale is to avoid overstock and understock situations. An overstock situation would lead to high costs after the season ends, and an understock situation would lead to lost sales because customers would choose to buy at competitors.

To address this issue, discount steering is an effective approach because it influences item-level demand and therefore stock levels.

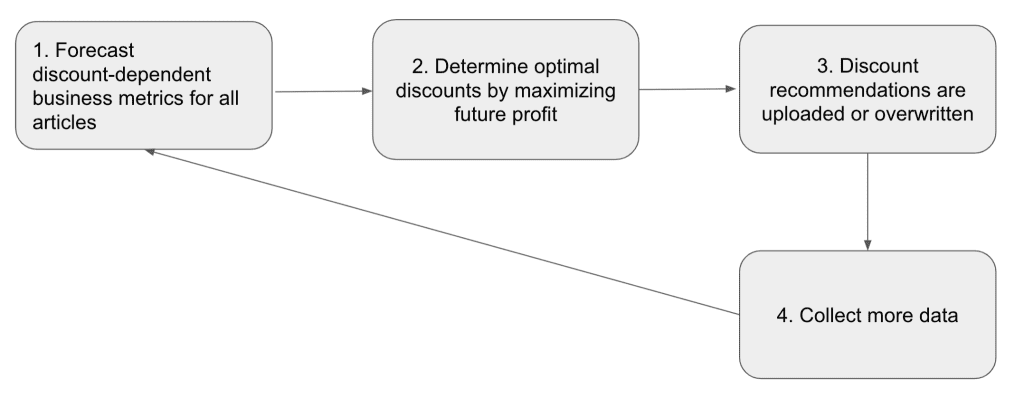

The markdown pricing algorithmic solution Zalando relies on is a forecast-then-optimize approach (Kunz et al. 2023 and Streeck et al. 2024). A high-level description of the markdown pricing algorithm solution can be broken down into four steps:

- Discount-dependent forecast – Using past data, forecast future discount-dependent quantities that are relevant for determining the future profit of an item. The following are important metrics that need to be forecasted:

-

- Demand – How many items will be sold in the next X weeks for different discounts?

- Return rate – What share of sold items will be returned by the customer?

- Return time – When will a returned item reappear in the warehouse so that it can be sold again?

- Fulfillment costs – How much will shipping and returning an item cost?

- Residual value – At what price can an item be realistically sold after the end of the season?

-

- Determine an optimal discount – Use the forecasts from Step 1 as input to maximize profit as a function of discount, which is subject to business and stock constraints. Concrete details can be found in Streeck et al. 2024.

- Recommendations – Discount recommendations determined in Step 2 are incorporated into the shop or overwritten by pricing managers.

- Data collection – Updated shop prices lead to updated demand. The new information is used to enhance the training sets used in Step 1 for forecasting discounts.

The following diagram illustrates this workflow.

The focus of this post is on Step 1, creating a discount-dependent forecast. Depending on the complexity of the problem and the structure of underlying data, the predictive models at Zalando range from simple statistical averages, over tree-based models to a Transformer-based deep learning architecture (Kunz et al. 2023).

Regardless of the models used, they all include data preprocessing, training, and inference over several billions of records containing weekly data spanning multiple years and markets to produce forecasts. Operating such large-scale forecasting requires resilient, reusable, reproducible, and automated machine learning (ML) workflows with fast experimentation and continuous improvements.

In this post, we present the implementation and orchestration of the forecast model’s training and inference. The solution was built in a recent collaboration between AWS Professional Services, under which Well-Architected machine learning design principles were followed.

The result of the collaboration is a blueprint that is being reused for similar use cases within Zalando.

Motivation for streamlined ML operations and large-scale inference

As mentioned earlier, discount steering of more than a million items every week requires generating a large amount of forecast records (approximately 10 billion). Effective discount steering calls for continuous improvement of forecasting accuracy.

To improve forecasting accuracy, all involved ML models need to be retrained, and predictions need to be produced weekly, and in some cases daily.

Given the amount of data and nature of ML models in question, training and inference takes from several hours to multiple days. Any error in the process represents risks in terms of operational costs and opportunity costs because Zalando’s commercial pricing team expects results according to defined service level objectives (SLOs).

If an ML model training or inference fails in any given week, an ML model with outdated data is used to generate the forecast records. This has a direct impact on revenue for Zalando because the forecasts and discounts are less accurate when using outdated data.

In this context, our motivation for streamlining ML operations (MLOps) can be summarized as follows:

- Speed up experimentation and evaluation, and enable rapid prototyping and provide sufficient time to meet SLOs

- Design the architecture in a templated approach with the objective of supporting multiple model training and inference, providing a unified ML infrastructure and enabling automated integration for training and inference

- Provide scalability to accommodate different types of forecasting models (also supporting GPU) and growing datasets

- Make end-to-end ML pipelines and experimentation repeatable, fault-tolerant, and traceable

To achieve these objectives, we explored several distributed computing tools.

During our analysis phase, we discovered two key factors that influenced our choice of distributed computing tool. First, our input datasets were stored in the columnar Parquet format, spread across multiple partitions. Second, the required inference operations exhibited embarrassingly parallel characteristics, meaning they could be run independently without necessitating inter-node communication. These factors guided our decision-making process for selecting the most suitable distributed computing tool.

We explored multiple big data processing solutions and decided to use an Amazon SageMaker Processing job for the following reasons:

- It’s highly configurable, with support of pre-built images, custom cluster requirements, and containers. This makes it straightforward to manage and scale with no overhead of inter-node communication.

- Amazon SageMaker supports effortless experimentation with Amazon SageMaker Studio.

- SageMaker Processing integrates seamlessly with AWS Identity and Access Management (IAM), Amazon Simple Storage Service (Amazon S3), AWS Step Functions, and other AWS services.

- SageMaker Processing supports the option to upgrade to GPUs with minimal change in the architecture.

- SageMaker Processing unifies our training and inference architecture, enabling us to use inference architecture for model backtesting.

We also explored other tools, but preferred SageMaker Processing jobs for the following reasons:

- Apache Spark on Amazon EMR – Due to the inference operations displaying embarrassingly parallel characteristics and not requiring inter-node communication, we decided against using Spark on Amazon EMR, which involved additional overhead for inter-node communication.

- SageMaker batch transform jobs – Batch transform jobs have a hard limit of 100 MB payload size, which couldn’t accommodate the dataset partitions. This proved to be a limiting factor for running batch inference on it.

Solution overview

Large-scale inference requires a scalable inference and scalable training solution.

We approached this by designing an architecture with an event-driven principle in mind that enabled us to build ML workflows for training and inference using infrastructure as code (IaC). At the same time, we incorporated continuous integration and delivery (CI/CD) processes, automated testing, and model versioning into the solution. Because applied scientists need to iterate and experiment, we created a flexible experimentation environment very close to the production one.

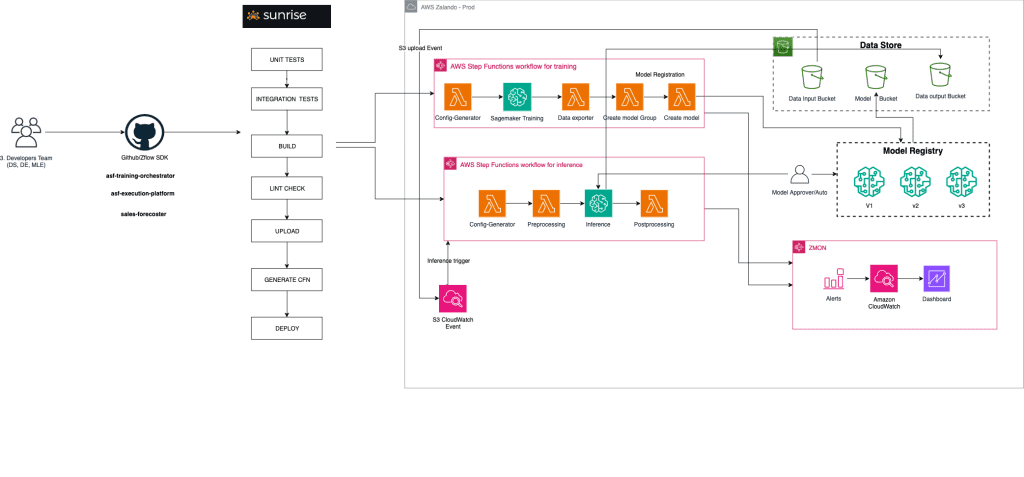

The following high-level architecture diagram shows the ML solution deployed on AWS, which is now used by Zalando’s forecasting team to run pricing forecasting models.

The architecture consists of the following components:

- Sunrise – Sunrise is Zalando’s internal CI/CD tool, which automates the deployment of the ML solution in an AWS environment.

- AWS Step Functions – AWS Step Functions orchestrates the entire ML workflow, coordinating various stages such as model training, versioning, and inference. Step Functions can seamlessly integrate with AWS services such as SageMaker, AWS Lambda, and Amazon S3.

- Data store – S3 buckets serve as the data store, holding input and output data as well as model artifacts.

- Model registry – Amazon SageMaker Model Registry provides a centralized repository for organizing, versioning, and tracking models.

- Logging and monitoring – Amazon CloudWatch handles logging and monitoring, forwarding the metrics to Zalando’s internal alerting tool for further analysis and notifications.

To orchestrate multiple steps within the training and inference pipelines, we used Zflow, a Python-based SDK developed by Zalando that uses the AWS Cloud Development Kit (AWS CDK) to create Step Functions workflows. It uses SageMaker training jobs for model training, processing jobs for batch inference, and the model registry for model versioning.

All the components are declared using Zflow and are deployed using CI/CD (Sunrise) to build reusable end-to-end ML workflows, while integrating with AWS services.

The reusable ML workflow allows experimentation and productionization of different models. This enables the separation of the model orchestration and business logic, allowing data scientists and applied scientists to focus on the business logic and use these predefined ML workflows.

A fully automated production workflow

The MLOps lifecycle starts with ingesting the training data in the S3 buckets. On the arrival of data, Amazon EventBridge invokes the training workflow (containing SageMaker training jobs). Upon completion of the training job, a new model is created and stored in SageMaker Model Registry.

To maintain quality control, the team verifies the model properties against the predetermined requirements. If the model meets the criteria, it’s approved for inference. After a model is approved, the inference pipeline will point to the latest approved version of that model group.

When inference data is ingested on Amazon S3, EventBridge automatically runs the inference pipeline.

This automated workflow streamlines the entire process, from data ingestion to inference, reducing manual interventions and minimizing the risk of errors. By using AWS services such as Amazon S3, EventBridge, SageMaker, and Step Functions, we were able to orchestrate the end-to-end MLOps lifecycle efficiently and reliably.

Seamless integration of experiments

To allow for effortless model experimentation, we created SageMaker notebooks that use the Amazon SageMaker SDK to launch SageMaker training and processing jobs. The notebooks use the same Docker images (SageMaker Studio notebook kernels) as the ones used in CI/CD workflows all the way to production. With these notebooks, applied scientists can bring their own code and connect to different data sources, while also experimenting with different instance sizes by scaling up or down computation and memory requirements. The experimentation setup reflects the production workflows.

Conclusion

In this post, we described how MLOps, in collaboration between Zalando and AWS Professional Services, were streamlined with the objective of improving discount steering at Zalando.

MLOps best practices implemented for forecast model training and inference has provided Zalando a flexible and scalable architecture with reduced engineering complexity.

The implemented architecture enables Zalando’s team to conduct large-scale inference, with frequent experimentation and decreased risks of missing weekly SLOs.

Templatization and automation is expected to provide engineers with weekly savings of 3–4 hours per ML model in operations and maintenance tasks. Furthermore, the transition from data science experimentation into model productionization has been streamlined.

To learn more about ML streamlining, experimentation, and scalability, refer to the following blog posts:

- How Booking.com modernized its ML experimentation framework with Amazon SageMaker

- MLOps foundation roadmap for enterprises with Amazon SageMaker

- LLM experimentation at scale using Amazon SageMaker Pipelines and MLflow

- Amazon SageMaker now integrates with Amazon DataZone to streamline machine learning governance

References

- Eleanor, L., R. Brian, K. Jalaj, and D. A. Little. 2022. “Promotheus: An End-to-End Machine Learning Framework for Optimizing Markdown in Online Fashion E-commerce.” arXiv. https://arxiv.org/abs/2207.01137.

- Kunz, M., S. Birr, M. Raslan, L. Ma, Z. Li, A. Gouttes, M. Koren, et al. 2023. “Deep Learning based Forecasting: a case study from the online fashion industry.” In Forecasting with Artificial Intelligence: Theory and Applications (Switzerland), 2023.

- Streeck, R., T. Gellert, A. Schmitt, A. Dipkaya, V. Fux, T. Januschowski, and T. Berthold. 2024. “Tricks from the Trade for Large-Scale Markdown Pricing: Heuristic Cut Generation for Lagrangian Decomposition.” arXiv. https://arxiv.org/abs/2404.02996#.

- Sul, Inki. 2023. “Customer-centric Pricing: Maximizing Revenue Through Understanding Customer Behavior.” The University of Texas at Dallas. https://utd-ir.tdl.org/items/a2b9fde1-aa17-4544-a16e-c5a266882dda.

About the Authors

Mones Raslan is an Applied Scientist at Zalando’s Pricing Platform with a background in applied mathematics. His work encompasses the development of business-relevant and scalable forecasting models, stretching from prototyping to deployment. In his spare time, Mones enjoys operatic singing and scuba diving.

Mones Raslan is an Applied Scientist at Zalando’s Pricing Platform with a background in applied mathematics. His work encompasses the development of business-relevant and scalable forecasting models, stretching from prototyping to deployment. In his spare time, Mones enjoys operatic singing and scuba diving.

Ravi Sharma is a Senior Software Engineer at Zalando’s Pricing Platform, bringing experience across diverse domains such as football betting, radio astronomy, healthcare, and ecommerce. His broad technical expertise enables him to deliver robust and scalable solutions consistently. Outside work, he enjoys nature hikes, table tennis, and badminton.

Ravi Sharma is a Senior Software Engineer at Zalando’s Pricing Platform, bringing experience across diverse domains such as football betting, radio astronomy, healthcare, and ecommerce. His broad technical expertise enables him to deliver robust and scalable solutions consistently. Outside work, he enjoys nature hikes, table tennis, and badminton.

Adele Gouttes is a Senior Applied Scientist, with experience in machine learning, time series forecasting, and causal inference. She has experience developing products end to end, from the initial discussions with stakeholders to production, and creating technical roadmaps for cross-functional teams. Adele plays music and enjoys gardening.

Adele Gouttes is a Senior Applied Scientist, with experience in machine learning, time series forecasting, and causal inference. She has experience developing products end to end, from the initial discussions with stakeholders to production, and creating technical roadmaps for cross-functional teams. Adele plays music and enjoys gardening.

Irem Gokcek is a Data Architect on the AWS Professional Services team, with expertise spanning both analytics and AI/ML. She has worked with customers from various industries, such as retail, automotive, manufacturing, and finance, to build scalable data architectures and generate valuable insights from the data. In her free time, she is passionate about swimming and painting.

Irem Gokcek is a Data Architect on the AWS Professional Services team, with expertise spanning both analytics and AI/ML. She has worked with customers from various industries, such as retail, automotive, manufacturing, and finance, to build scalable data architectures and generate valuable insights from the data. In her free time, she is passionate about swimming and painting.

Jean-Michel Lourier is a Senior Data Scientist within AWS Professional Services. He leads teams implementing data-driven applications side by side with AWS customers to generate business value out of their data. He’s passionate about diving into tech and learning about AI, machine learning, and their business applications. He is also a cycling enthusiast.

Jean-Michel Lourier is a Senior Data Scientist within AWS Professional Services. He leads teams implementing data-driven applications side by side with AWS customers to generate business value out of their data. He’s passionate about diving into tech and learning about AI, machine learning, and their business applications. He is also a cycling enthusiast.

Junaid Baba, a Senior DevOps Consultant with AWS Professional Services, has expertise in machine learning, generative AI operations, and cloud-centered architectures. He applies these skills to design scalable solutions for clients in the global retail and financial services sectors. In his spare time, Junaid spends quality time with his family and finds joy in hiking adventures.

Junaid Baba, a Senior DevOps Consultant with AWS Professional Services, has expertise in machine learning, generative AI operations, and cloud-centered architectures. He applies these skills to design scalable solutions for clients in the global retail and financial services sectors. In his spare time, Junaid spends quality time with his family and finds joy in hiking adventures.

Luis Bustamante is a Senior Engagement Manager within AWS Professional Services. He helps customers accelerate their journey to the cloud through expertise in digital transformation, cloud migration, and IT remote delivery. He enjoys traveling and reading about historical events.

Luis Bustamante is a Senior Engagement Manager within AWS Professional Services. He helps customers accelerate their journey to the cloud through expertise in digital transformation, cloud migration, and IT remote delivery. He enjoys traveling and reading about historical events.

Viktor Malesevic is a Senior Machine Learning Engineer within AWS Professional Services, leading teams to build advanced machine learning solutions in the cloud. He’s passionate about making AI impactful, overseeing the entire process from modeling to production. In his spare time, he enjoys surfing, cycling, and traveling.

Viktor Malesevic is a Senior Machine Learning Engineer within AWS Professional Services, leading teams to build advanced machine learning solutions in the cloud. He’s passionate about making AI impactful, overseeing the entire process from modeling to production. In his spare time, he enjoys surfing, cycling, and traveling.

Leave a Reply