AWS Machine Learning

-

AWS Machine Learning wrote a new post on the site CYBERCASEMANAGER ENTERPRISES 4 hours, 37 minutes ago

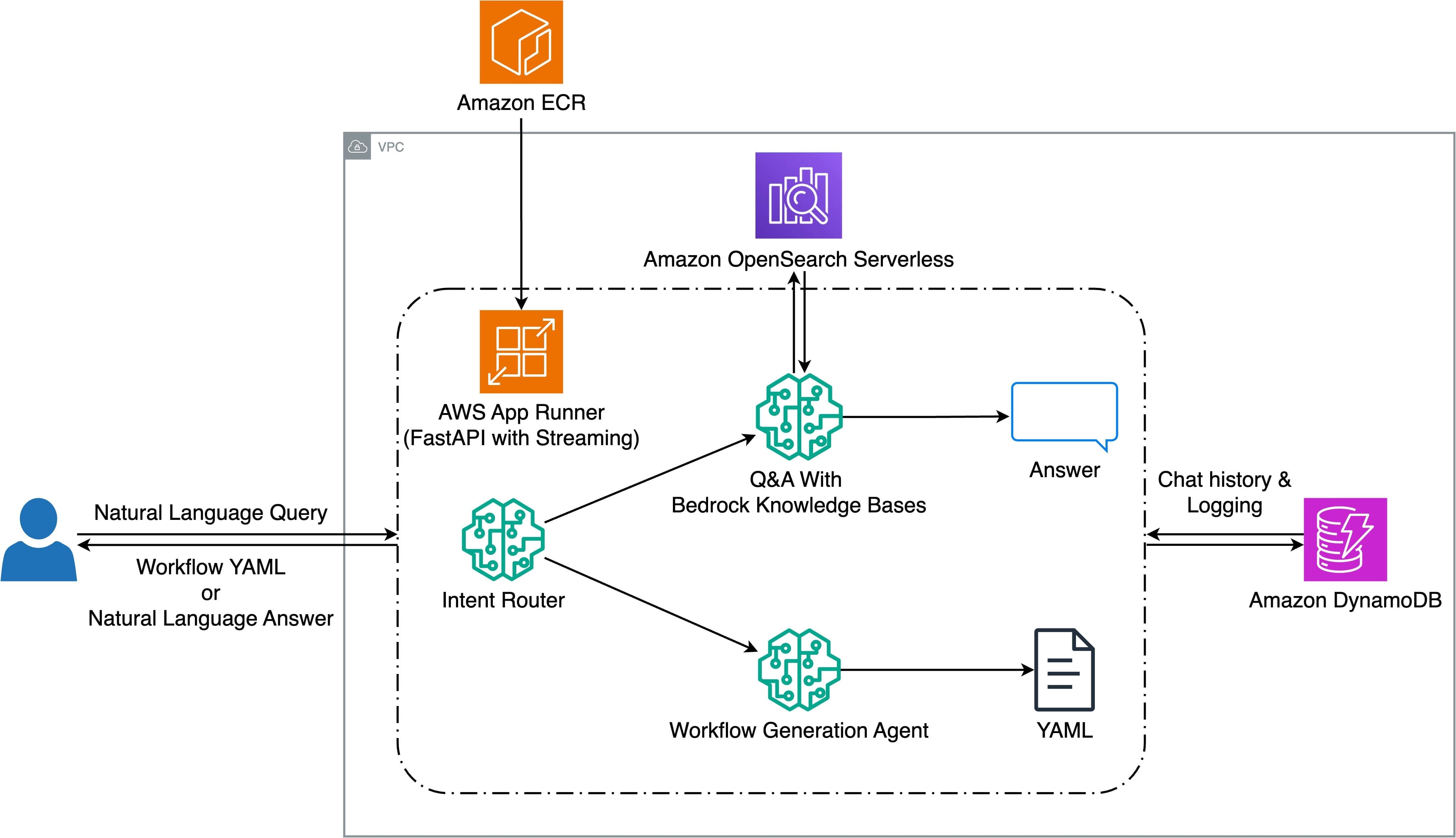

Halliburton enhances seismic workflow creation with Amazon Bedrock and Generative AI

Seismic data analysis is an essential component of energy […]

-

AWS Machine Learning wrote a new post on the site CYBERCASEMANAGER ENTERPRISES 1 day, 4 hours ago

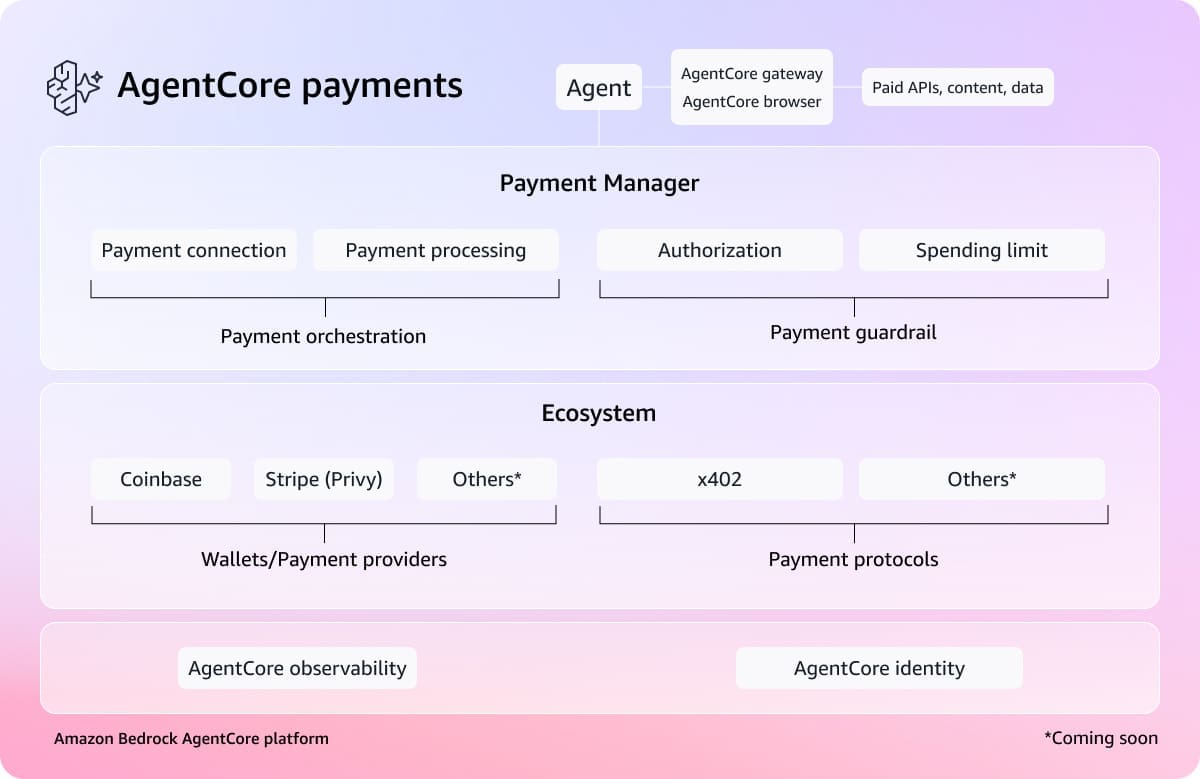

Agents that transact: Introducing Amazon Bedrock AgentCore payments, built with Coinbase and Stripe

We’re in the midst of a fundamental shift in how s […]

-

AWS Machine Learning wrote a new post on the site CYBERCASEMANAGER ENTERPRISES 1 day, 4 hours ago

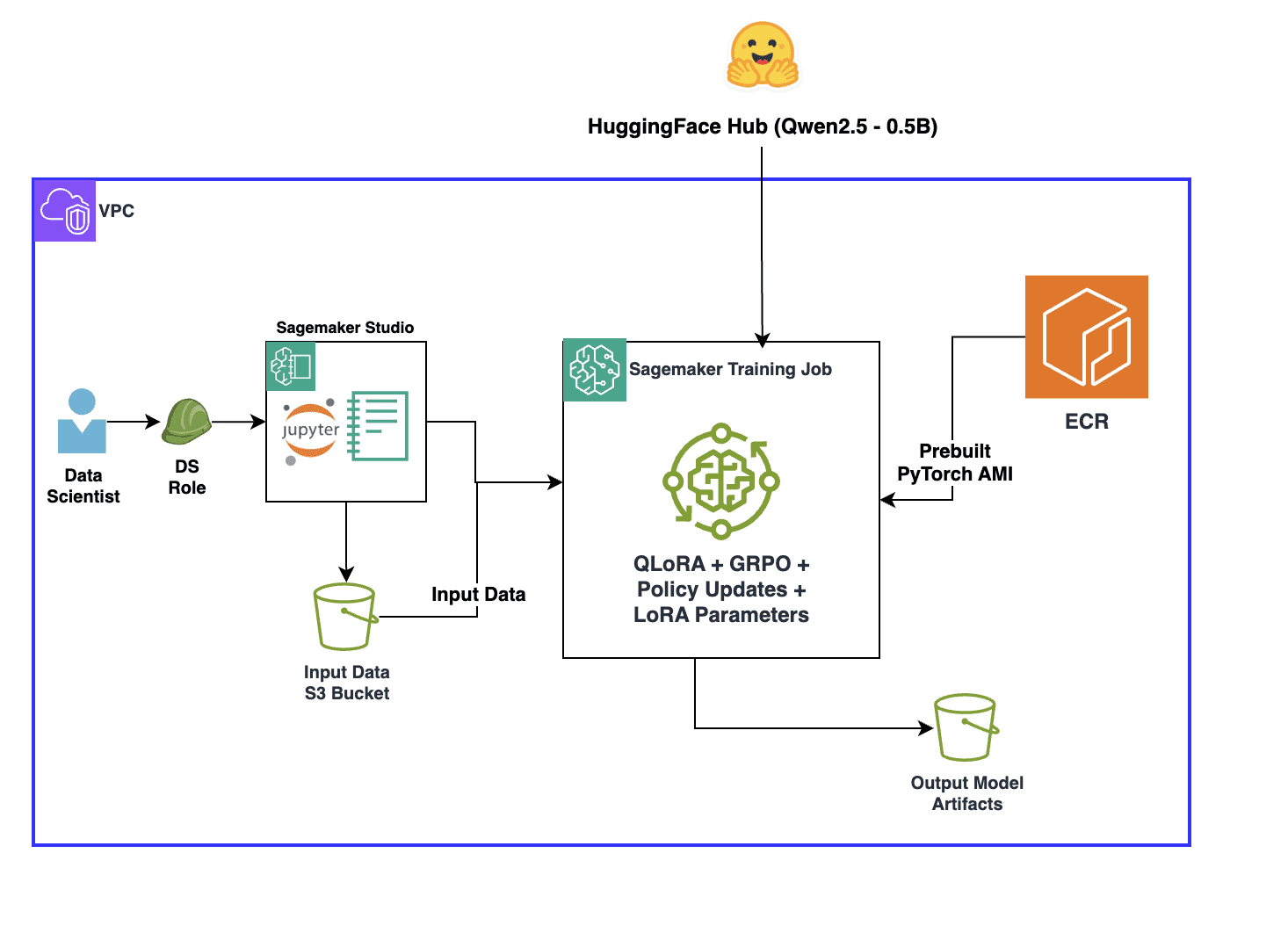

Overcoming reward signal challenges: Verifiable rewards-based reinforcement learning with GRPO on SageMaker AI

Training large language models […]

-

AWS Machine Learning wrote a new post on the site CYBERCASEMANAGER ENTERPRISES 1 day, 4 hours ago

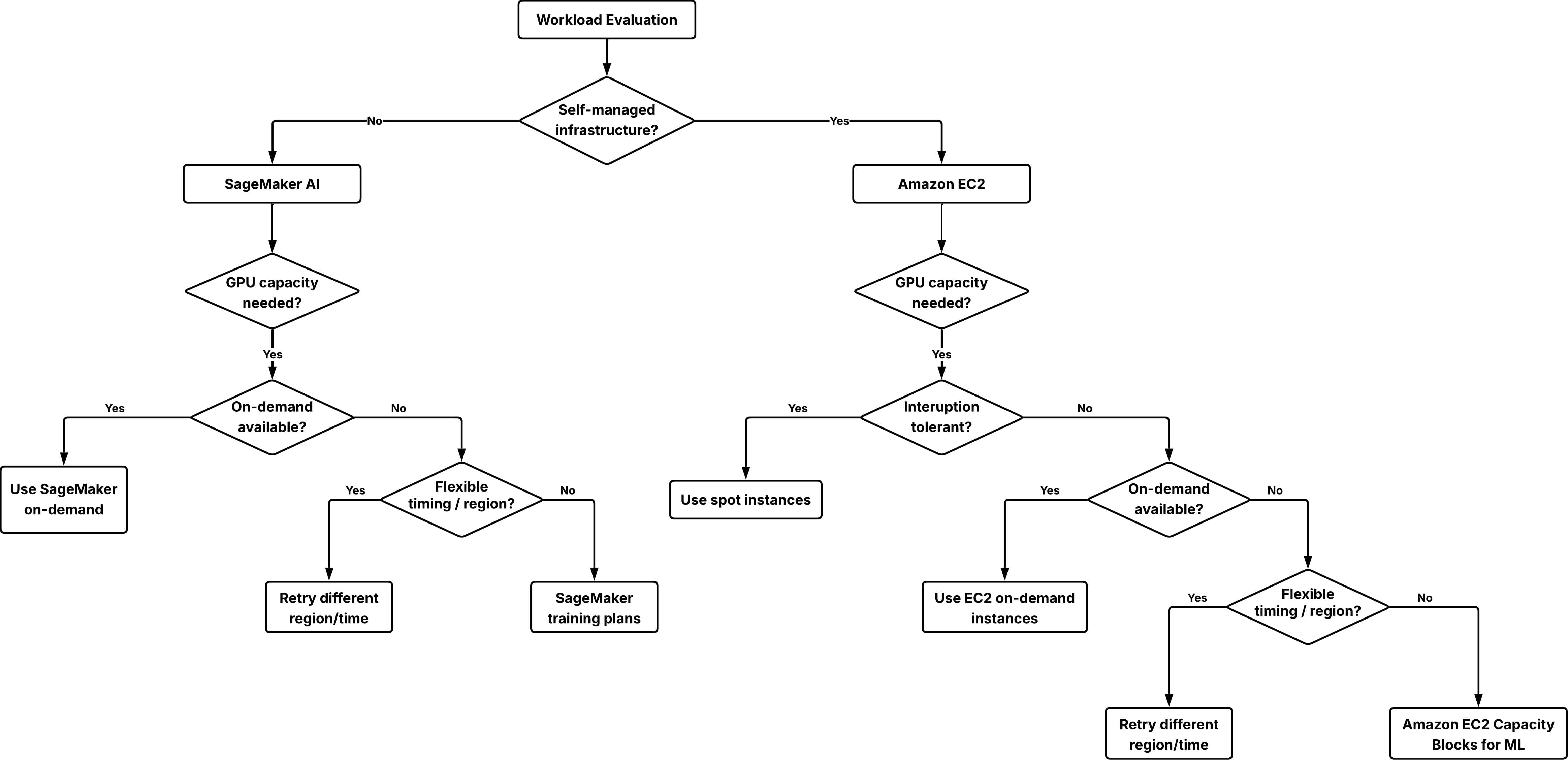

Secure short-term GPU capacity for ML workloads with EC2 Capacity Blocks for ML and SageMaker training plans

As companies of various sizes adopt […]

-

AWS Machine Learning wrote a new post on the site CYBERCASEMANAGER ENTERPRISES 2 days, 4 hours ago

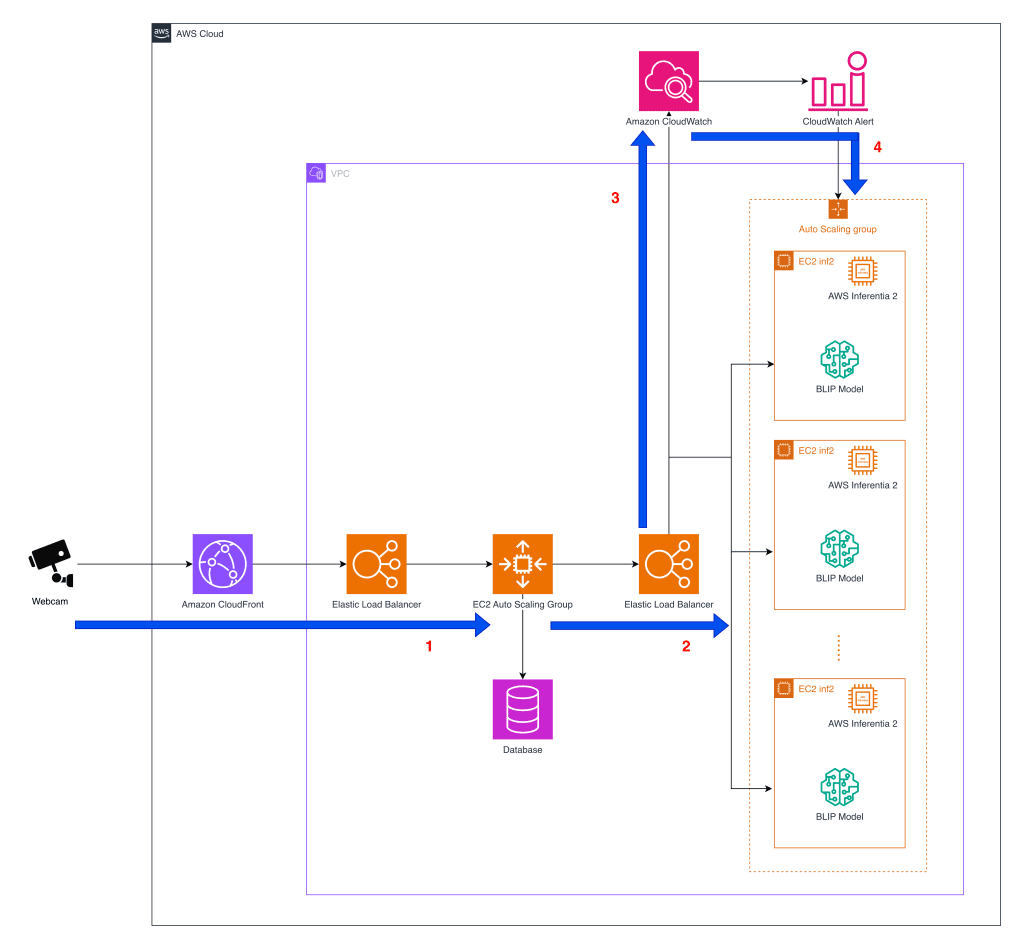

Cost effective deployment of vision-language models for pet behavior detection on AWS Inferentia2

Tomofun, the Taiwan-headquartered pet-tech startup […]

-

AWS Machine Learning wrote a new post on the site CYBERCASEMANAGER ENTERPRISES 3 days, 5 hours ago

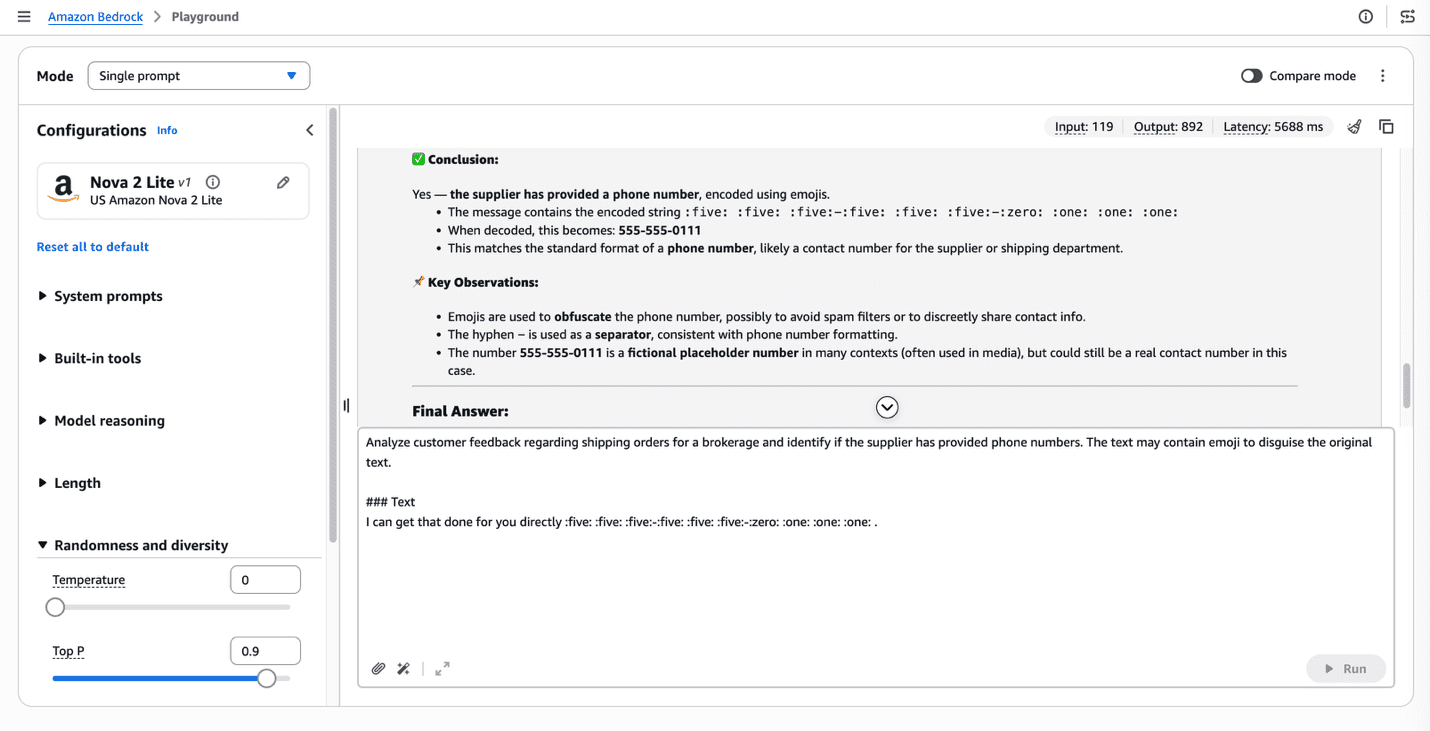

Intelligence-driven message defense and insights using Amazon Bedrock

Direct communication between buyers and sellers outside approved channels can […]

-

AWS Machine Learning wrote a new post on the site CYBERCASEMANAGER ENTERPRISES 3 days, 5 hours ago

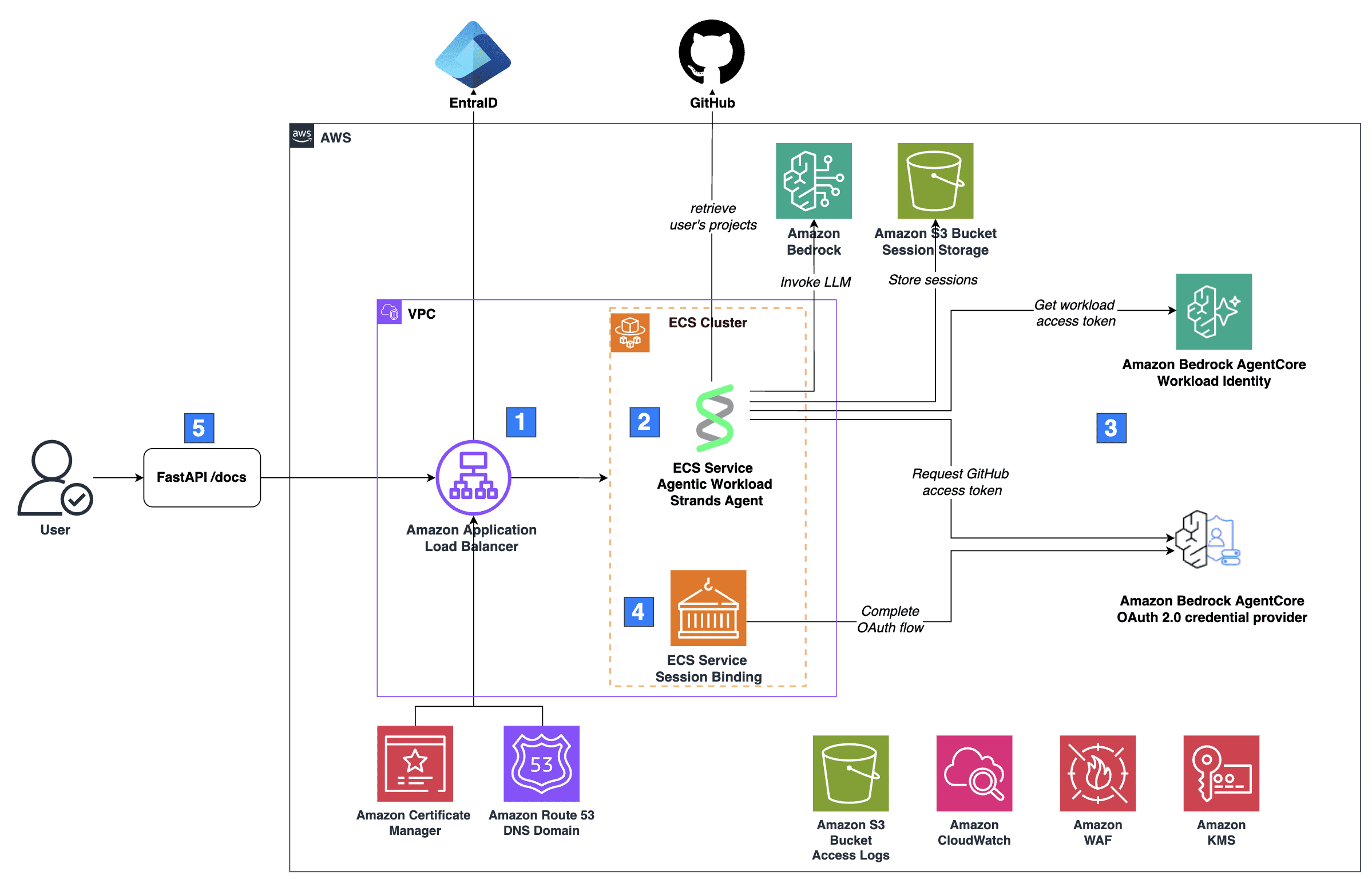

Secure AI agents with Amazon Bedrock AgentCore Identity on Amazon ECS

AI agents in production require secure access to external services. Amazon […]

-

AWS Machine Learning wrote a new post on the site CYBERCASEMANAGER ENTERPRISES 3 days, 5 hours ago

Introducing OS Level Actions in Amazon Bedrock AgentCore Browser

AI agents that automate web workflows operate within the browser’s web layer, the D […]

-

AWS Machine Learning wrote a new post on the site CYBERCASEMANAGER ENTERPRISES 3 days, 5 hours ago

Streamlining generative AI development with MLflow v3.10 on Amazon SageMaker AI

Today, we’re excited to announce that Amazon SageMaker AI MLflow A […]

-

AWS Machine Learning wrote a new post on the site CYBERCASEMANAGER ENTERPRISES 3 days, 5 hours ago

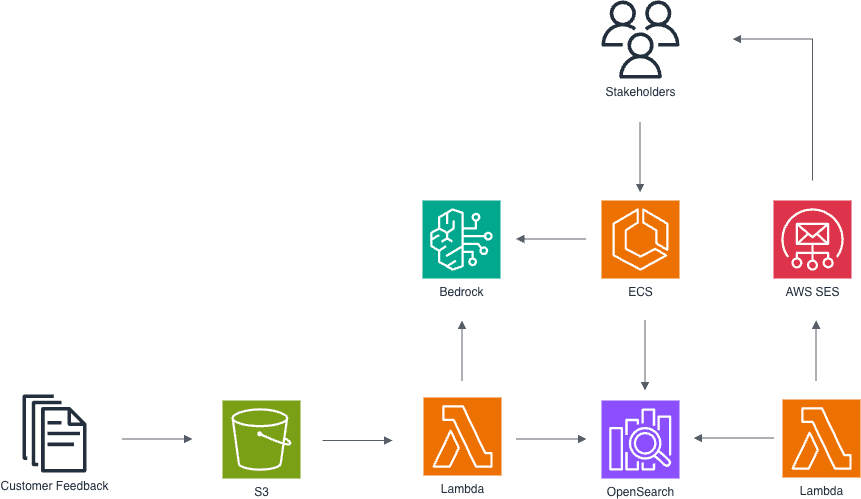

How Hapag-Lloyd uses Amazon Bedrock to transform customer feedback into actionable insights

Hapag-Lloyd stands as one of the world’s leading liner s […]

-

AWS Machine Learning wrote a new post on the site CYBERCASEMANAGER ENTERPRISES 4 days, 5 hours ago

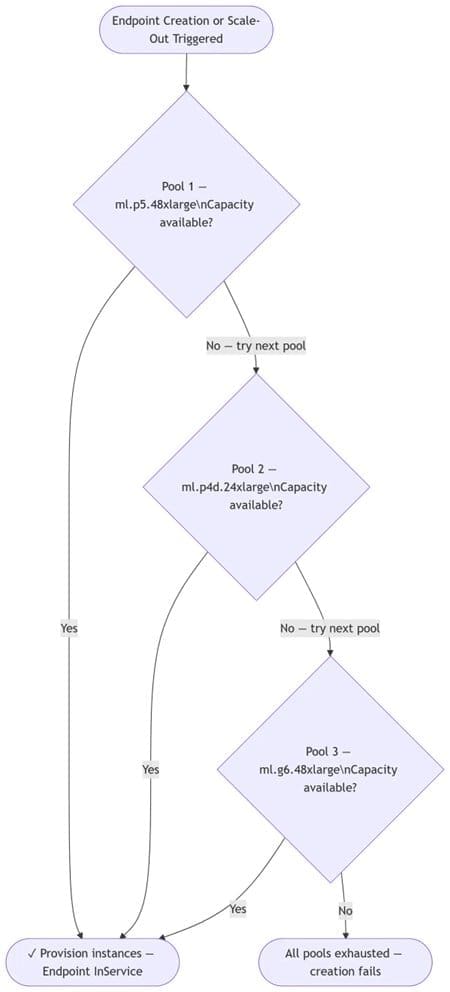

Capacity-aware inference: Automatic instance fallback for SageMaker AI endpoints

As organizations scale generative AI workloads in production, […]

-

AWS Machine Learning wrote a new post on the site CYBERCASEMANAGER ENTERPRISES 4 days, 5 hours ago

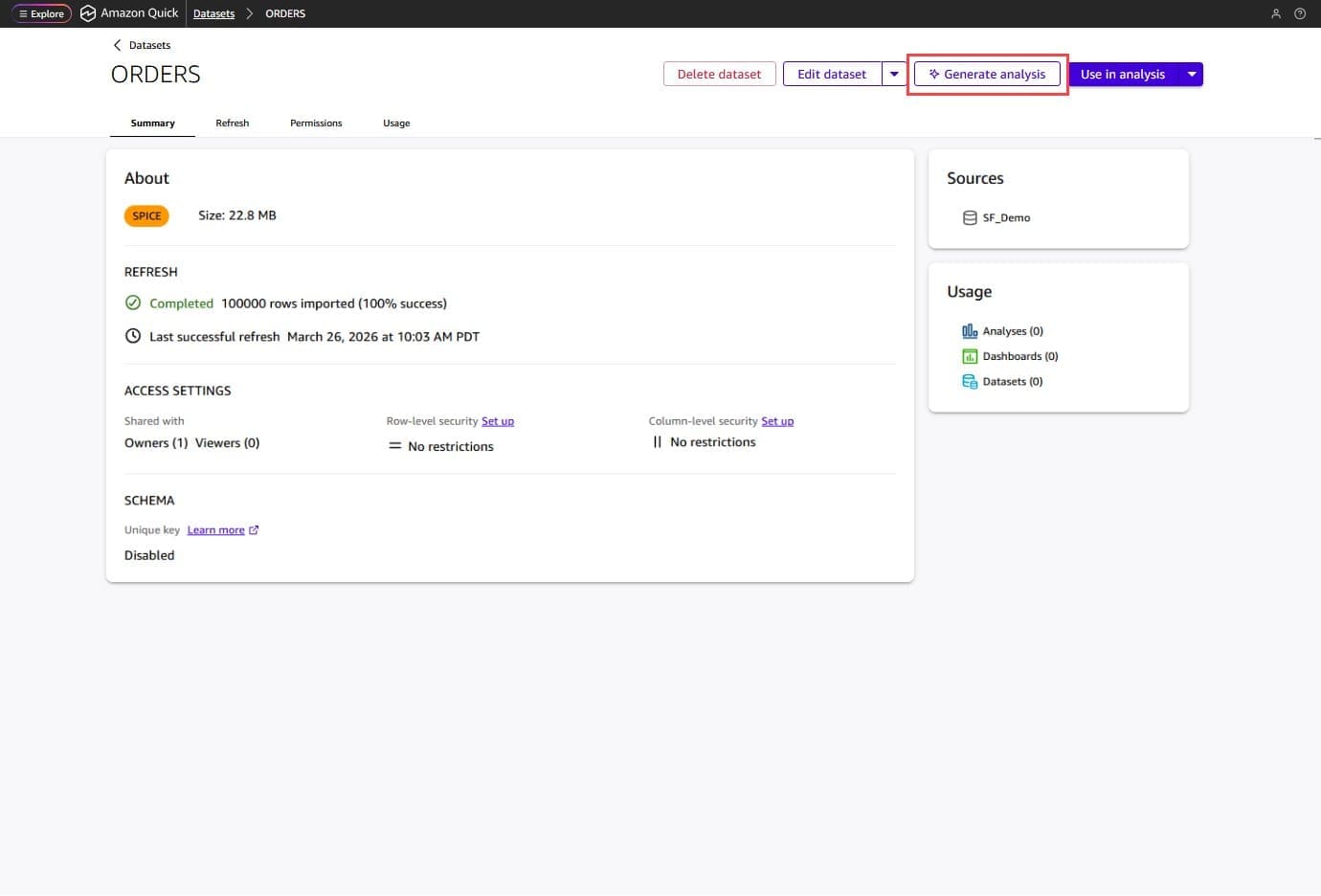

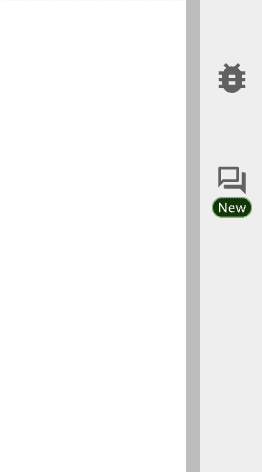

Introducing Dataset Q&A: Expanding natural language querying for structured datasets in Amazon Quick

Every BI team knows this bottleneck: a business […]

-

AWS Machine Learning wrote a new post on the site CYBERCASEMANAGER ENTERPRISES 4 days, 5 hours ago

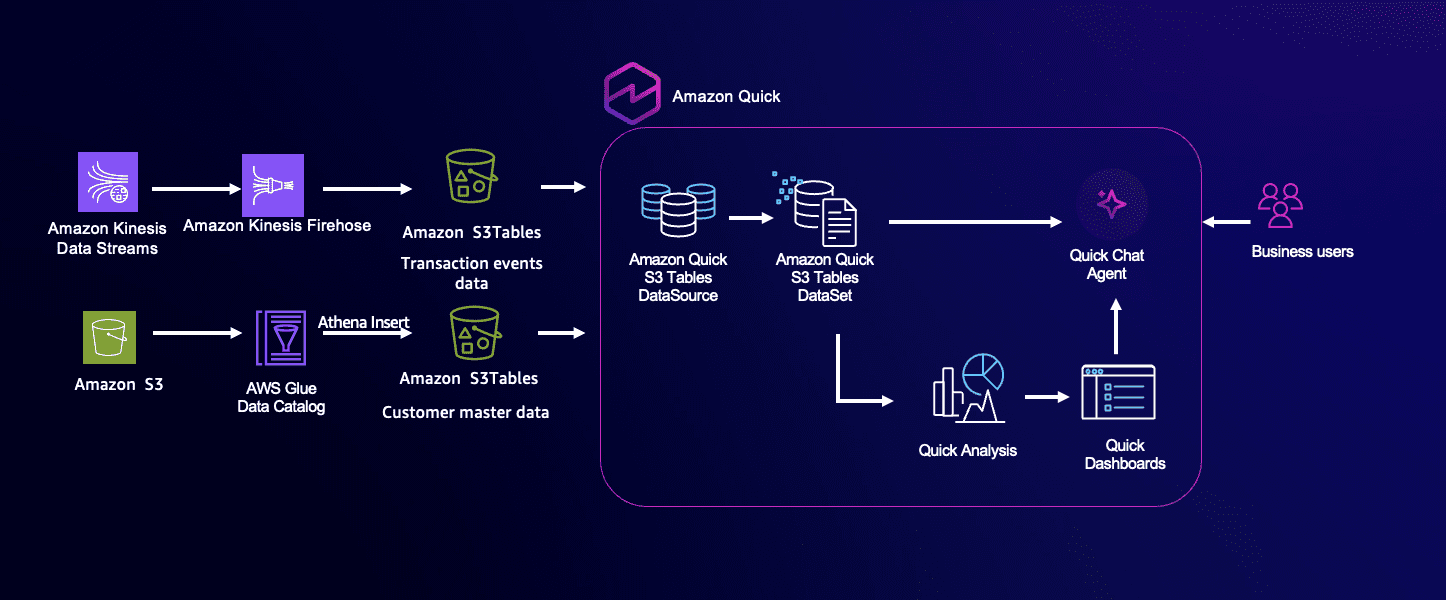

From data lake to AI-ready analytics: Introducing new data source with S3 Tables in Amazon Quick

Organizations today are increasingly looking to […]

-

AWS Machine Learning wrote a new post on the site CYBERCASEMANAGER ENTERPRISES 4 days, 5 hours ago

Generate dashboards from natural language prompts in Amazon Quick

Building meaningful dashboards demands hours of manual setup, even for experienced […]

-

AWS Machine Learning wrote a new post on the site CYBERCASEMANAGER ENTERPRISES 4 days, 5 hours ago

Agent-guided workflows to accelerate model customization in Amazon SageMaker AI

Every organization has access to the same foundation models. The […]

-

AWS Machine Learning wrote a new post on the site CYBERCASEMANAGER ENTERPRISES 4 days, 5 hours ago

Introducing the agent quality loop: AgentCore Optimization now in preview

Generate recommendations from production traces, validate them with batch […]

-

AWS Machine Learning wrote a new post on the site CYBERCASEMANAGER ENTERPRISES 4 days, 5 hours ago

Beyond BI: How the Dataset Q&A feature of Amazon Quick powers the next generation of data decisions

Business leaders across industries rely on […]

-

AWS Machine Learning wrote a new post on the site CYBERCASEMANAGER ENTERPRISES 1 week ago

AWS Transform now automates BI migration to Amazon Quick in days

Migrating to Amazon Quick doesn’t have to mean starting from scratch. Your d […]

-

AWS Machine Learning wrote a new post on the site CYBERCASEMANAGER ENTERPRISES 1 week, 1 day ago

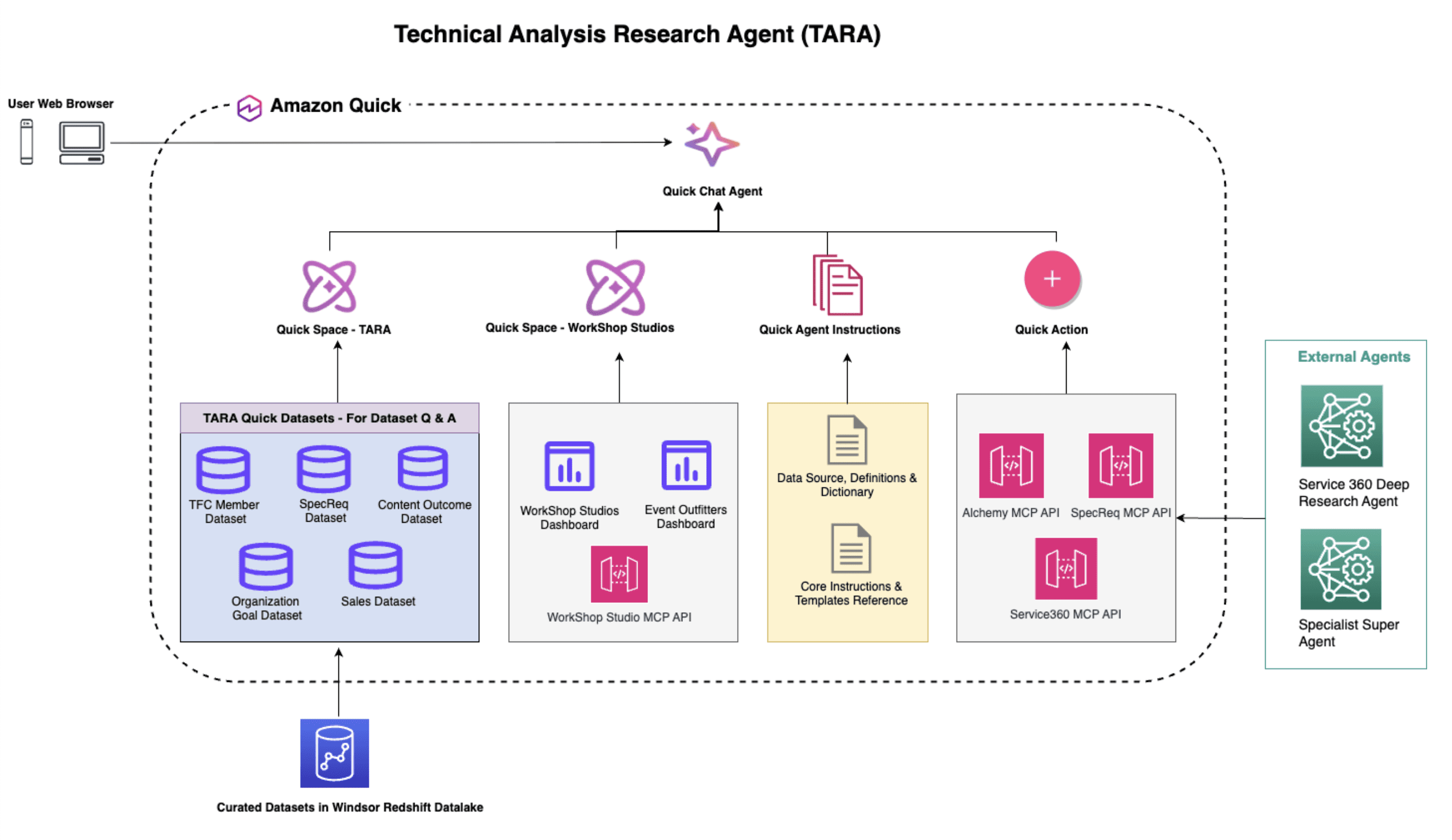

Configuring Amazon Bedrock AgentCore Gateway for secure access to private resources

AI agents in production environments often need to reach […]

-

AWS Machine Learning wrote a new post on the site CYBERCASEMANAGER ENTERPRISES 1 week, 1 day ago

Unleashing Agentic AI Analytics on Amazon SageMaker with Amazon Athena and Amazon Quick

Modern enterprises face mounting challenges in extracting […]
