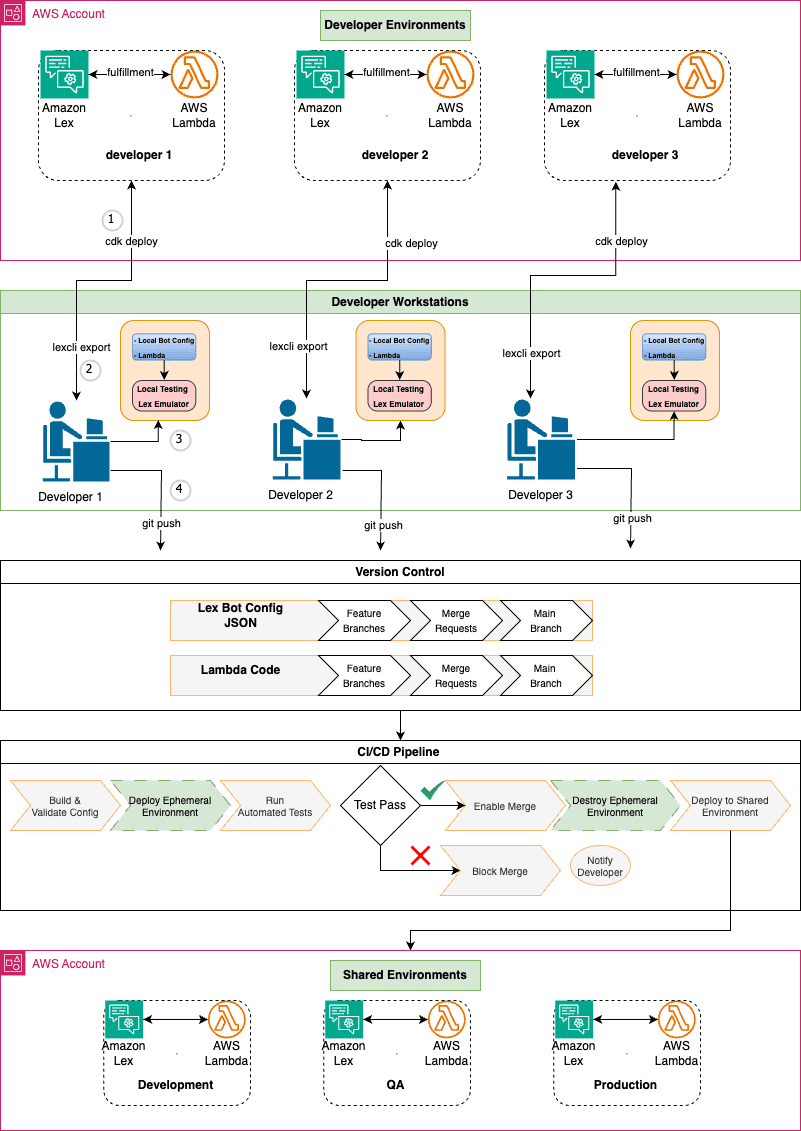

Drive organizational growth with Amazon Lex multi-developer CI/CD pipeline

As your conversational AI initiatives evolve, developing Amazon Lex assistants becomes increasingly complex. Multiple developers working on the same shared Lex instance leads to configuration conflicts, overwritten changes, and slower iteration cycles. Scaling Amazon Lex development requires isolated environments, version control, and automated deployment pipelines. By adopting well-structured continuous

Shared by AWS Machine Learning, March 6, 2026