Vanguard is a global investment management firm, offering a broad selection of investments, advice, retirement services, and insights to individual investors, institutions, and financial professionals. We operate under a unique, investor-owned structure and adhere to a straightforward purpose: To take a stand for all investors, to treat them fairly, …

Shared by AWS Machine Learning April 29, 2026

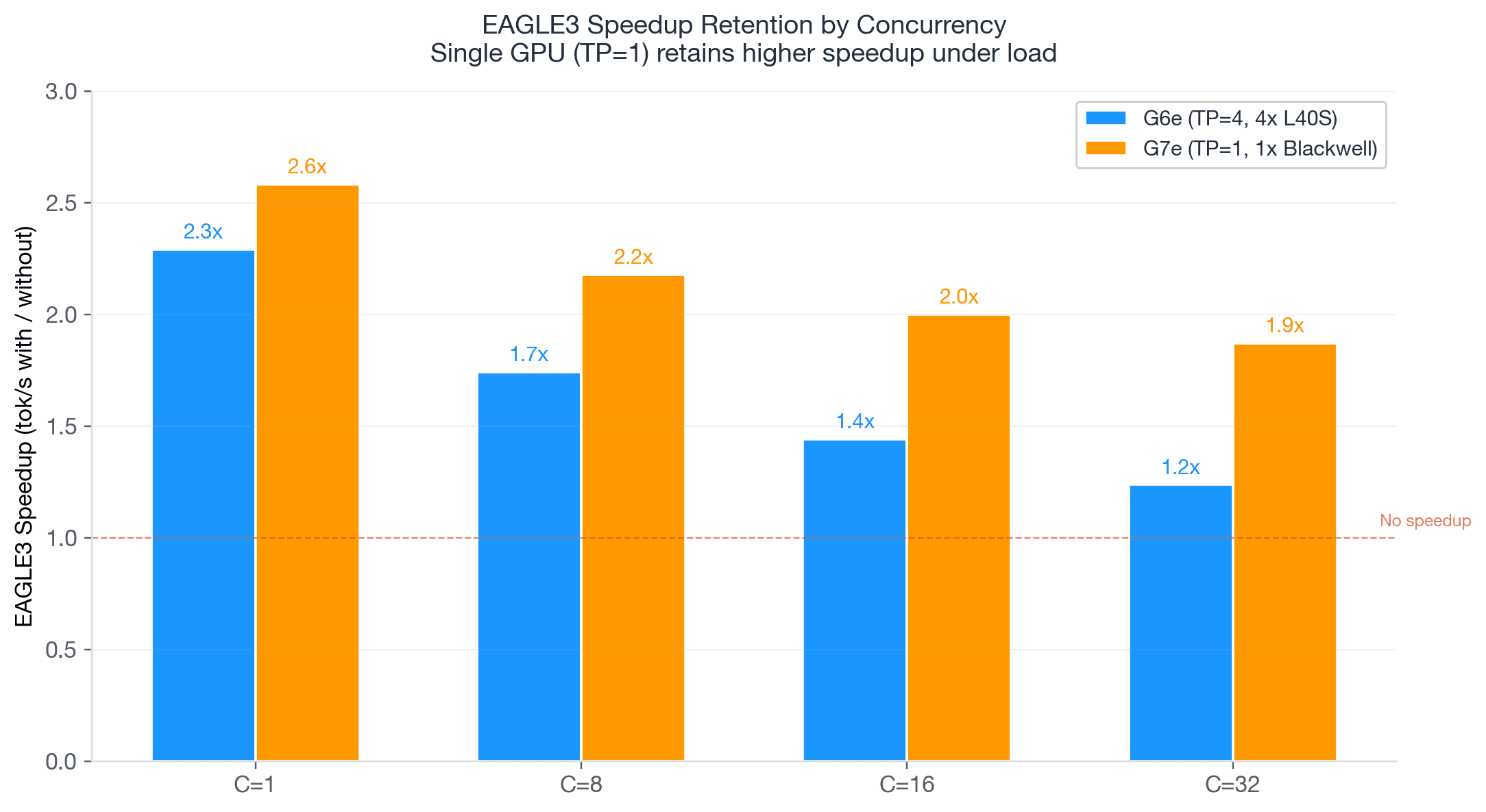

As the demand for generative AI continues to grow, developers and enterprises seek more flexible, cost-effective, and powerful accelerators to meet their needs. Today, we are thrilled to announce the availability of G7e instances powered by NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs on Amazon SageMaker AI. You …

Shared by AWS Machine Learning April 20, 2026

AI agents have evolved significantly beyond chat. Writing code, persisting filesystem state, executing shell commands, and managing state throughout the filesystem are some examples of what they can do. As agentic coding assistants and development workflows have matured, the filesystem has become agents’ primary working memory, extending their …

Shared by AWS Machine Learning April 2, 2026

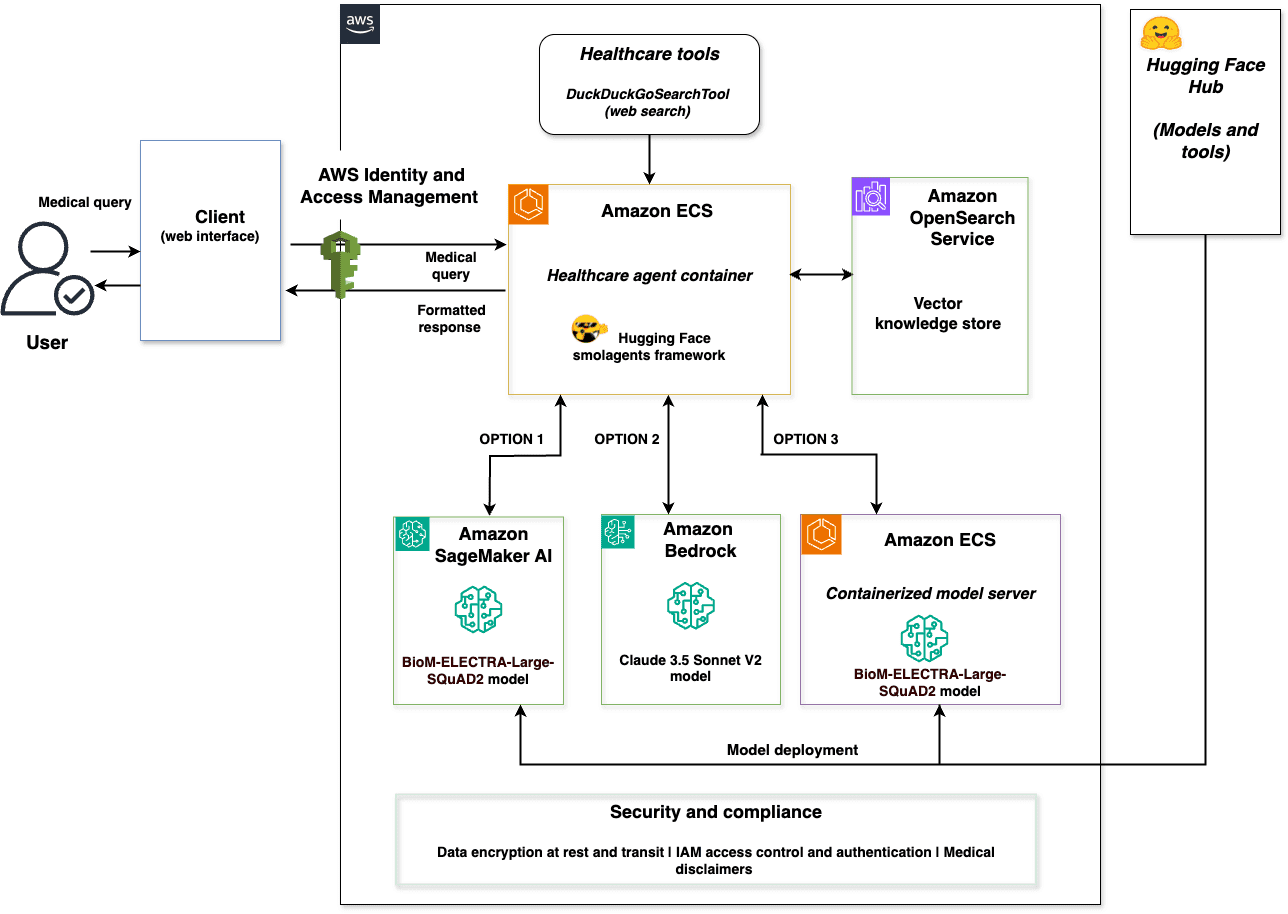

This post is cowritten by Jeff Boudier, Simon Pagezy, and Florent Gbelidji from Hugging Face. Agentic AI systems represent an evolution from conversational AI to autonomous agents capable of complex reasoning, tool usage, and code execution. Enterprise applications benefit from strategic deployment approaches tailored to specific needs. These needs include managed …

Shared by AWS Machine Learning February 24, 2026

This post was written with Alex Gnibus of Stability AI. Stability AI Image Services are now available in Amazon Bedrock, offering ready-to-use media editing capabilities delivered through the Amazon Bedrock API. These image editing tools expand on the capabilities of Stability AI’s Stable Diffusion 3.5 models (SD3.5) and Stable …

Shared by AWS Machine Learning September 19, 2025

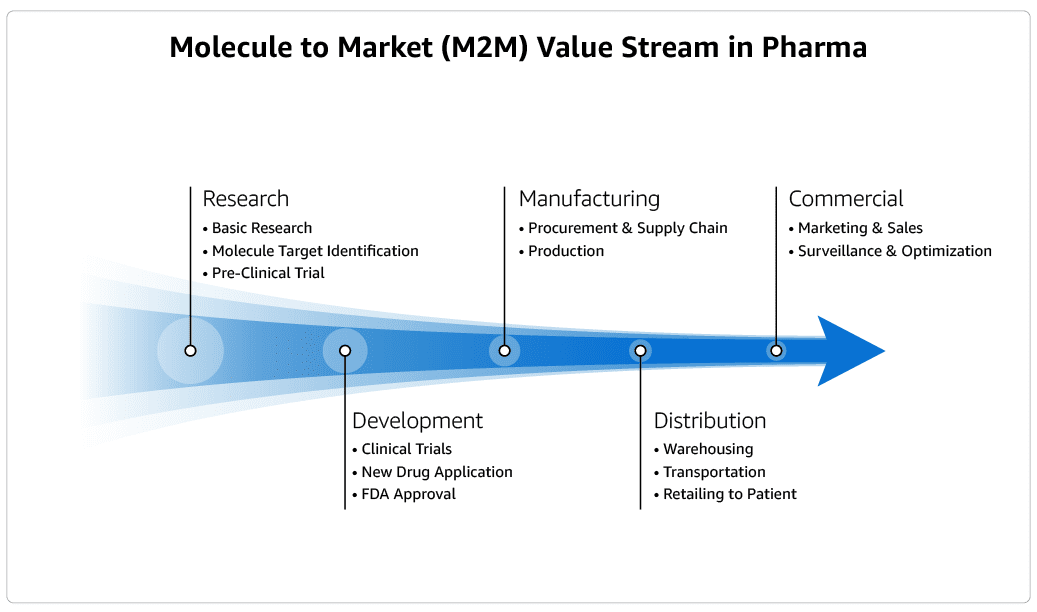

It takes biopharma companies over 10 years, at a cost of over $2 billion and with a failure rate of over 90%, to deliver a new drug to patients. The Market to Molecule (M2M) value stream process, which biopharma companies must apply to bring new drugs to patients, is …

Shared by AWS Machine Learning May 29, 2025

Large language models (LLMs) have revolutionized the field of natural language processing, enabling machines to understand and generate human-like text with remarkable accuracy. However, despite their impressive language capabilities, LLMs are inherently limited by the data they were trained on. Their knowledge is static and confined to the information …

Shared by AWS Machine Learning March 17, 2025

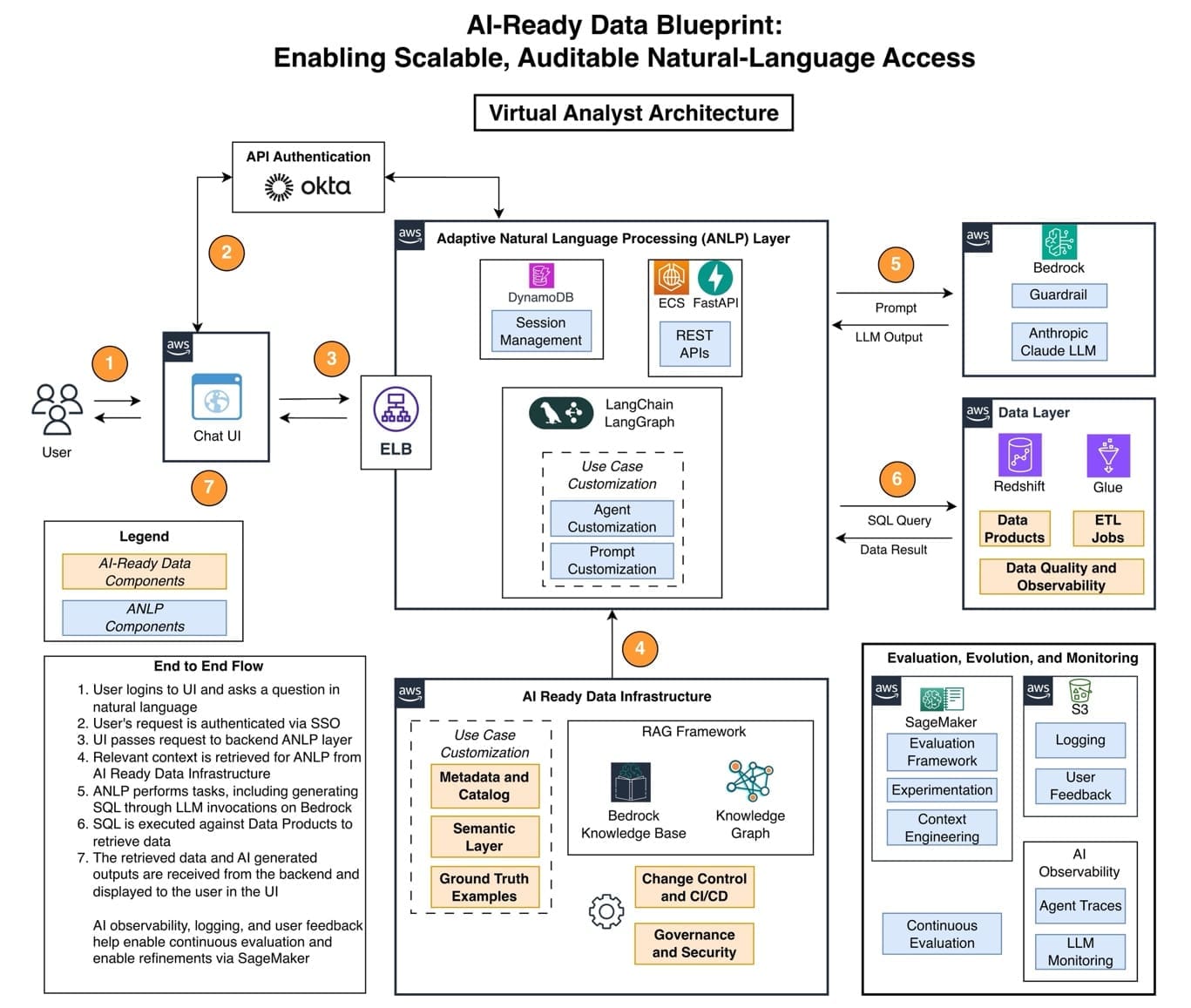

This post was written with Dian Xu and Joel Hawkins of Rocket Companies. Rocket Companies is a Detroit-based FinTech company with a mission to “Help Everyone Home”. With the current housing shortage and affordability concerns, Rocket simplifies the homeownership process through an intuitive and AI-driven experience. This comprehensive framework …

Shared by AWS Machine Learning February 21, 2025

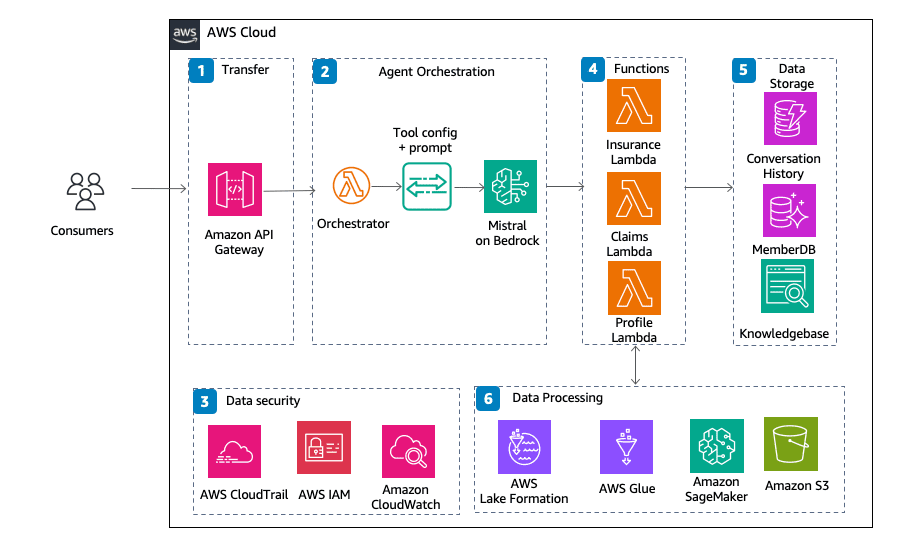

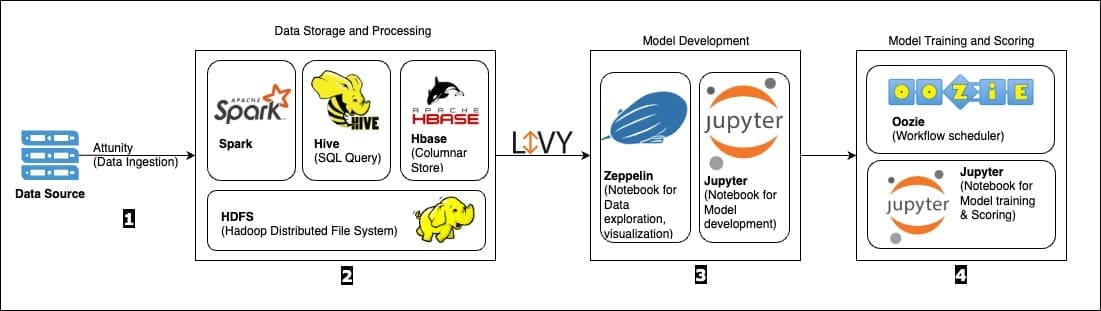

This post is co-written with Javier Beltrán, Ornela Xhelili, and Prasidh Chhabri from Aetion. For decision-makers in healthcare, it is critical to gain a comprehensive understanding of patient journeys and health outcomes over time. Scientists, epidemiologists, and biostatisticians implement a vast range of queries to capture complex, clinically relevant …

Shared by AWS Machine Learning February 7, 2025

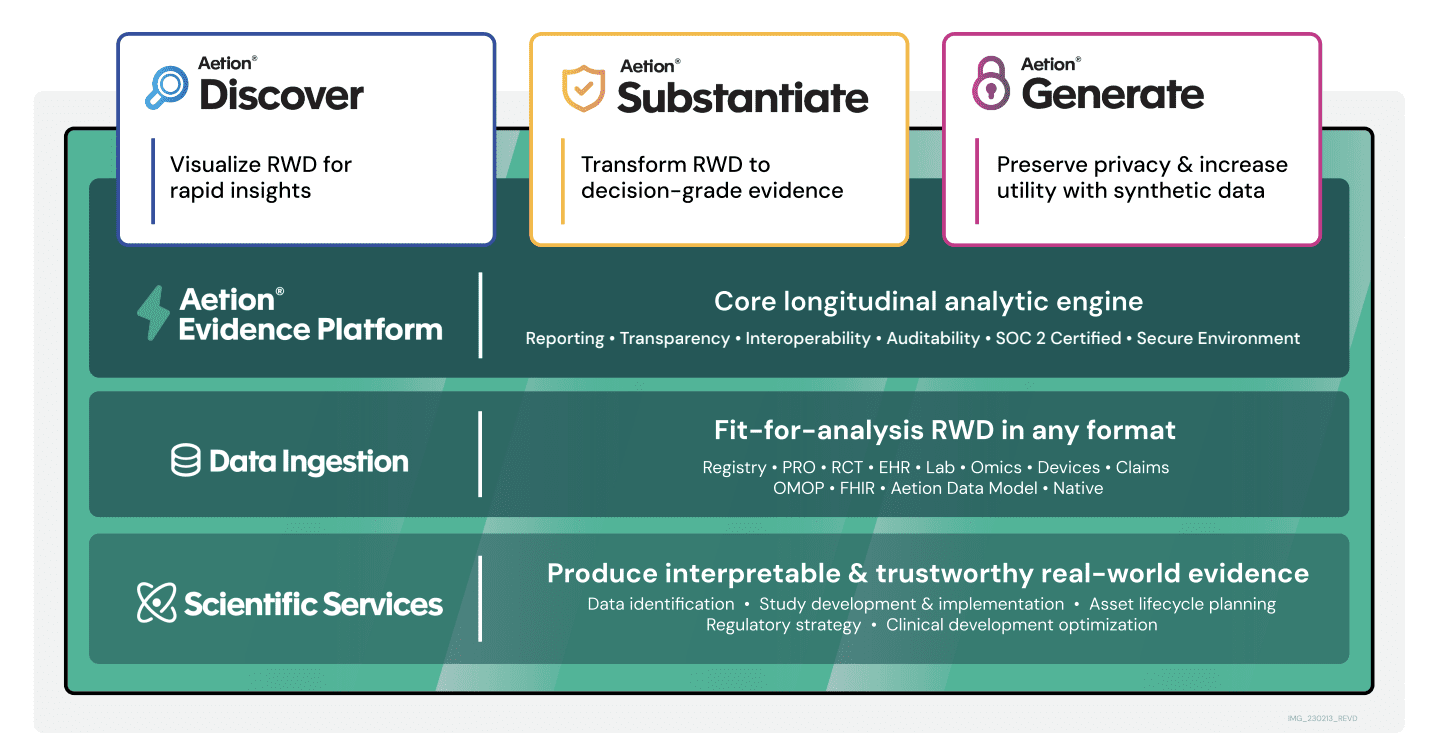

The real-world data collected and derived from patient journeys offers a wealth of insights into patient characteristics and outcomes, as well as into the effectiveness and safety of medical innovations. Researchers ask questions about patient populations in the form of structured queries; however, without the right choice of structured query and deep …

Shared by AWS Machine Learning January 31, 2025

Shared by AWS Machine Learning April 29, 2026