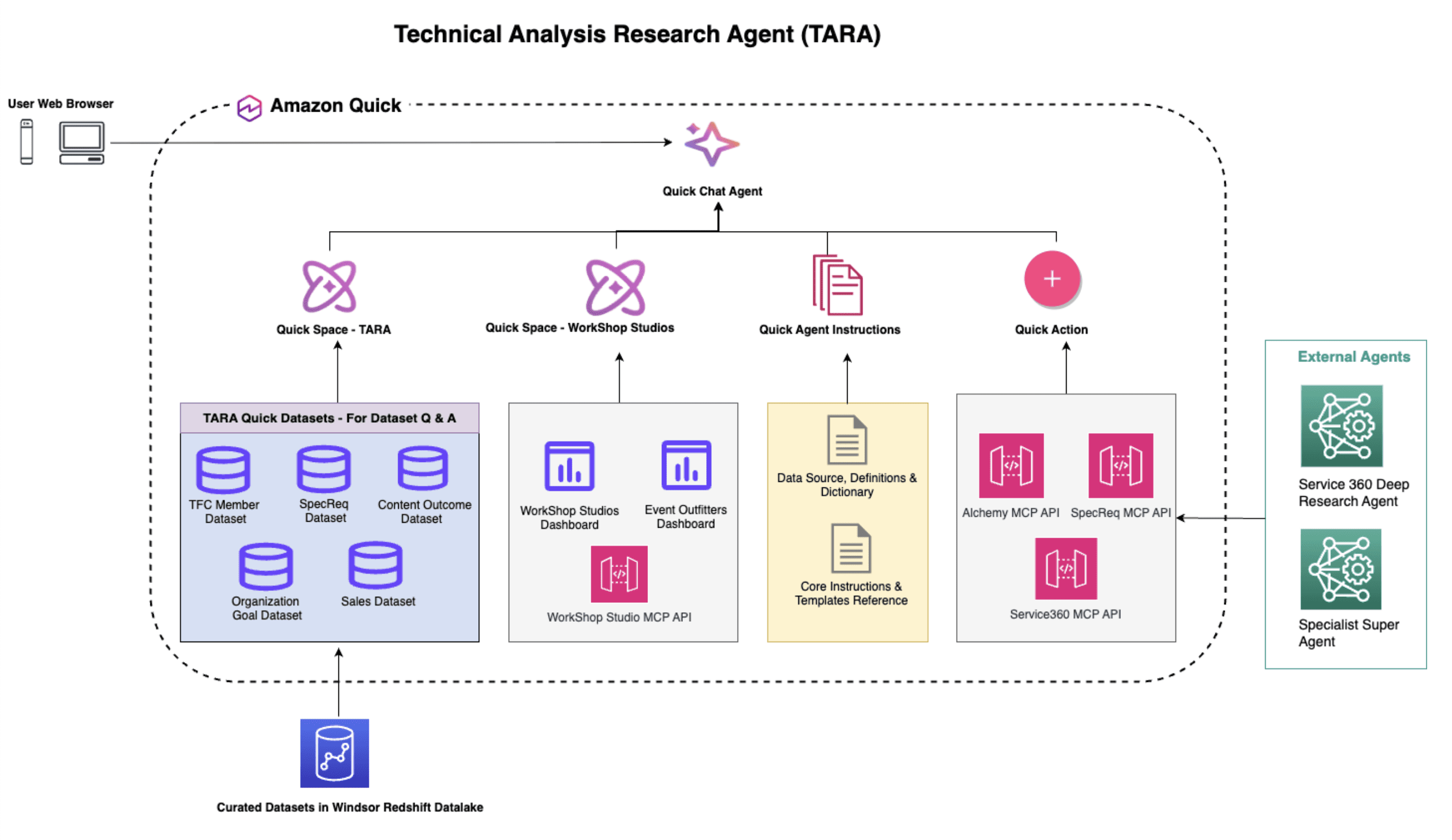

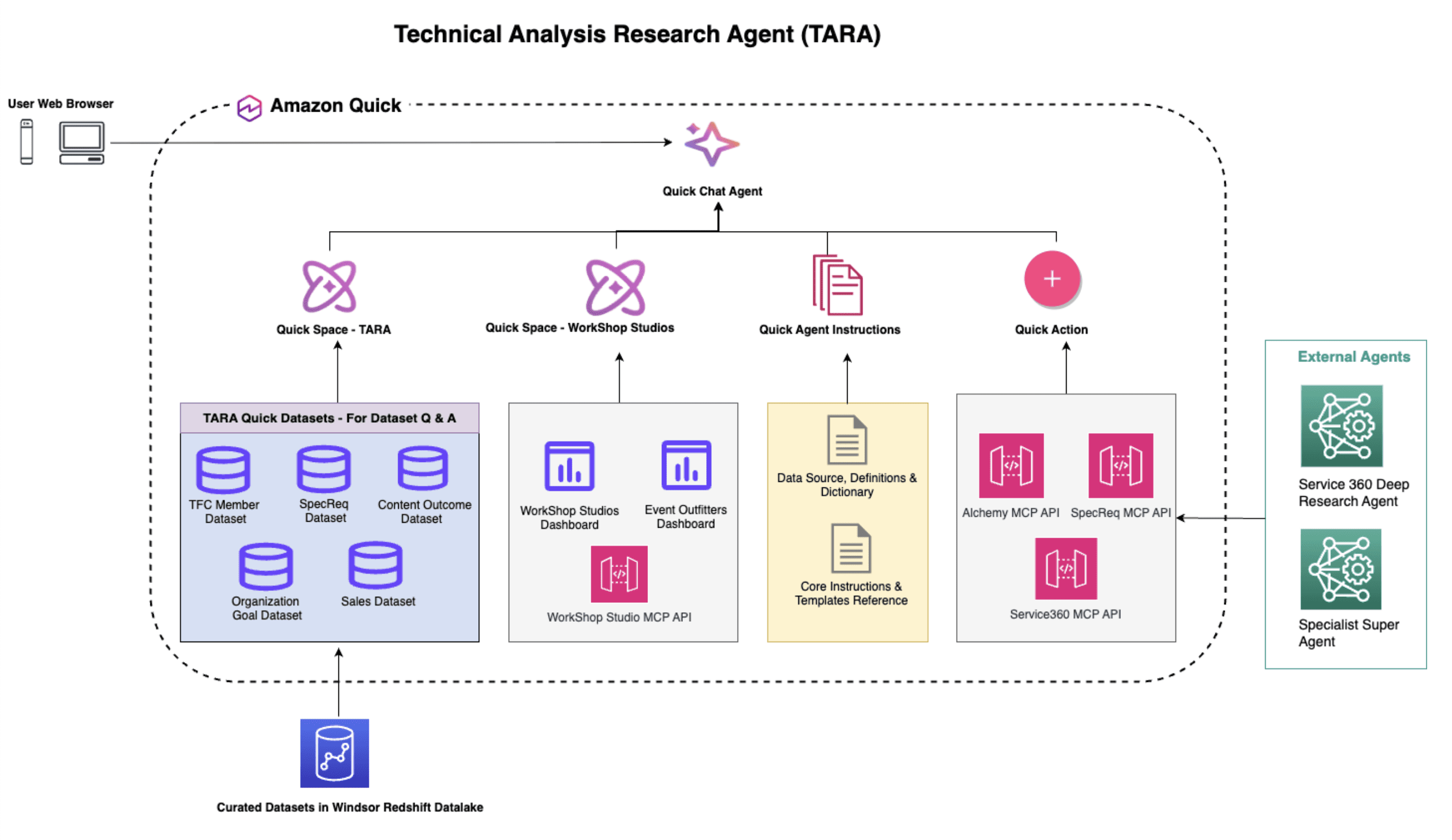

Business leaders across industries rely on operational dashboards as the shared source of truth that their teams execute against daily. But dashboards are built to answer known questions. When teams need to explore further with ad hoc, multi-dimensional, or unforeseen questions, they hit a bottleneck. They wait hours or days for

Shared by AWS Machine Learning May 4, 2026

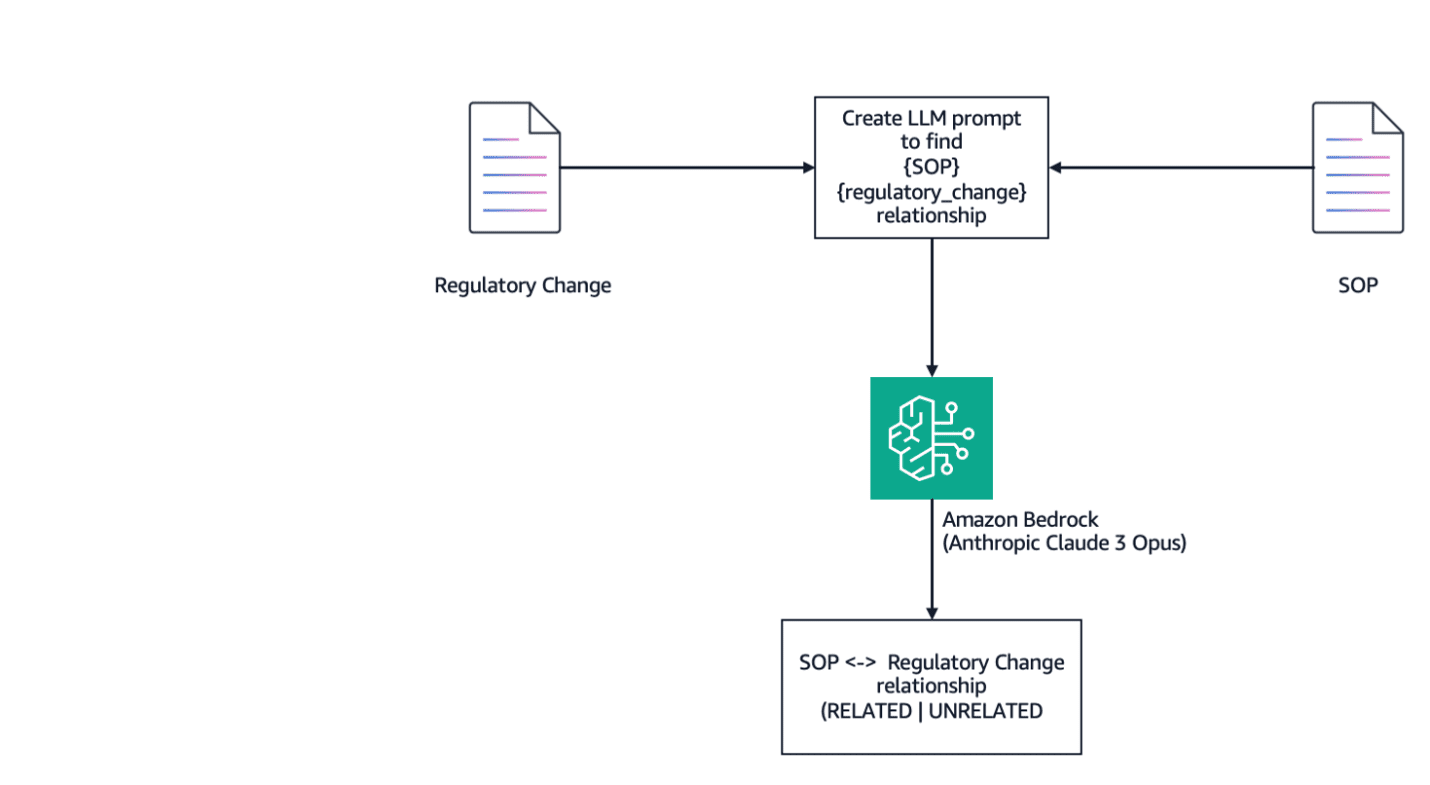

Standard operating procedures (SOPs) are essential documents in the context of regulations and compliance. SOPs outline specific steps for various processes, making sure practices are consistent, efficient, and compliant with regulatory standards. SOP documents typically include key sections such as the title, scope, purpose, responsibilities, procedures, documentation, citations (references),

Shared by AWS Machine Learning June 3, 2025

This blog post is co-written with Heidi Vogel Brockmann and Ronald Brockmann from GuardianGamer. Millions of families face a common challenge: how to keep children safe in online gaming without sacrificing the joy and social connection these games provide. In this post, we share how GuardianGamer, a member of the

Shared by AWS Machine Learning May 28, 2025

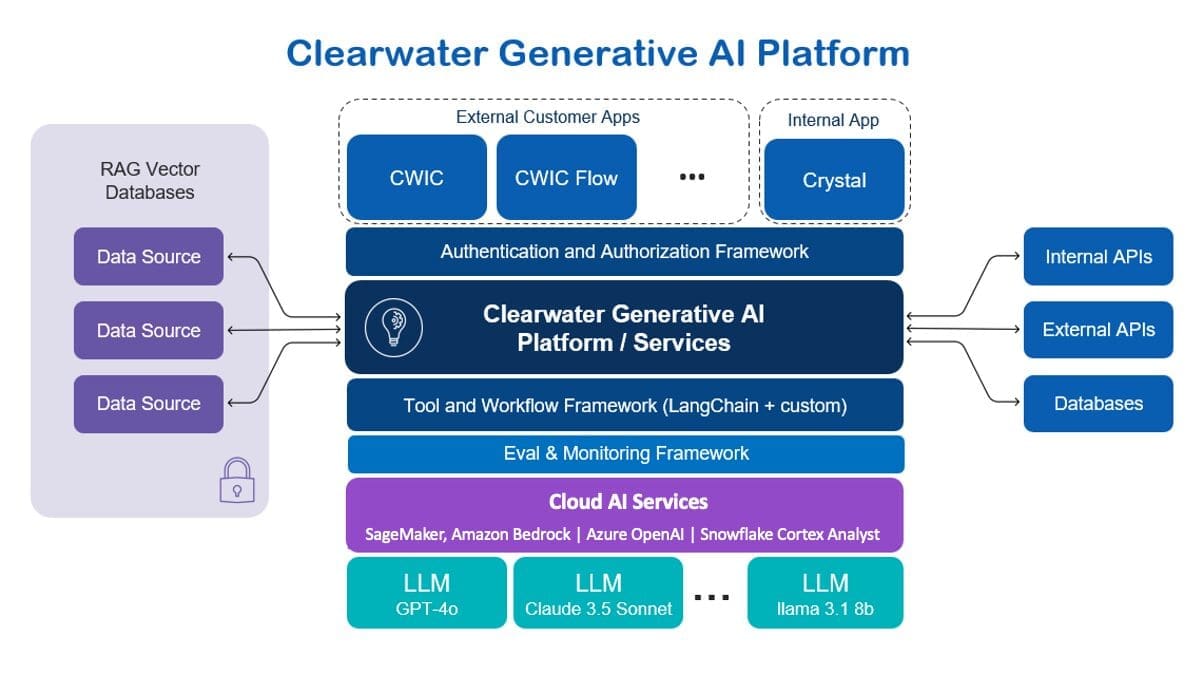

This post was written with Darrel Cherry, Dan Siddall, and Rany ElHousieny of Clearwater Analytics. As global trading volumes rise rapidly each year, capital markets firms are facing the need to manage large and diverse datasets to stay ahead. These datasets aren’t just expansive in volume; they’re critical in

Shared by AWS Machine Learning December 14, 2024

In the dynamic world of streaming on Amazon Music, every search for a song, podcast, or playlist holds a story, a mood, or a flood of emotions waiting to be unveiled. These searches serve as a gateway to new discoveries, cherished experiences, and lasting memories. The search bar is

Shared by AWS Machine Learning November 22, 2023

Linked below is an excellent video on the 2022 KM strategy from the UN Development Programme. Good to see the focus on culture and networks. Original source: nickmilton.com.

MDaudit provides a cloud-based billing compliance and revenue integrity software as a service (SaaS) platform to more than 70,000 healthcare providers and 1,500 healthcare facilities, ensuring healthcare customers maintain regulatory compliance and retain revenue. Working with the top 60+ US healthcare networks, MDaudit needs to be able to scale

Shared by AWS Machine Learning September 27, 2023

In this post, we discuss how United Airlines, in collaboration with the Amazon Machine Learning Solutions Lab, built an active learning framework on AWS to automate the processing of passenger documents. “In order to deliver the best flying experience for our passengers and make our internal business process as

Shared by AWS Machine Learning September 21, 2023

This is a guest post co-written by Julian Blau, Data Scientist at xarvio Digital Farming Solutions; BASF Digital Farming GmbH, and Antonio Rodriguez, AI/ML Specialist Solutions Architect at AWS. xarvio Digital Farming Solutions is a brand from BASF Digital Farming GmbH, which is part of the BASF Agricultural Solutions division.

Shared by AWS Machine Learning December 1, 2022

Yara is the world’s leading crop nutrition company and a provider of environmental and agricultural solutions. Yara’s ambition is focused on growing a nature-positive food future that creates value for customers, shareholders, and society at large, and delivers a more sustainable food value chain. Supporting our vision of a

Shared by AWS Machine Learning November 17, 2022
