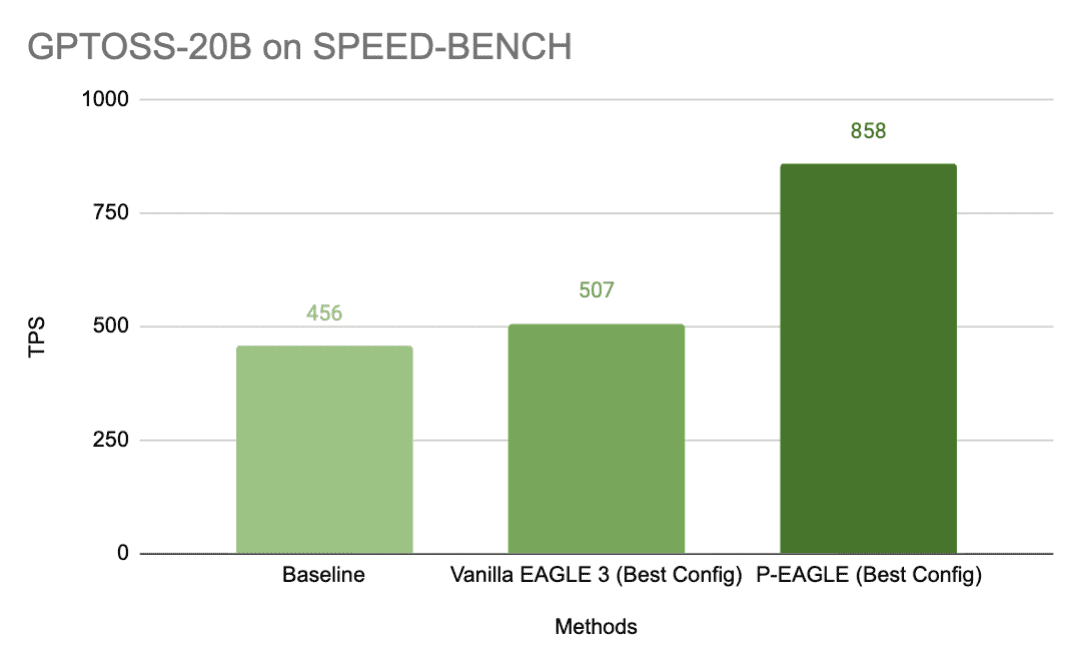

P-EAGLE: Faster LLM inference with Parallel Speculative Decoding in vLLM

EAGLE is the state-of-the-art method for speculative decoding in large language model (LLM) inference, but its autoregressive drafting creates a hidden bottleneck: the more tokens you speculate, the more sequential forward passes the drafter needs. Eventually that overhead eats into your gains. P-EAGLE removes this ceiling by generating…
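The bottleneck described above comes down to counting drafter forward passes. The toy sketch below compares autoregressive drafting, where each of the k draft tokens needs its own sequential pass, with a parallel drafter that proposes all k tokens in one pass. `CountingDrafter`, `draft_autoregressive`, and `draft_parallel` are hypothetical illustrations. They are not vLLM's API or P-EAGLE's actual drafter, and the "predictions" are placeholder arithmetic rather than a real model.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CountingDrafter:
    """Hypothetical toy drafter that records how many forward passes it runs.

    Not vLLM's or P-EAGLE's API; the token "predictions" are placeholders.
    """
    calls: int = 0

    def forward_one(self, context: List[int]) -> int:
        # One forward pass that proposes a single next token.
        self.calls += 1
        return (context[-1] + 1) % 100

    def forward_many(self, context: List[int], k: int) -> List[int]:
        # One forward pass that proposes k tokens at once
        # (assumption standing in for a parallel drafter).
        self.calls += 1
        return [(context[-1] + i) % 100 for i in range(1, k + 1)]


def draft_autoregressive(drafter: CountingDrafter, context: List[int], k: int) -> List[int]:
    """Draft k tokens one at a time: each pass depends on the previous draft."""
    drafted: List[int] = []
    for _ in range(k):
        drafted.append(drafter.forward_one(context + drafted))
    return drafted


def draft_parallel(drafter: CountingDrafter, context: List[int], k: int) -> List[int]:
    """Draft k tokens in a single pass, so the pass count does not grow with k."""
    return drafter.forward_many(context, k)


if __name__ == "__main__":
    for k in (2, 4, 8):
        ar, par = CountingDrafter(), CountingDrafter()
        draft_autoregressive(ar, [5], k)
        draft_parallel(par, [5], k)
        print(f"k={k}: autoregressive passes={ar.calls}, parallel passes={par.calls}")
```

In this toy model the autoregressive drafter's pass count grows linearly with k while the parallel drafter's stays at one, which is the scaling difference the paragraph describes; real speedups also depend on acceptance rates and per-pass cost, which the sketch ignores.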

Shared by AWS Machine Learning March 13, 2026