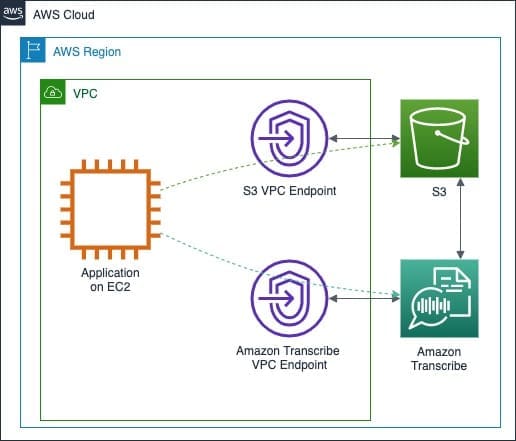

Best practices for building secure applications with Amazon Transcribe

Amazon Transcribe is an AWS service that allows customers to convert speech to text in either batch or streaming mode. It uses machine learning–powered automatic speech recognition (ASR), automatic language identification, and post-processing technologies. Amazon Transcribe can be used for transcription of customer care calls, multiparty conference calls, and

Shared by AWS Machine Learning March 26, 2024
