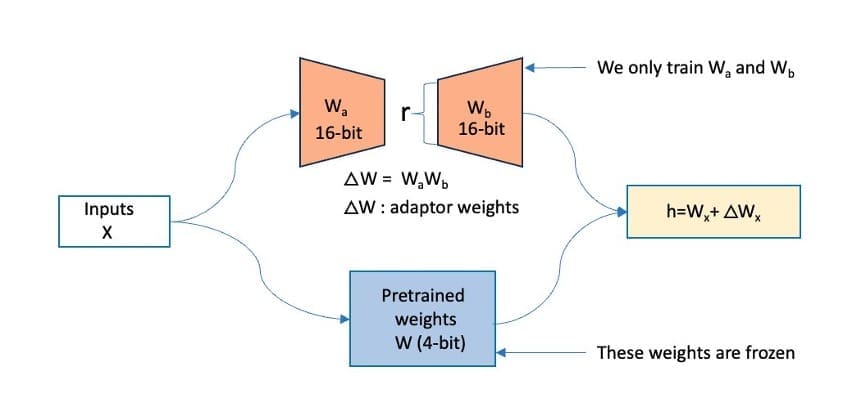

Companies across various scales and industries are using large language models (LLMs) to develop generative AI applications that provide innovative experiences for customers and employees. However, building or fine-tuning these pre-trained LLMs on extensive datasets demands substantial computational resources and engineering effort. With the increase in sizes of these...

Shared by AWS Machine Learning November 23, 2024

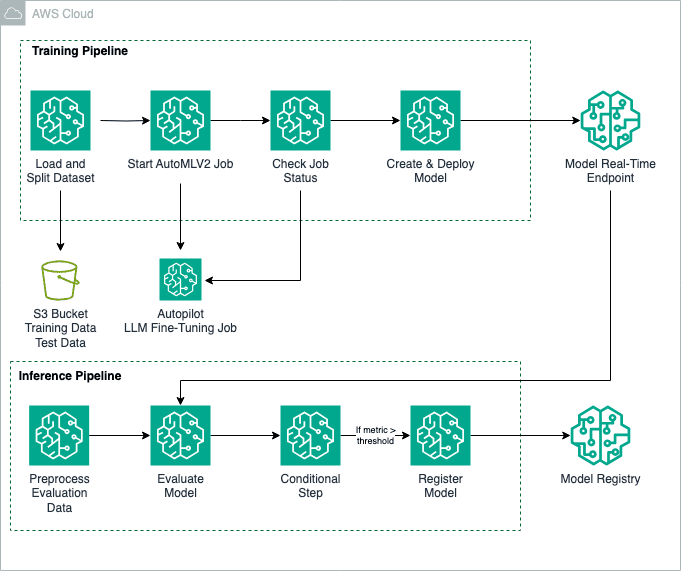

Fine-tuning foundation models (FMs) is a process that involves exposing a pre-trained FM to task-specific data and fine-tuning its parameters. It can then develop a deeper understanding and produce more accurate and relevant outputs for that particular domain. In this post, we show how to use an Amazon SageMaker...

Shared by AWS Machine Learning November 22, 2024

Today, we’re excited to share the journey of VW, an innovator in the automotive industry and Europe’s largest car maker, to enhance knowledge management by using generative AI, Amazon Bedrock, and Amazon Kendra to devise a solution based on Retrieval Augmented Generation (RAG) that makes internal information more easily accessible...

Shared by AWS Machine Learning November 22, 2024

Amazon Bedrock is a fully managed service that offers a choice of high-performing foundation models (FMs) from leading AI companies like AI21 Labs, Anthropic, Cohere, Meta, Mistral AI, Stability AI, and Amazon through a single API, along with a broad set of capabilities to build generative AI applications with...

Shared by AWS Machine Learning November 22, 2024

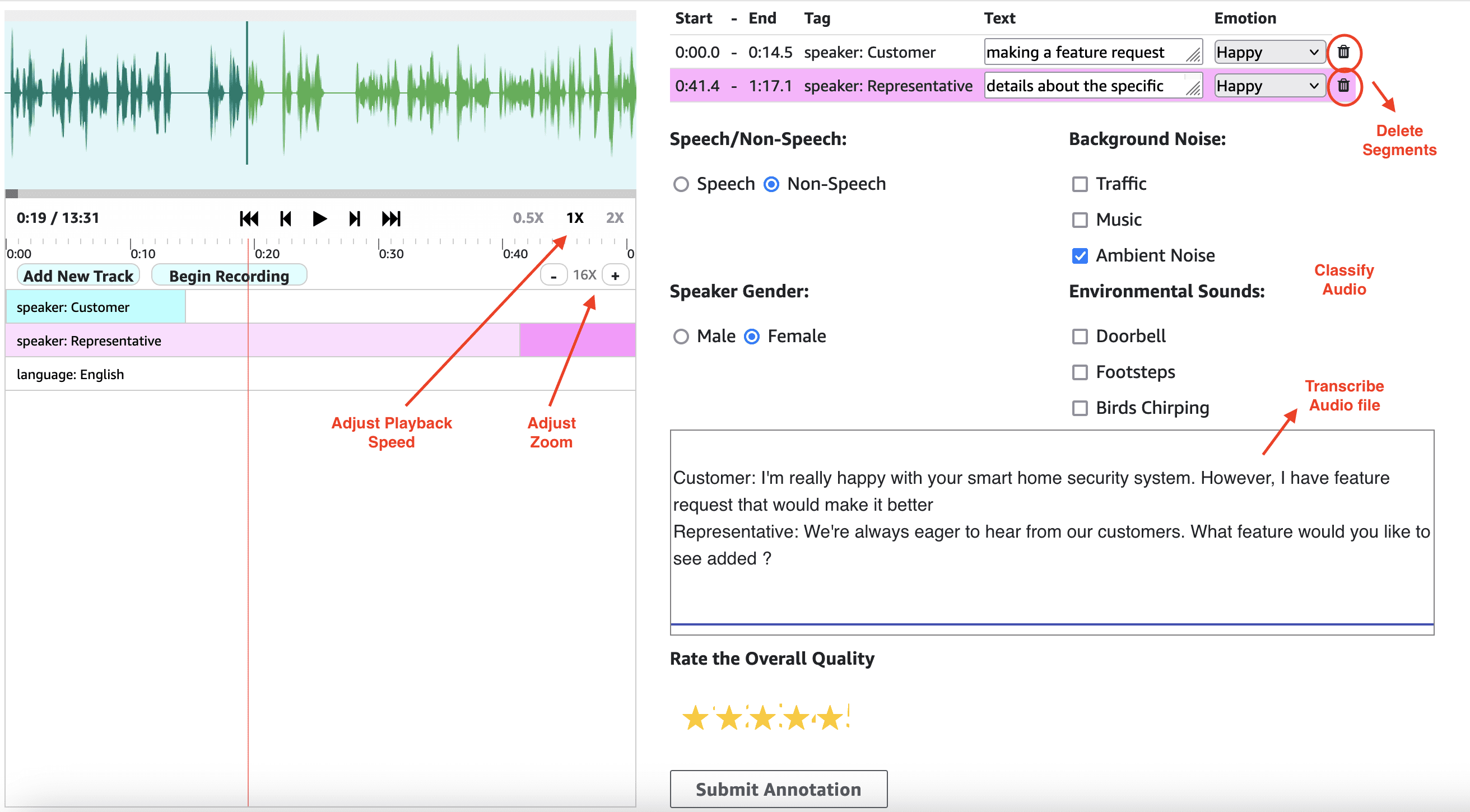

As generative AI models advance in creating multimedia content, the difference between good and great output often lies in the details that only human feedback can capture. Audio and video segmentation provides a structured way to gather this detailed feedback, allowing models to learn through reinforcement learning from human...

Shared by AWS Machine Learning November 22, 2024

Google.org announced the 80 organizations that will receive funding and support from the AI Opportunity Fund: Europe to help 20,000 people learn AI skills. Source: blog.google/technology/ai/

Large language models (LLMs) are AI systems trained on vast amounts of text data, enabling them to understand, generate, and reason with natural language in highly capable and flexible ways. LLM training has seen remarkable advances in recent years, with organizations pushing the boundaries of what’s possible in terms...

Shared by AWS Machine Learning November 21, 2024

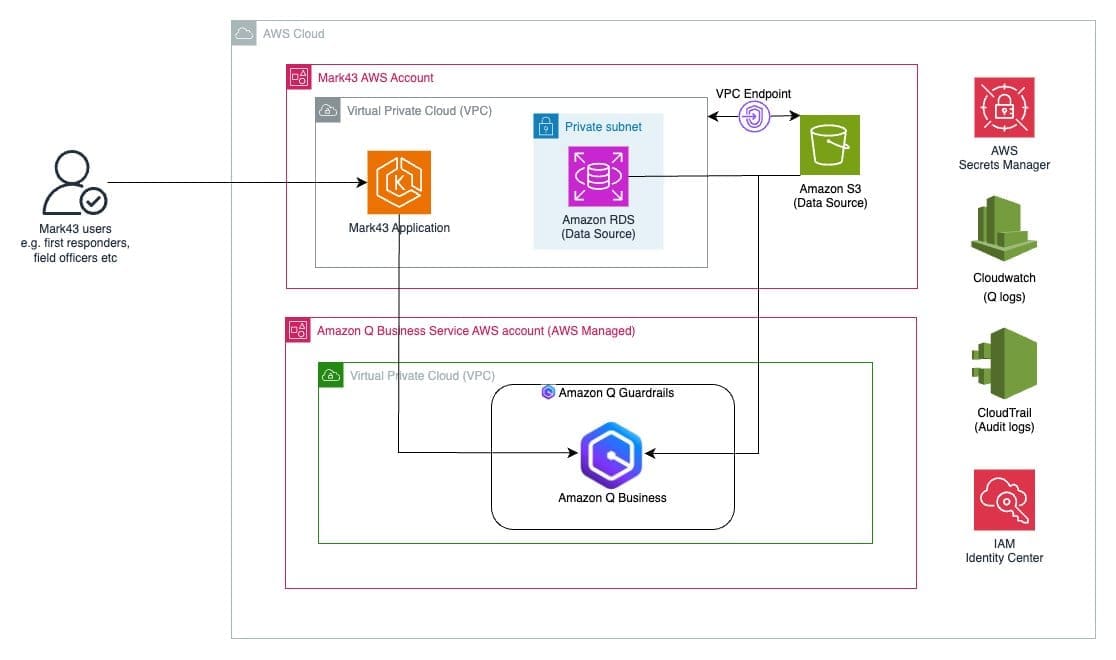

This post is co-written with Lawrence Zorio III from Mark43. Public safety organizations face the challenge of accessing and analyzing vast amounts of data quickly while maintaining strict security protocols. First responders need immediate access to relevant data across multiple systems, while command staff require rapid insights for operational decisions.

Shared by AWS Machine Learning November 21, 2024

Retrieval Augmented Generation (RAG) has become a crucial technique for improving the accuracy and relevance of AI-generated responses. The effectiveness of RAG heavily depends on the quality of context provided to the large language model (LLM), which is typically retrieved from vector stores based on user queries. The relevance...

Shared by AWS Machine Learning November 21, 2024

Email remains a vital communication channel for business customers, especially in HR, where responding to inquiries can use up staff resources and cause delays. The extensive knowledge required can make it overwhelming to respond to email inquiries manually. In the future, high automation will play a crucial role in...

Shared by AWS Machine Learning November 21, 2024

Shared by AWS Machine Learning November 23, 2024
