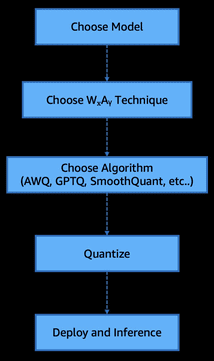

Foundation models (FMs) and large language models (LLMs) have been rapidly scaling, often doubling in parameter count within months, leading to significant improvements in language understanding and generative capabilities. This rapid growth comes with steep costs: inference now requires enormous memory capacity, high-performance GPUs, and substantial energy consumption. This

Shared by AWS Machine Learning January 10, 2026

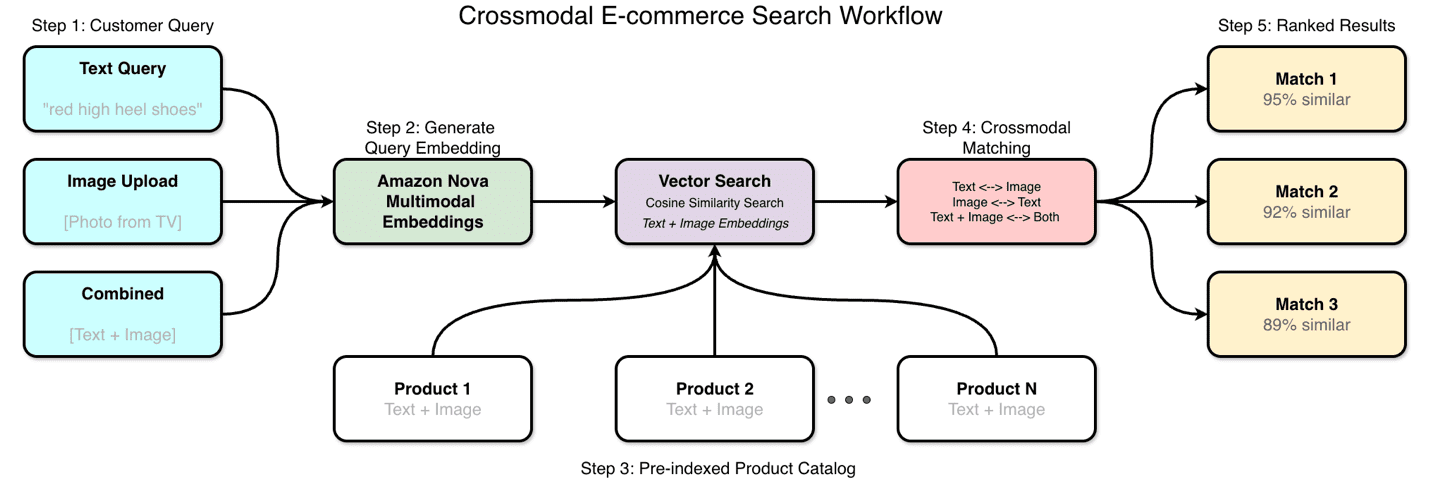

Amazon Nova Multimodal Embeddings processes text, documents, images, video, and audio through a single model architecture. Available through Amazon Bedrock, the model converts different input modalities into numerical embeddings within the same vector space, supporting direct similarity calculations regardless of content type. We developed this unified model to reduce

Shared by AWS Machine Learning January 10, 2026

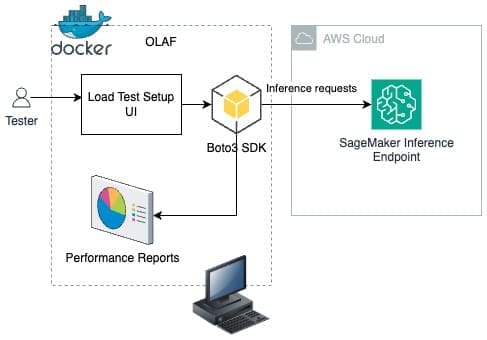

This post is cowritten with Aashraya Sachdeva from Observe.ai. You can use Amazon SageMaker to build, train, and deploy machine learning (ML) models, including large language models (LLMs) and other foundation models (FMs). This helps you significantly reduce the time required for a range of generative AI and ML

Shared by AWS Machine Learning January 8, 2026

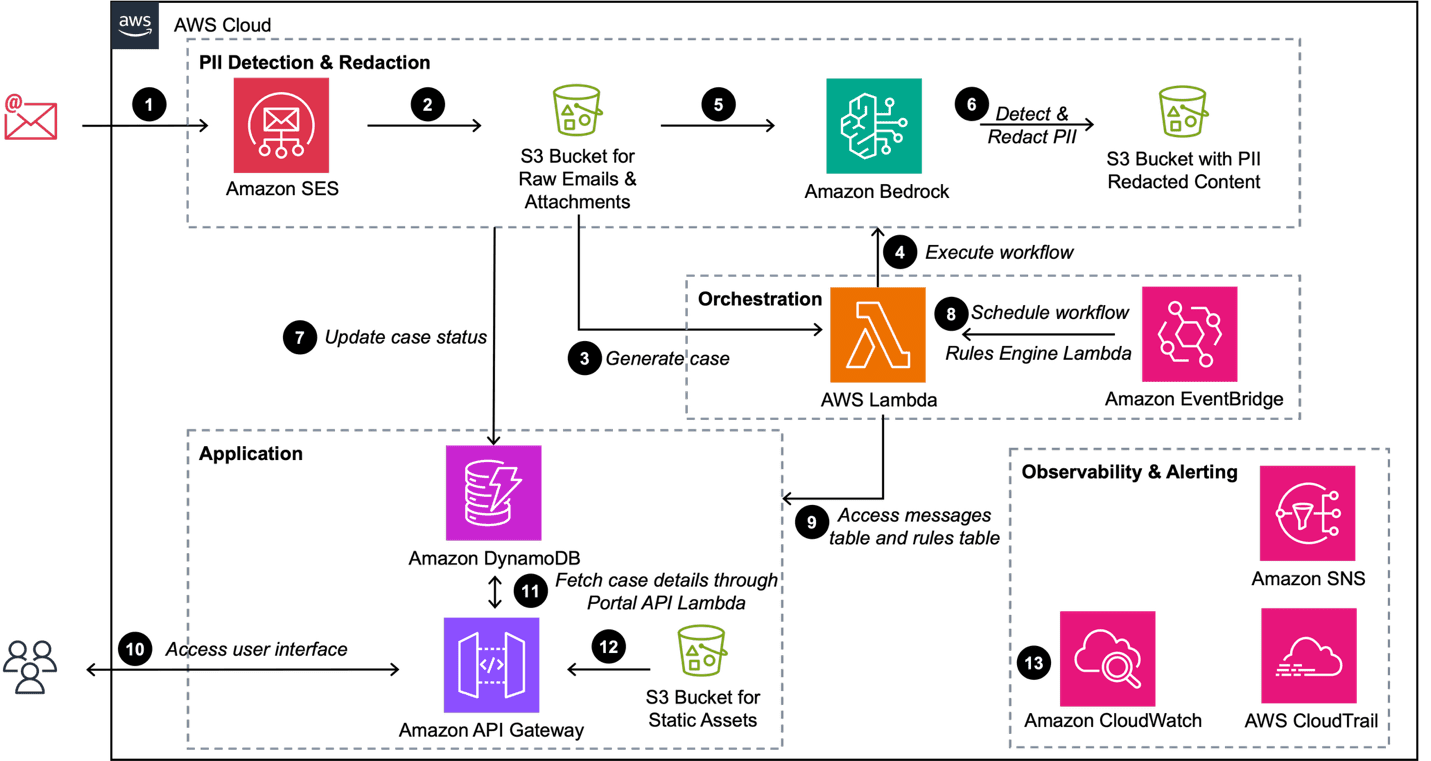

Organizations handle vast amounts of sensitive customer information through various communication channels. Protecting personally identifiable information (PII), such as Social Security numbers (SSNs), driver’s license numbers, and phone numbers, has become increasingly critical for maintaining compliance with data privacy regulations and building customer trust. However, manually reviewing and redacting

Shared by AWS Machine Learning January 8, 2026

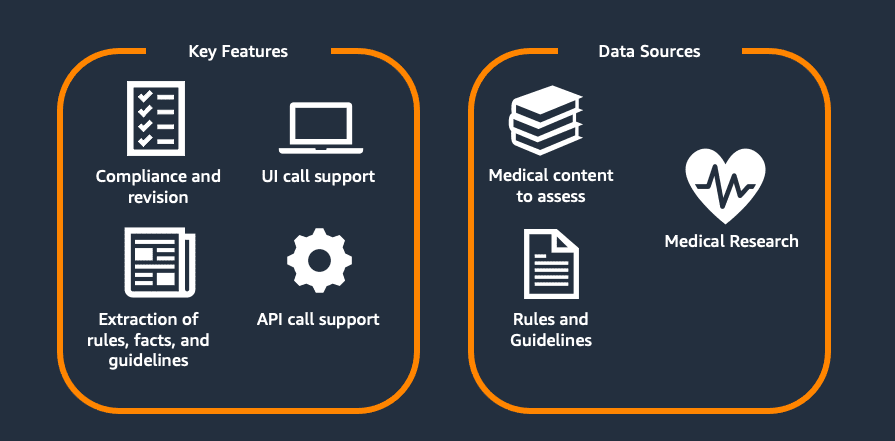

This blog post is based on work co-developed with Flo Health. Healthcare science is rapidly advancing. Maintaining accurate and up-to-date medical content directly impacts people’s lives, health decisions, and well-being. When someone searches for health information, they are often at their most vulnerable, making accuracy not just important, but

Shared by AWS Machine Learning January 8, 2026

The Open Source Initiative (OSI) is pleased to welcome the Open Source Technology Improvement Fund (OSTIF) to the Open Policy Alliance. The Open Policy Alliance (OPA) was started in 2023 to bring together nonprofit Open Source community members to better understand and contribute to the changing landscape of public

Shared by voicesofopensource January 8, 2026

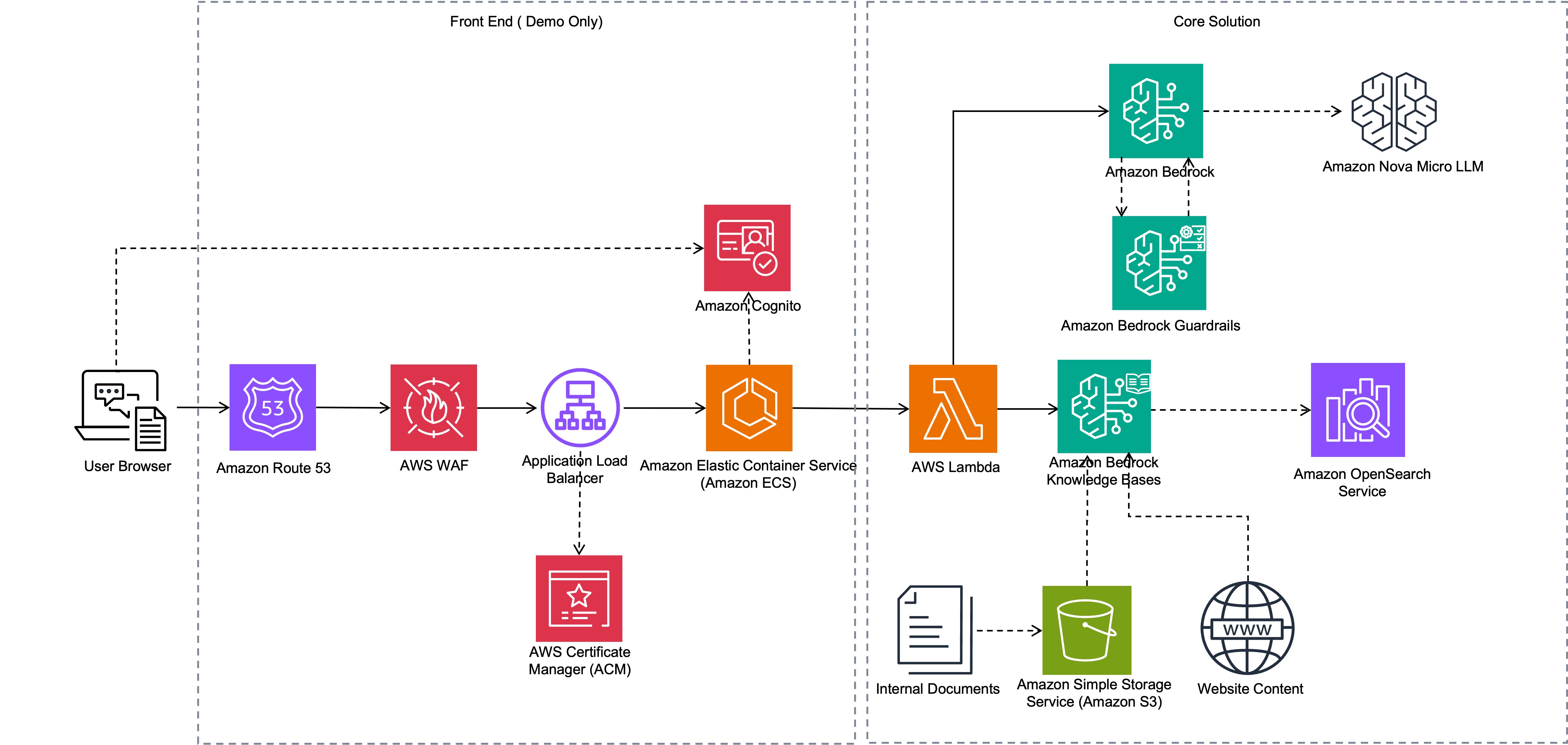

Businesses face a growing challenge: customers need answers fast, but support teams are overwhelmed. Support documentation like product manuals and knowledge base articles typically require users to search through hundreds of pages, and support agents often run 20–30 customer queries per day to locate specific information. This post demonstrates

Shared by AWS Machine Learning December 29, 2025

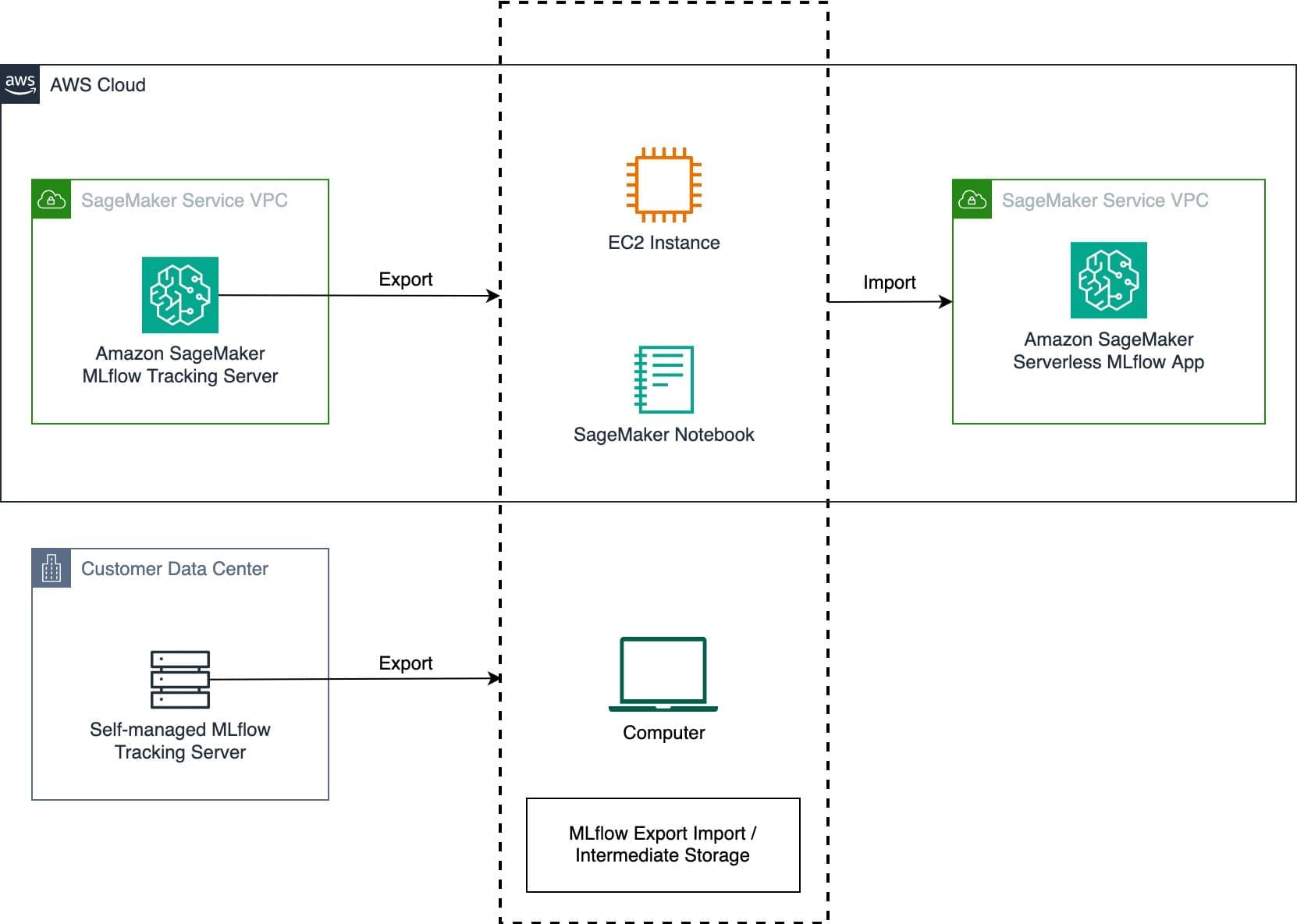

Operating a self-managed MLflow tracking server comes with administrative overhead, including server maintenance and resource scaling. As teams scale their ML experimentation, efficiently managing resources during peak usage and idle periods is a challenge. Organizations running MLflow on Amazon EC2 or on-premises can optimize costs and engineering resources by

Shared by AWS Machine Learning December 29, 2025

Here are Google’s latest AI updates from December 2025. Original source: blog.google/technology/ai/

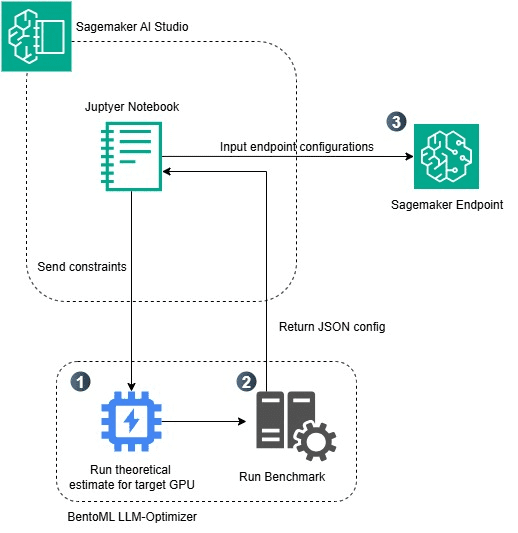

The rise of powerful large language models (LLMs) that can be consumed via API calls has made it remarkably straightforward to integrate artificial intelligence (AI) capabilities into applications. Yet despite this convenience, a significant number of enterprises are choosing to self-host their own models—accepting the complexity of infrastructure management,

Shared by AWS Machine Learning December 24, 2025
