Benchmark and optimize endpoint deployment in Amazon SageMaker JumpStart

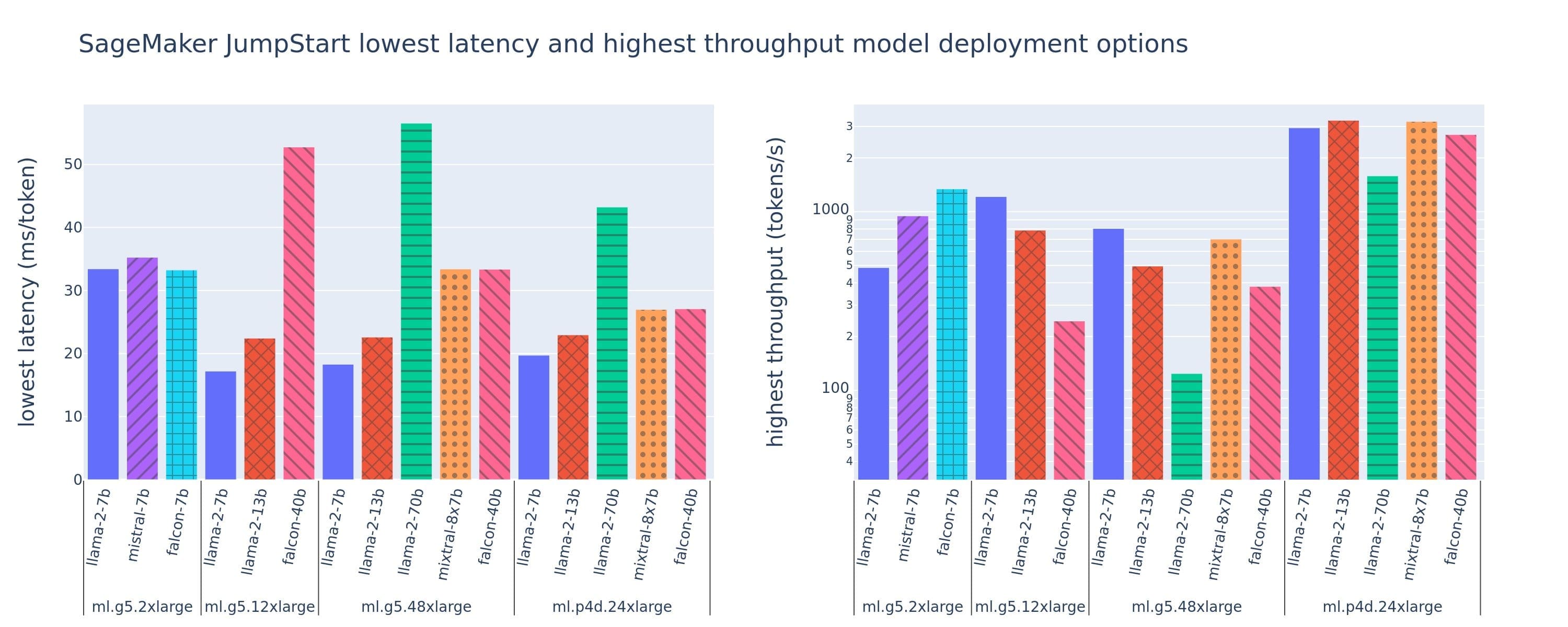

When deploying a large language model (LLM), machine learning (ML) practitioners typically care about two measurements for model serving performance: latency, defined as the time it takes to generate a single token, and throughput, defined as the number of tokens generated per second. Although a single request to the…
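The following minimal Python sketch shows one way to compute both metrics from timestamps that a client might record while streaming tokens from an endpoint. The `RequestTiming` record and the `per_token_latency` and `throughput` helpers are hypothetical. They are not part of the SageMaker or JumpStart SDKs. Averaging each request's end-to-end time over its generated tokens is also an assumption, and only one of several ways to operationalize per-token latency.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class RequestTiming:
    """Hypothetical record of one streamed request (all times in seconds)."""
    start: float              # when the client sent the request
    token_times: list[float]  # arrival time of each generated token


def per_token_latency(t: RequestTiming) -> float:
    """Average seconds per generated token for a single request.

    Assumption: end-to-end time (including time to first token)
    divided by the number of tokens generated.
    """
    if not t.token_times:
        raise ValueError("request produced no tokens")
    return (t.token_times[-1] - t.start) / len(t.token_times)


def throughput(timings: list[RequestTiming]) -> float:
    """Tokens per second across all requests in one benchmark window.

    Concurrent requests overlap in time, so total tokens are divided by
    the span from the earliest request start to the last token received.
    """
    if not timings or any(not t.token_times for t in timings):
        raise ValueError("every request must produce at least one token")
    total_tokens = sum(len(t.token_times) for t in timings)
    window_start = min(t.start for t in timings)
    window_end = max(t.token_times[-1] for t in timings)
    return total_tokens / (window_end - window_start)


if __name__ == "__main__":
    # Two illustrative concurrent requests, four tokens each (made-up timings).
    r1 = RequestTiming(start=0.0, token_times=[0.1, 0.2, 0.3, 0.4])
    r2 = RequestTiming(start=0.0, token_times=[0.15, 0.3, 0.45, 0.6])
    avg_latency = mean(per_token_latency(r) for r in (r1, r2))
    print(f"mean per-token latency: {avg_latency:.3f} s")
    print(f"throughput: {throughput([r1, r2]):.1f} tokens/s")
```

Latency stays a per-request figure, while throughput is aggregated over a shared time window. With the two illustrative requests above (eight tokens finishing within 0.6 s), the sketch reports roughly 13.3 tokens per second.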

Shared by AWS Machine Learning January 29, 2024