Members Newsletter – December 2024

Our work thrives because of a passionate community dedicated to Open Source values. From advancing initiatives like the Open Source AI Definition to addressing challenges in licensing and policy, collaboration has always been our driving force. While 2024 brought significant progress, the challenges ahead

Shared by voicesofopensource December 3, 2024

From a powerful new AI flood-forecasting initiative to AI's role in advancing quantum computers. View the original source (blog.google/technology/ai/).

The Open Source community underpins much of today’s software innovation, but with this power comes responsibility. Security vulnerabilities, unclear licensing, and a lack of transparency in software components pose significant risks to software supply chains. Recognizing this challenge, GitHub recently announced the GitHub Secure Open Source Fund, a transformative initiative

Shared by voicesofopensource December 3, 2024

Today, the Accounts Payable (AP) and Accounts Receivable (AR) analysts in Amazon Finance operations receive queries from customers through email, cases, internal tools, or phone. When a query arises, analysts must engage in a time-consuming process of reaching out to subject matter experts (SMEs) and going through multiple policy

Shared by AWS Machine Learning December 3, 2024

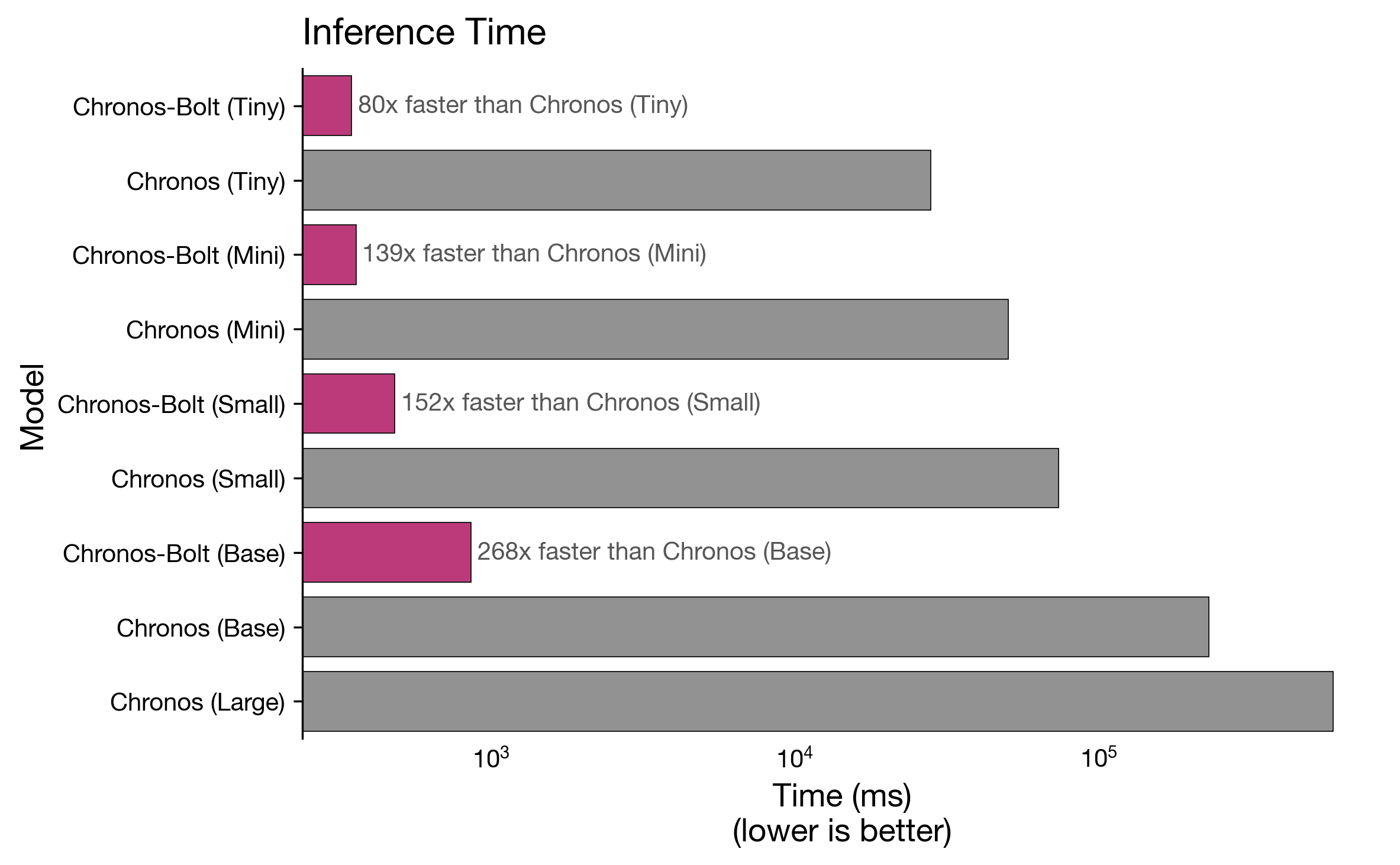

Chronos-Bolt is the newest addition to AutoGluon-TimeSeries, delivering accurate zero-shot forecasting up to 250 times faster than the original Chronos models [1]. Time series forecasting plays a vital role in guiding key business decisions across industries such as retail, energy, finance, and healthcare. Traditionally, forecasting has relied on statistical

Shared by AWS Machine Learning December 3, 2024

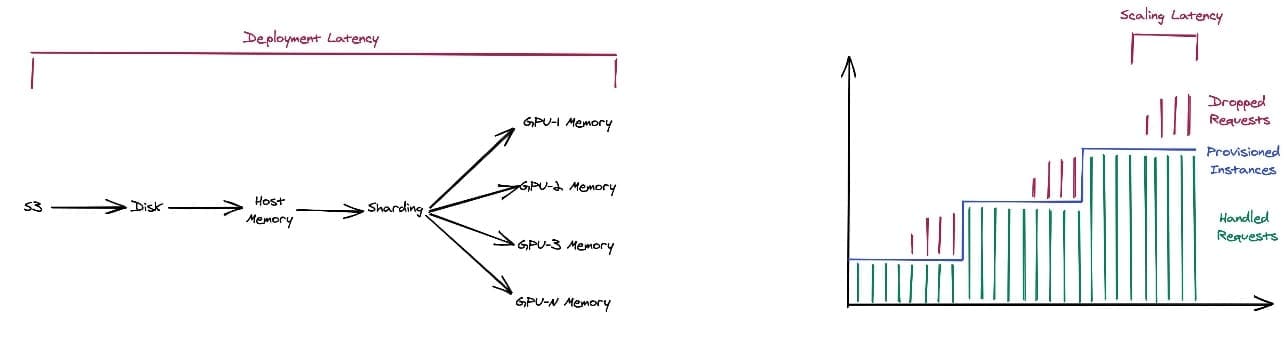

In Part 1 of this series, we introduced Amazon SageMaker Fast Model Loader, a new capability in Amazon SageMaker that significantly reduces the time required to deploy and scale large language models (LLMs) for inference. We discussed how this innovation addresses one of the major bottlenecks in LLM deployment: the

Shared by AWS Machine Learning December 3, 2024

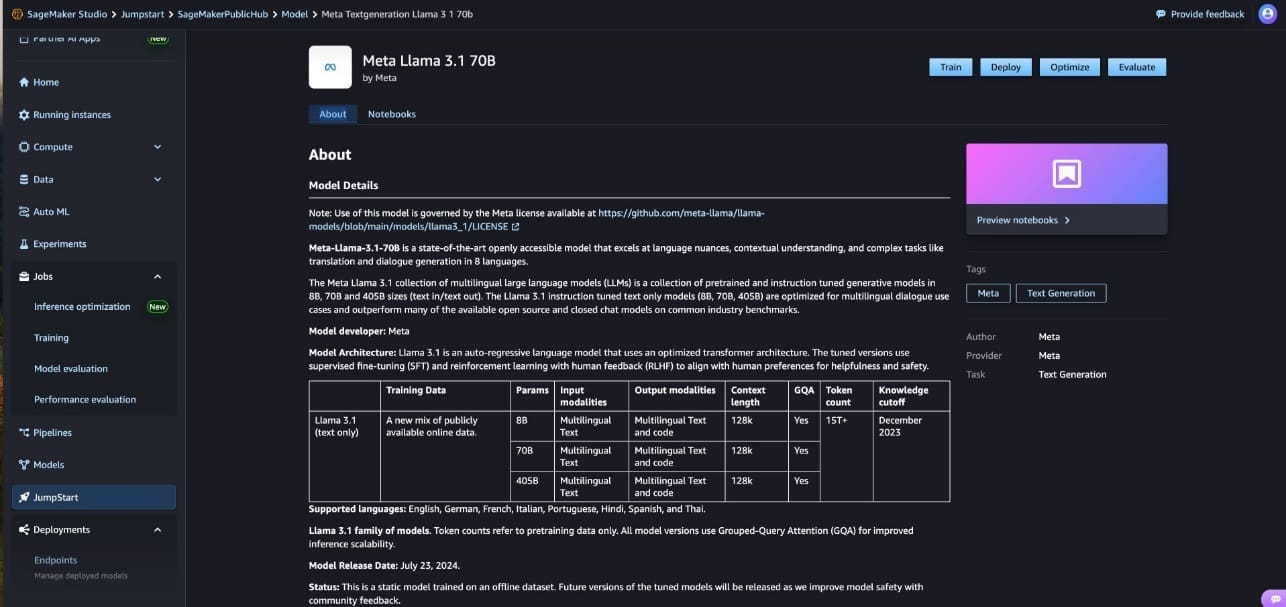

The generative AI landscape has been rapidly evolving, with large language models (LLMs) at the forefront of this transformation. These models have grown exponentially in size and complexity, with some now containing hundreds of billions of parameters and requiring hundreds of gigabytes of memory. As LLMs continue to expand,

Shared by AWS Machine Learning December 3, 2024

Today at AWS re:Invent 2024, we are excited to announce the new Container Caching capability in Amazon SageMaker, which significantly reduces the time required to scale generative AI models for inference. This innovation allows you to scale your models faster, with up to a 56% reduction in latency when scaling

Shared by AWS Machine Learning December 3, 2024

Today at AWS re:Invent 2024, we are excited to announce a new feature for Amazon SageMaker inference endpoints: the ability to scale SageMaker inference endpoints to zero instances. This long-awaited capability is a game changer for our customers using the power of AI and machine learning (ML) inference in

Shared by AWS Machine Learning December 3, 2024

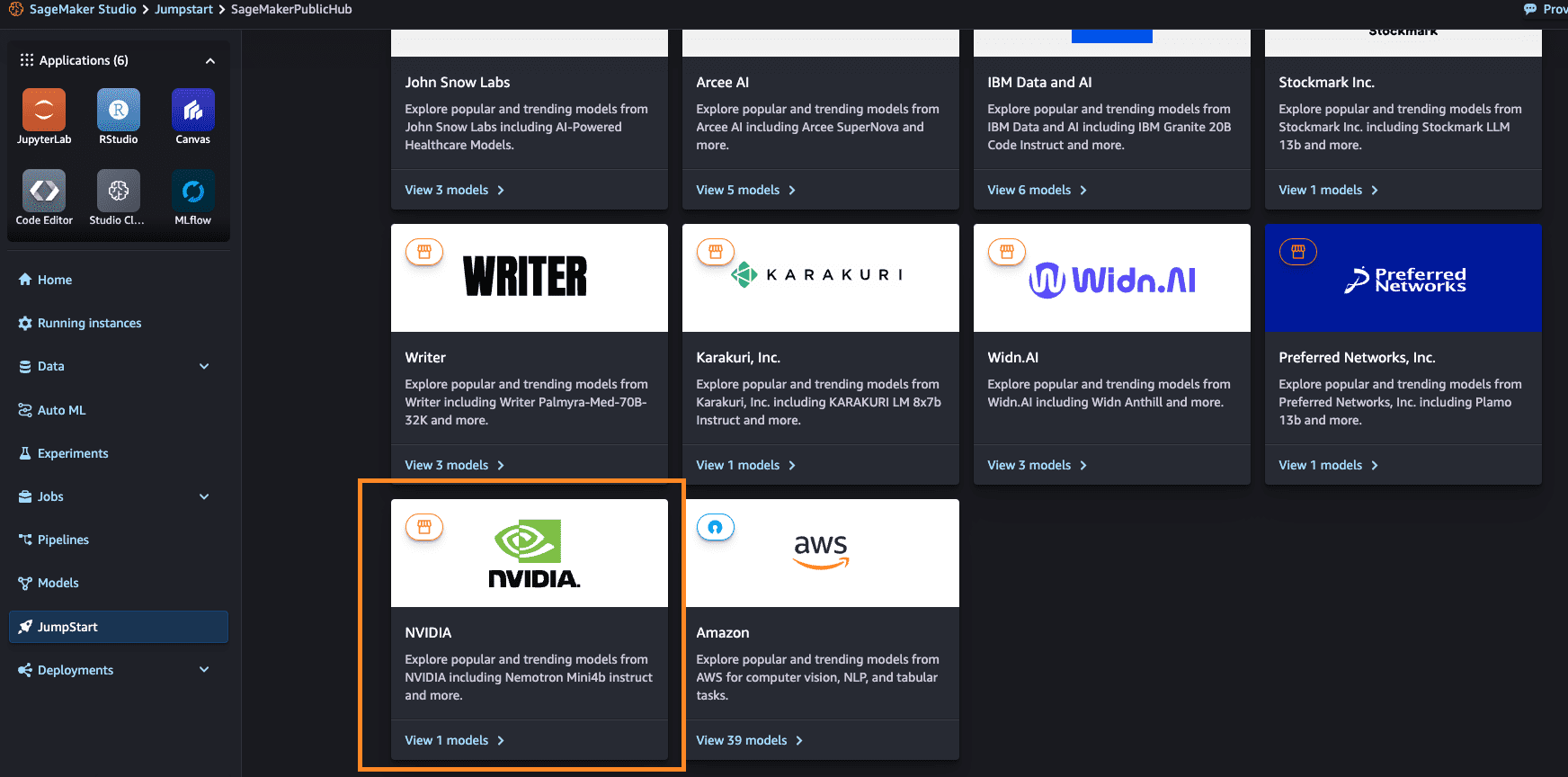

This post is co-written with Abhishek Sawarkar, Eliuth Triana, Jiahong Liu, and Kshitiz Gupta from NVIDIA. At re:Invent 2024, we are excited to announce new capabilities to speed up your AI inference workloads with NVIDIA accelerated computing and software offerings on Amazon SageMaker. These advancements build upon our collaboration

Shared by AWS Machine Learning December 3, 2024

Shared by voicesofopensource December 3, 2024