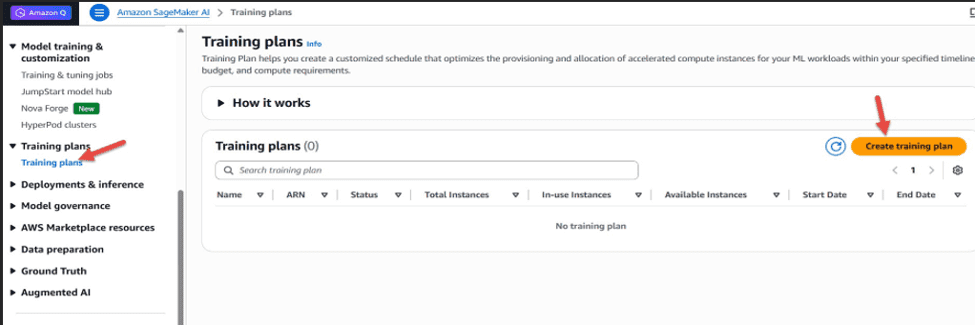

Deploying large language models (LLMs) for inference requires reliable GPU capacity, especially during critical evaluation periods, limited-duration production testing, or burst workloads. Capacity constraints can delay deployments and impact application performance. Customers can use Amazon SageMaker AI training plans to reserve compute capacity for specified time periods. Originally designed…

Shared by AWS Machine Learning March 28, 2026

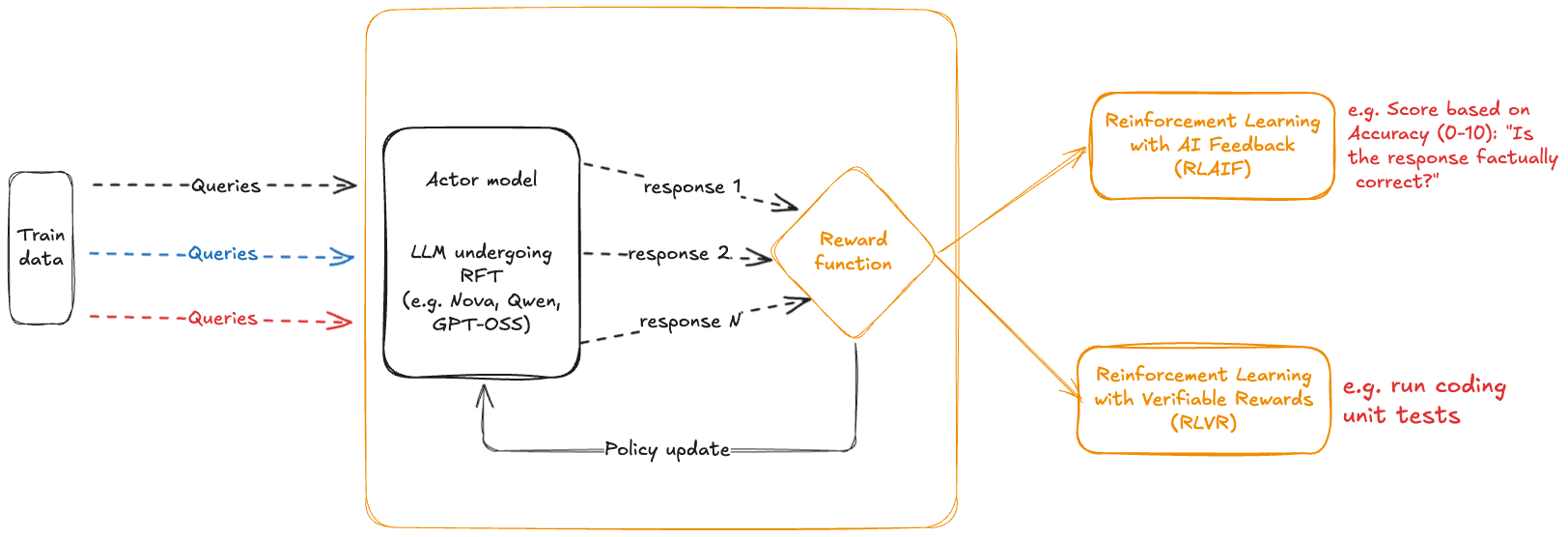

In December 2025, we announced the availability of reinforcement fine-tuning (RFT) on Amazon Bedrock, starting with support for Nova models. This was followed by extended support for open-weight models such as OpenAI GPT OSS 20B and Qwen 3 32B in February 2026. RFT in Amazon Bedrock automates the…

Shared by AWS Machine Learning March 28, 2026

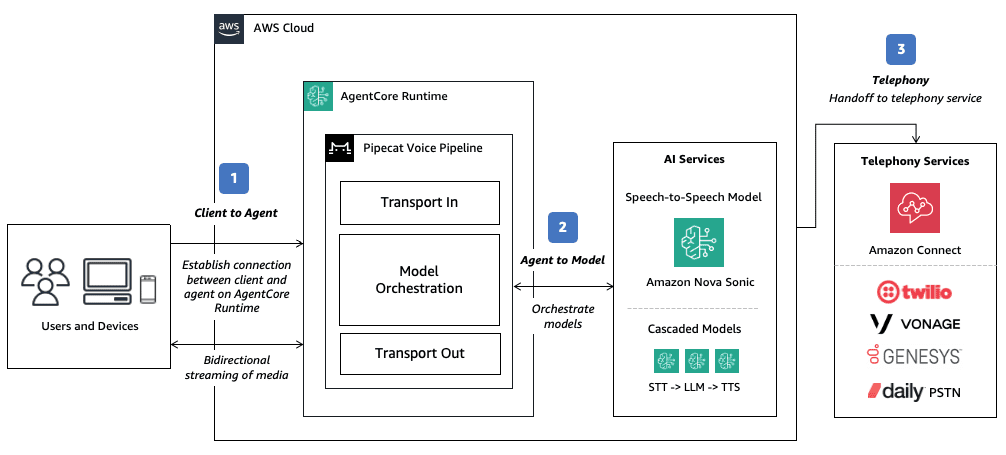

This post is a collaboration between AWS and Pipecat. Deploying intelligent voice agents that maintain natural, human-like conversations requires streaming to users where they are, across web, mobile, and phone channels, even under heavy traffic and unreliable network conditions. Even small delays can break the conversational flow, causing users…

Shared by AWS Machine Learning March 28, 2026

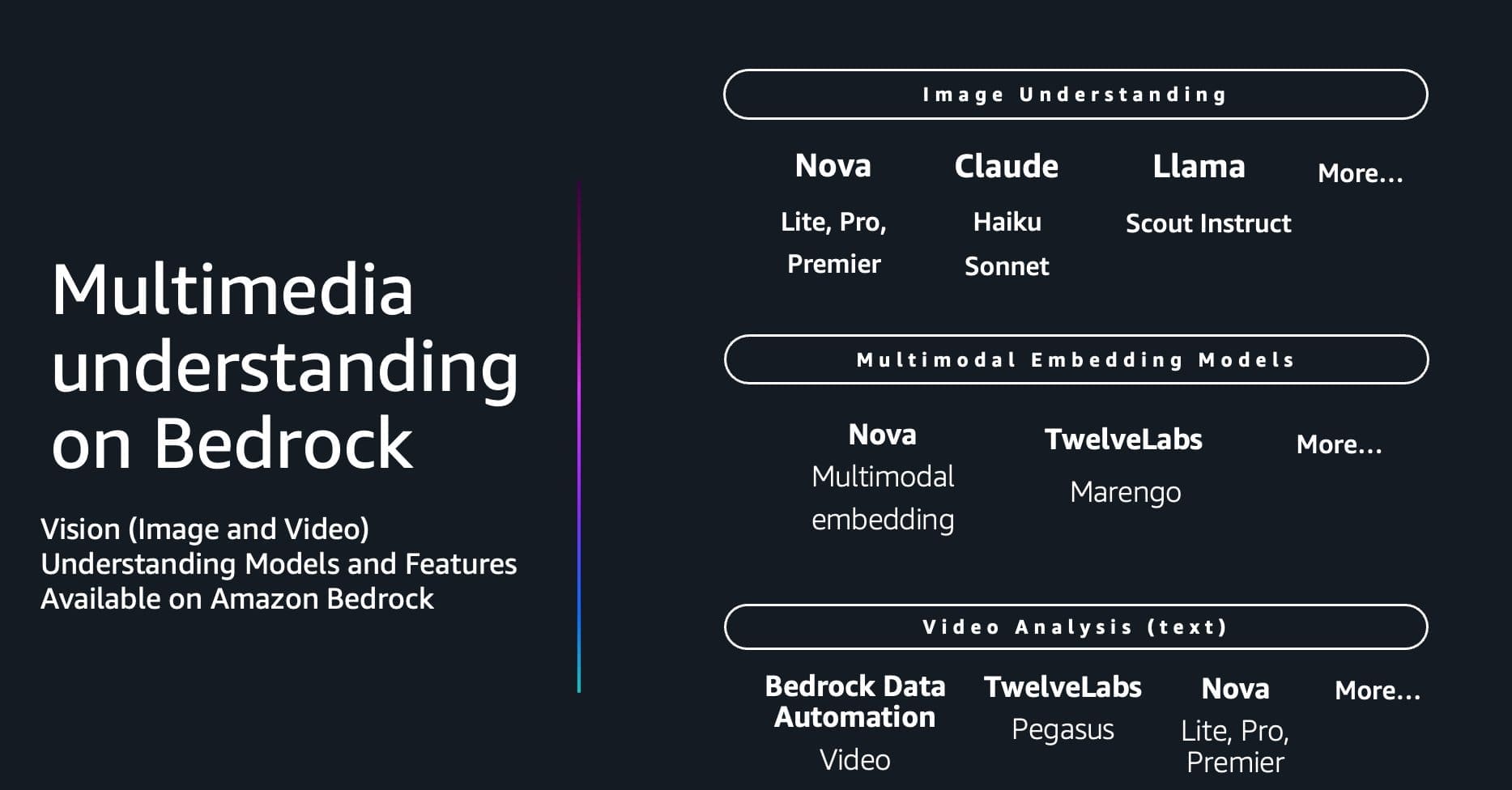

Video content is now everywhere, from security surveillance and media production to social platforms and enterprise communications. However, extracting meaningful insights from large volumes of video remains a major challenge. Organizations need solutions that can understand not only what appears in a video, but also the context, narrative, and…

Shared by AWS Machine Learning March 28, 2026

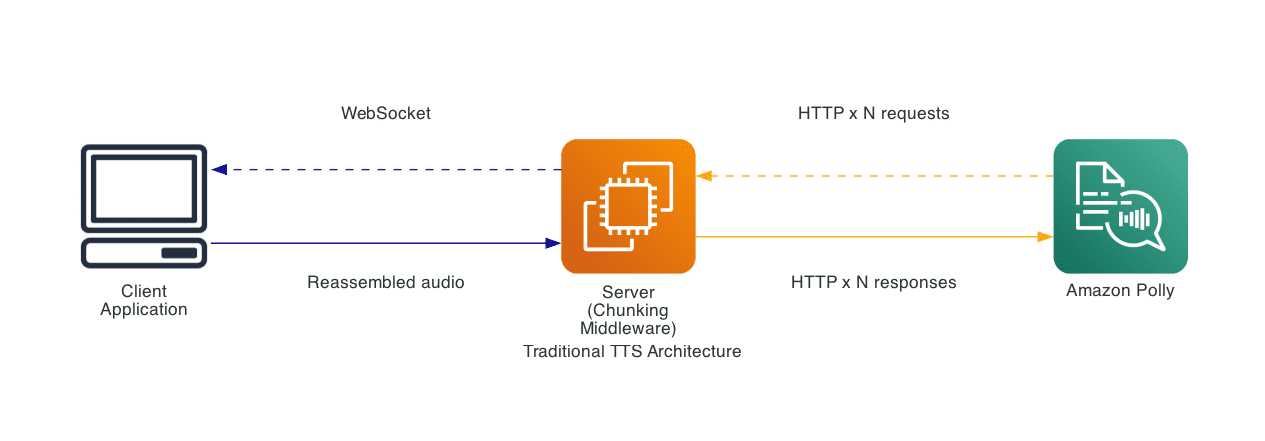

Building natural conversational experiences requires speech synthesis that keeps pace with real-time interactions. Today, we’re excited to announce the new Bidirectional Streaming API for Amazon Polly, enabling streamlined real-time text-to-speech (TTS) synthesis where you can start sending text and receiving audio simultaneously. This new API is built for conversational…

Shared by AWS Machine Learning March 28, 2026

Last year, AWS announced an integration between Amazon SageMaker Unified Studio and Amazon S3 general purpose buckets. This integration makes it straightforward for teams to use unstructured data stored in Amazon Simple Storage Service (Amazon S3) for machine learning (ML) and data analytics use cases. In this post, we…

Shared by AWS Machine Learning March 28, 2026

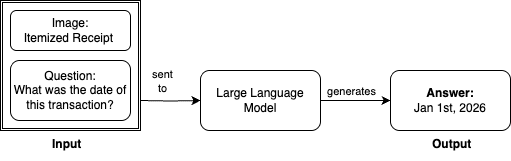

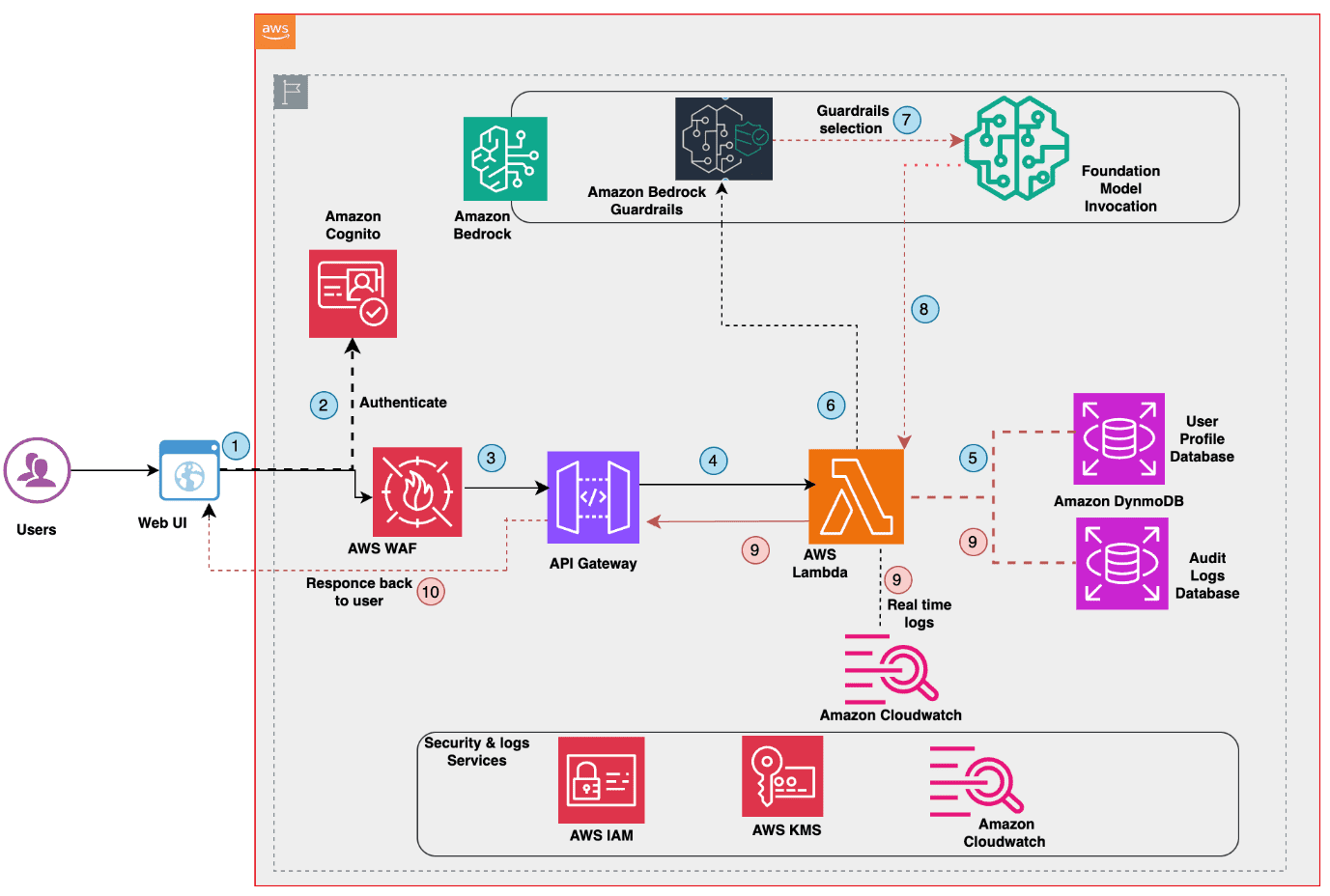

As you deploy generative AI applications to diverse user groups, you might face a significant challenge that impacts user safety and application reliability: verifying that each AI response is appropriate, accurate, and safe for the specific user receiving it. Content suitable for adults might be inappropriate or confusing for children,…

Shared by AWS Machine Learning March 28, 2026

Kia ora! Customers in New Zealand have been asking for access to foundation models (FMs) on Amazon Bedrock from their local AWS Region. Today, we’re excited to announce that Amazon Bedrock is now available in the Asia Pacific (New Zealand) Region (ap-southeast-6). Customers in New Zealand can now access…

Shared by AWS Machine Learning March 28, 2026

In the latest episode of our Dialogues on Technology and Society series, LL COOL J sits down with James Manyika. View the original source: blog.google/technology/ai/

Google Translate’s Live translate with headphones is officially arriving on iOS! And we’re expanding the capability for both iOS and Android users to even more countries… View the original source: blog.google/technology/ai/

Shared by AWS Machine Learning March 28, 2026