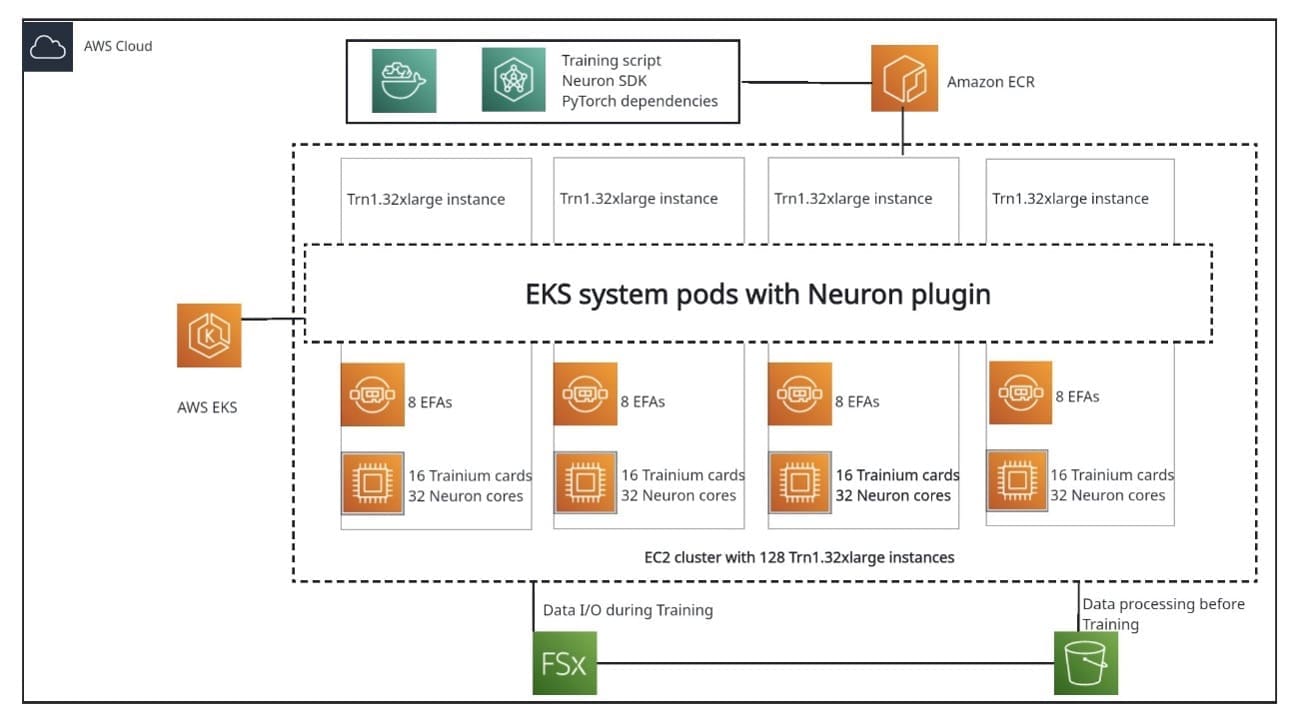

End-to-end LLM training on instance clusters with over 100 nodes using AWS Trainium

Llama is Meta AI’s large language model (LLM), with variants ranging from 7 billion to 70 billion parameters. Llama uses a transformer-based, decoder-only model architecture, which specializes in language token generation. Training a model from scratch requires a dataset containing trillions of tokens. The Llama family is

Shared by AWS Machine Learning May 30, 2024